Gaming AIs: NVIDIA Teaches A Neural Network to Recreate Pac-Man

by Ryan Smith on May 22, 2020 10:30 AM EST - Posted in

- GPUs

- Gaming

- NVIDIA

- Deep Learning

- Artificial Intelligence

Following last week’s virtual GTC keynote and the announcement of their Ampere architecture, this week NVIDIA has been holding the back-half of their conference schedule. As with the real event, the company has been posting numerous sessions on everything NVIDIA, from Ampere to CUDA to remote desktop. But perhaps the most interesting talk – and certainly the most amusing – is coming from NVIDIA’s research group.

The group, which is tasked with developing future technologies and finding new uses for current ones, is announcing today that it has taught a neural network Pac-Man.

And no, I don’t mean how to play Pac-Man. I mean how to be the game of Pac-Man.

The reveal, timed to coincide with the 40th anniversary of the ghost-munching game, comes out of NVIDIA’s research into Generative Adversarial Networks (GANs). At a very high level, a GAN is a pair of neural networks trained against each other: typically one network (the generator) learns to perform a task, such as producing images, while the other (the discriminator) learns to tell the first network’s output apart from the real thing. The goal is for this competition to make both networks better, since each has to improve in order to beat the other. In terms of practical applications, GANs are best known from research projects that generate realistic-looking images of real-world objects, upscale existing images, and perform other image synthesis and manipulation tasks.
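To make the two-network idea concrete, here is a minimal toy GAN in pure Python. It is a sketch under heavy simplifying assumptions and has nothing to do with GameGAN’s actual architecture: the “real data” is a one-dimensional Gaussian N(4, 1), the generator `g(z) = z + b` can only learn an offset `b`, and the discriminator is a single logistic unit `D(x) = sigmoid(w*x + c)`. The names `train_toy_gan`, `b`, `w`, and `c` are illustrative, and the L2 penalty on `w` is an added stabilizer for this toy, not something taken from NVIDIA’s work.

```python
import math
import random


def sigmoid(u: float) -> float:
    """Numerically stable logistic function."""
    if u >= 0:
        return 1.0 / (1.0 + math.exp(-u))
    e = math.exp(u)
    return e / (1.0 + e)


def train_toy_gan(steps: int = 4000, batch: int = 64, lr: float = 0.05,
                  weight_decay: float = 1.0, seed: int = 0) -> tuple[float, float, float]:
    rng = random.Random(seed)
    real_mean = 4.0   # "real data" is drawn from N(4, 1)
    b = 0.0           # generator g(z) = z + b with z ~ N(0, 1); starts far from the data
    w, c = 0.0, 0.0   # discriminator D(x) = sigmoid(w * x + c)

    for _ in range(steps):
        # Discriminator step: gradient ascent on mean log D(real) + mean log(1 - D(fake)),
        # minus an L2 penalty on w (an added stabilizer for this toy).
        real = [rng.gauss(real_mean, 1.0) for _ in range(batch)]
        fake = [rng.gauss(0.0, 1.0) + b for _ in range(batch)]
        d_real = [sigmoid(w * x + c) for x in real]
        d_fake = [sigmoid(w * x + c) for x in fake]
        grad_w = (sum((1.0 - d) * x for d, x in zip(d_real, real))
                  - sum(d * x for d, x in zip(d_fake, fake))) / batch - weight_decay * w
        grad_c = (sum(1.0 - d for d in d_real) - sum(d_fake)) / batch
        w += lr * grad_w
        c += lr * grad_c

        # Generator step (non-saturating loss): gradient descent on mean -log D(g(z)).
        # d/db of -log D(z + b) is -(1 - D) * w, so descending it adds (1 - D) * w to b.
        fake = [rng.gauss(0.0, 1.0) + b for _ in range(batch)]
        grad_b = sum((1.0 - sigmoid(w * x + c)) * w for x in fake) / batch
        b += lr * grad_b

    return b, w, c


b, w, c = train_toy_gan()
print(f"learned generator offset b = {b:.2f} (real data mean is 4.0)")
```

The alternating loop is the essential part: the discriminator takes a step toward telling real from fake, then the generator takes a step toward fooling the updated discriminator. The penalty on `w` is there to damp the back-and-forth oscillation that adversarial training is prone to.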

For Pac-Man, however, the researchers behind the fittingly named GameGAN project took things one step further, focusing on creating a GAN that can be taught how to emulate/generate a video game. This includes not only recreating the look of a game but, perhaps most importantly, its rules as well. In essence, GameGAN is intended to learn how a game works by watching it, much as a human would.

For their first project, the GameGAN researchers settled on Pac-Man, which is as good a starting point as any. The 1980 game has relatively simple rules and graphics, and, crucially for the training process, a complete game can be played in a short amount of time. Over 50K training “episodes”, the researchers taught a GAN how to be Pac-Man solely by having the neural network watch the game being played.
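The core idea, learning a game’s rules purely by observing states and the inputs that produced them, can be illustrated with a deliberately simple stand-in. The sketch below is not a GAN and not NVIDIA’s method: it assumes a hypothetical one-dimensional “corridor” game (`true_game_step`), records random play, and has a lookup-table model (`LearnedSimulator`) memorize which next state followed each (state, input) pair. GameGAN does this with neural networks watching the game itself; the table only shows the shape of the problem.

```python
import random
from collections import Counter, defaultdict

ACTIONS = ("left", "right", "stay")


def true_game_step(pos: int, action: str) -> int:
    """Hypothetical hidden rules: move along a 5-cell corridor, walls block movement."""
    delta = {"left": -1, "right": 1, "stay": 0}[action]
    return min(4, max(0, pos + delta))


def record_episodes(n_episodes: int, steps: int, seed: int = 0):
    """Record (state, input, next_state) triples from random play, as an observer would see them."""
    rng = random.Random(seed)
    transitions = []
    for _ in range(n_episodes):
        pos = rng.randrange(5)
        for _ in range(steps):
            action = rng.choice(ACTIONS)
            nxt = true_game_step(pos, action)
            transitions.append((pos, action, nxt))
            pos = nxt
    return transitions


class LearnedSimulator:
    """Predicts the next state for (state, input) using only observed transitions."""

    def __init__(self):
        self.counts = defaultdict(Counter)

    def observe(self, transitions):
        for state, action, nxt in transitions:
            self.counts[(state, action)][nxt] += 1

    def step(self, state: int, action: str) -> int:
        seen = self.counts.get((state, action))
        if not seen:
            return state  # never observed: assume nothing changes
        return seen.most_common(1)[0][0]


sim = LearnedSimulator()
sim.observe(record_episodes(n_episodes=200, steps=20))

# "Play" the learned model: each player input plus the current state yields the next state.
pos = 0
for action in ("right", "right", "left", "stay"):
    pos = sim.step(pos, action)
print("position after inputs:", pos)
```

Once trained, the model can be “played” by feeding it inputs, which is loosely analogous to how a playable learned game works: the current state and the player’s input go in, and the model’s prediction becomes the next state.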

And most impressive of all, the crazy thing actually works.

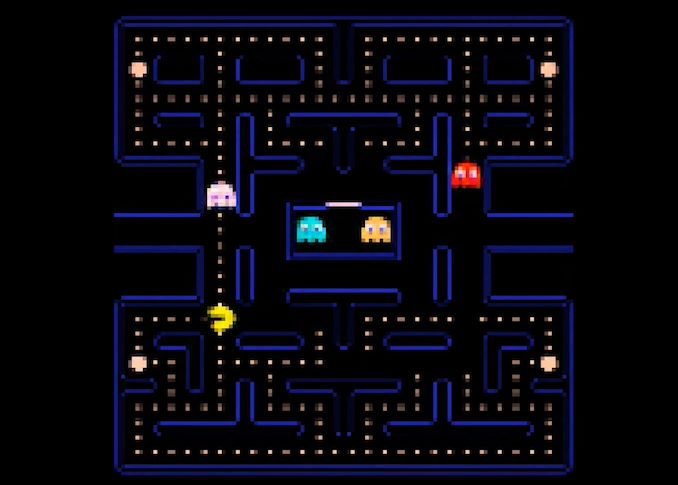

In a video released by NVIDIA, the company briefly shows off the Pac-Man-trained GameGAN in action. While the resulting game isn’t a pixel-perfect recreation of Pac-Man (notably, GameGAN’s simulated resolution is lower), it nonetheless looks and functions like the arcade version. And it’s not just for looks, either: the GameGAN version of Pac-Man accepts player input, just like the real game. In fact, while it isn’t ready for public consumption quite yet, NVIDIA has already said that it wants to release a publicly playable version this summer so that everyone can see it in action.

Fittingly for a gaming-related research project, the training and development of GameGAN was equally silly at times. Because the network needed to consume thousands upon thousands of gameplay sessions, and NVIDIA presumably doesn’t want to pay its staff to play Pac-Man all day, the researchers relied on a Pac-Man-playing bot to play the game automatically. As a result, GameGAN has essentially been taught Pac-Man by another AI. And this is not without repercussions: in their presentation, the researchers noted that because the Pac-Man bot was so good at the game, GameGAN has developed a tendency to avoid killing Pac-Man, as if that were part of the rules. Which, if nothing else, is a lot more comforting than finding out that our soon-to-be AI overlords are playing favorites.
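A quick way to see why a bot-trained model might treat survival as a rule: if a model estimates how often something happens from its training recordings, events that the recordings almost never contain get an estimated probability near zero. The sketch below uses made-up per-frame death probabilities for a hypothetical expert bot and a hypothetical casual human, plus a simple frequency estimate; GameGAN’s training is far more complex, so this only illustrates the data-bias argument.

```python
import random


def record_frames(n_frames: int, death_prob: float, seed: int) -> list[bool]:
    """Hypothetical recording: True marks a frame in which Pac-Man died."""
    rng = random.Random(seed)
    return [rng.random() < death_prob for _ in range(n_frames)]


def learned_death_rate(frames: list[bool]) -> float:
    """Frequency estimate: the fraction of observed frames that contained a death."""
    return sum(frames) / len(frames)


# Made-up skill levels: the expert bot almost never dies, a casual human dies now and then.
expert_bot = record_frames(100_000, death_prob=0.00001, seed=1)
casual_human = record_frames(100_000, death_prob=0.001, seed=2)

frames_per_session = 60 * 60 * 10  # assumed 60 frames per second, 10-minute session
for name, frames in (("bot-trained", expert_bot), ("human-trained", casual_human)):
    rate = learned_death_rate(frames)
    print(f"{name}: per-frame death rate {rate:.6f}, "
          f"~{rate * frames_per_session:.1f} expected deaths per session")
```

A model that learned only from the bot’s recordings would almost never generate a death, regardless of how the person at the controls actually plays.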

All told, training GameGAN for Pac-Man took a quad GV100 setup four days, over which time it observed 50,000 gameplay sessions. To put the amount of hardware in perspective, four GV100 GPUs add up to 84.4 billion transistors, almost 10 million times as many as are found in the original arcade game’s Z80 CPU. So while teaching a GAN how to be Pac-Man is incredibly impressive, it is, perhaps, not an especially efficient way to execute the game.
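The transistor comparison is simple arithmetic. This sketch assumes NVIDIA’s published figure of 21.1 billion transistors per GV100 and the commonly cited figure of roughly 8,500 transistors for the Z80; the exact Z80 count varies somewhat by source.

```python
GV100_TRANSISTORS = 21.1e9   # NVIDIA's published transistor count for one GV100 GPU
Z80_TRANSISTORS = 8_500      # commonly cited approximate count for the Zilog Z80 (assumption)

total = 4 * GV100_TRANSISTORS          # the quad-GV100 training setup
ratio = total / Z80_TRANSISTORS

print(f"{total / 1e9:.1f} billion transistors")          # 84.4 billion transistors
print(f"about {ratio / 1e6:.1f} million Z80s' worth")    # about 9.9 million Z80s' worth
```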

Meanwhile, figuring out how to teach a neural network to be Pac-Man has some practical goals as well. According to the research group, one big focus right now is using this concept to train simulators more quickly; simulators traditionally have to be carefully constructed by humans in order to capture all of the possible interactions. If a neural network can instead learn how something behaves by watching what happens and which inputs are being made, this could conceivably make creating simulators far faster and easier. Interestingly, the entire concept leads to something of a feedback loop, as the idea is to then use those simulators to train other neural networks to perform a task, such as driving a car, one of NVIDIA’s favorite goals.
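The simulator-to-agent loop can be sketched with the same kind of toy. Everything here is hypothetical: a six-cell corridor with a goal at cell 5 stands in for the real world, a lookup table learned from random observations stands in for a learned simulator, and tabular Q-learning (a swapped-in, much simpler technique than the neural networks NVIDIA has in mind) stands in for the agent being trained. The point is that the agent only ever interacts with the learned model, yet its policy is then deployed in the real world.

```python
import random

GOAL = 5


def real_world_step(pos: int, action: int) -> int:
    """Hypothetical 'real world': a 6-cell corridor, actions -1/+1, clamped at the walls."""
    return min(GOAL, max(0, pos + action))


def learn_simulator(n_samples: int, seed: int = 0) -> dict:
    """Watch random interactions and memorize (state, action) -> next state."""
    rng = random.Random(seed)
    model = {}
    for _ in range(n_samples):
        pos, action = rng.randrange(GOAL + 1), rng.choice((-1, 1))
        model[(pos, action)] = real_world_step(pos, action)
    return model


def train_policy_in_simulator(model: dict, episodes: int = 2000, alpha: float = 0.5,
                              gamma: float = 0.9, epsilon: float = 0.3, seed: int = 0) -> dict:
    """Tabular Q-learning that queries only the learned model, never the real world."""
    rng = random.Random(seed)
    q = {(s, a): 0.0 for s in range(GOAL + 1) for a in (-1, 1)}
    for _ in range(episodes):
        s = rng.randrange(GOAL)
        for _ in range(20):
            if rng.random() < epsilon:
                a = rng.choice((-1, 1))  # explore
            else:
                a = max((-1, 1), key=lambda act: q[(s, act)])  # exploit
            s2 = model.get((s, a), s)  # unseen transitions: assume nothing happens
            reward = 1.0 if s2 == GOAL else 0.0
            best_next = 0.0 if s2 == GOAL else max(q[(s2, -1)], q[(s2, 1)])
            q[(s, a)] += alpha * (reward + gamma * best_next - q[(s, a)])
            s = s2
            if s == GOAL:
                break
    return {s: max((-1, 1), key=lambda act: q[(s, act)]) for s in range(GOAL)}


policy = train_policy_in_simulator(learn_simulator(n_samples=200))

# Deploy the simulator-trained policy in the real world, starting from cell 0.
pos = 0
for _ in range(10):
    if pos == GOAL:
        break
    pos = real_world_step(pos, policy[pos])
print("reached goal:", pos == GOAL)
```

If the learned simulator were wrong about some transition, the agent would inherit that mistake, which is the same kind of risk the Pac-Man bot story illustrates.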

Ultimately, whether it leads to real-world payoffs or not, there’s something amusingly human about a neural network learning a game by observing – even (and especially) if it doesn’t always learn the desired lesson.

Source: NVIDIA

20 Comments

brucethemoose - Friday, May 22, 2020

"All told, training the GameGAN for Pac-Man took a quad GV100 setup four days, over which time it monitored 50,000 gameplay sessions. Which, to put things in perspective of the amount of hardware used, 4 GV100 GPUs is 84.4 billion transistors, almost 10 million times as many transistors as are found in the original arcade game’s Z80 CPU. So while teaching a GAN how to be a Pac-Man is incredibly impressive, it is, perhaps, not an especially efficient way to execute the game"

Note that it took 4 days to *train*, not execute. A more appropriate analogy would be development time... aka X months of meatbags on typewriters.

I do wonder what it takes to run at 50 FPS. The plans for a demo suggest you don't need a DGX box that costs more than a house, but can it execute on, say, an 8GB RTX 2070?

Lord of the Bored - Friday, May 22, 2020

I'd hope that running Pac-Man on a 2070 at least equals the original 60FPS.

jeremyshaw - Saturday, May 23, 2020

Costs more than a house? Ah, you don't live in this nightmare world called Silicon Valley. Hopefully, remote work will allow people to think of $200k as a house, lol.

p1esk - Sunday, May 24, 2020

Quad GV100 setup is ~$40k. Where did you get $200k from? Also, I don't think $200k will get you a decent house anywhere. Certainly not in Houston, TX.

skavi - Tuesday, May 26, 2020

They are referring to the above mentioned DGX box

eastcoast_pete - Friday, May 22, 2020

So, how did the AI deal with the copyright/IP issues around PacMan? If it managed to find a loophole by itself, I'd really be impressed (:

UltraWide - Friday, May 22, 2020

LOL

Fortunately it was in collaboration with NAMCO for their 40th anniversary for PAC-MAN!

edzieba - Friday, May 22, 2020

It created the entire game from scratch, no original code or sprites were copied.

soresu - Friday, May 22, 2020

Well, not really from scratch when it takes 50,000 repetitions of observing the original game to do it - it's basically the neural net equivalent of a savant copying a painting, except in motion and playable.

The code and the sprites are there, the AI is just taking a guess at the former and copying the latter from what it sees on screen.

I'd be much more impressed if it really created Pac Man from scratch using no more than a description of game play.

I'll be even more impressed when it can meet and surpass the efforts of ReactOS in reverse engineering Windows (at this point they may as well give up).