Analysis of the new Apple iPad

by Anand Lal Shimpi on March 9, 2012 1:37 AM EST

Yesterday Apple unveiled its third-generation iPad, simply called the new iPad, at an event in San Francisco. The form factor remains mostly unchanged with a 9.7-inch display; however, the new device is thicker at 9.4mm vs. 8.8mm for its predecessor. The added thickness was necessary to support the iPad's new 2048 x 1536 Retina Display.

Tablet Specification Comparison

| | ASUS Transformer Pad Infinity | Apple's new iPad (2012) | Apple iPad 2 |
|---|---|---|---|
| Dimensions | 263 x 180.8 x 8.5mm | 241.2 x 185.7 x 9.4mm | 241.2 x 185.7 x 8.8mm |
| Display | 10.1-inch 1920 x 1200 Super IPS+ | 9.7-inch 2048 x 1536 IPS | 9.7-inch 1024 x 768 IPS |
| Weight (WiFi) | 586g | 652g | 601g |
| Weight (4G LTE) | 586g | 662g | 601g |
| Processor (WiFi) | 1.6GHz NVIDIA Tegra 3 T33 (4 x Cortex A9) | Apple A5X (2 x Cortex A9, PowerVR SGX 543MP4) | 1GHz Apple A5 (2 x Cortex A9, PowerVR SGX 543MP2) |
| Processor (4G LTE) | 1.5GHz Qualcomm Snapdragon S4 MSM8960 (2 x Krait) | Apple A5X (2 x Cortex A9, PowerVR SGX 543MP4) | 1GHz Apple A5 (2 x Cortex A9, PowerVR SGX 543MP2) |
| Connectivity | WiFi, Optional 4G LTE | WiFi, Optional 4G LTE | WiFi, Optional 3G |
| Memory | 1GB | 1GB | 512MB |
| Storage | 16GB - 64GB | 16GB - 64GB | 16GB |
| Battery | 25Whr | 42.5Whr | 25Whr |
| Pricing | $599 - $799 (est.) | $499 - $829 | $399, $529 |

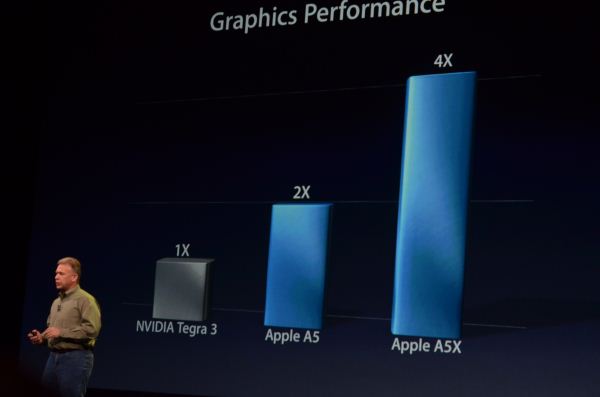

Driving the new display is Apple's A5X SoC. Apple hasn't been too specific about what's inside the A5X other than to say it features "quad-core graphics". Upon further prodding, Apple did confirm that there are two CPU cores inside the SoC. It's safe to assume that the A5X still uses two Cortex A9s, now alongside a PowerVR SGX 543MP4 instead of the 543MP2 used in the iPad 2. The chart below gives us an indication of the performance Apple expects to see from the A5X's GPU vs. what's in the A5:

Apple ran the PowerVR SGX 543MP2 in its A5 SoC at around 250MHz, which puts it at 16 GFLOPS of peak theoretical compute horsepower. NVIDIA claims the GPU in Tegra 3 is clocked higher than Tegra 2's, which ran at around 300MHz. In practice, Tegra 3 GPU clocks range from 333MHz on the low end for smartphones to as high as 500MHz on the high end for tablets. If we assume a 333MHz GPU clock in Tegra 3, that puts NVIDIA at roughly 8 GFLOPS, which explains the 2x advantage Apple claims in the chart above. The real-world performance gap isn't anywhere near that large, of course, particularly if you run on a device with a ~500MHz GPU clock (12 GFLOPS):

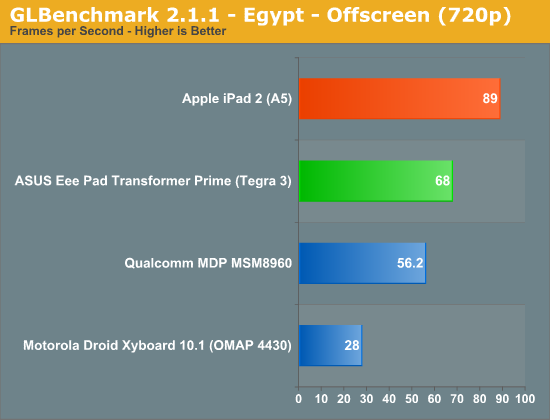

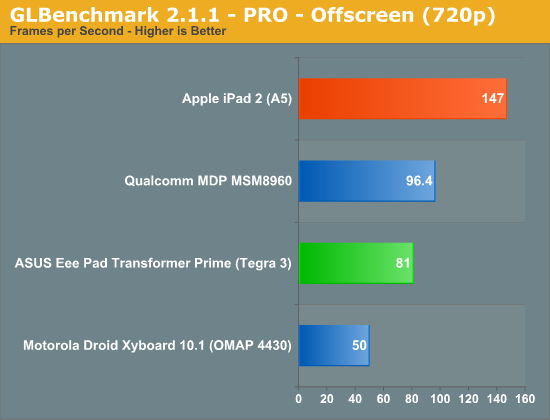
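The GFLOPS figures quoted above come from counting each multiply-add (MAD) as two floating-point operations and multiplying by clock speed. The short Python sketch below reproduces that arithmetic. It assumes the MADs-per-clock values from the Mobile SoC GPU Comparison table in this article (32 for the SGX 543MP2, 12 for Tegra 3's GeForce) and treats the quoted clocks as exact; `peak_gflops` is a hypothetical helper written for illustration, not a vendor tool.

```python
# Peak theoretical GPU compute: each MAD (multiply-add) counts as 2 FLOPs.
# MADs-per-clock and clock values are assumed from the article's GPU table.

def peak_gflops(mads_per_clock: int, clock_mhz: float) -> float:
    """Return peak GFLOPS for a GPU issuing `mads_per_clock` MADs each cycle."""
    flops_per_clock = mads_per_clock * 2
    return flops_per_clock * clock_mhz / 1000.0  # MFLOPS -> GFLOPS

a5 = peak_gflops(32, 250)          # PowerVR SGX 543MP2 in the A5
tegra3_min = peak_gflops(12, 333)  # Tegra 3 GeForce at smartphone clocks
tegra3_max = peak_gflops(12, 500)  # Tegra 3 GeForce at tablet clocks

print(f"A5: {a5:.1f} GFLOPS")
print(f"Tegra 3 @ 333MHz: {tegra3_min:.1f} GFLOPS -> A5 is {a5 / tegra3_min:.2f}x")
print(f"Tegra 3 @ 500MHz: {tegra3_max:.1f} GFLOPS -> A5 is {a5 / tegra3_max:.2f}x")
```

Against a 333MHz Tegra 3 the ratio comes out at about 2x, matching Apple's claim, while against a 500MHz part it drops to about 1.33x, which is in line with the "just over 30%" gap a mostly compute-limited benchmark would show.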

GLBenchmark 2.1.1's Egypt offscreen test pegs the PowerVR SGX 543MP2 advantage at just over 30%, at least at 1280 x 720. Based on the raw floating-point numbers for a 500MHz Tegra 3 GPU vs. a 250MHz PowerVR SGX 543MP2 (12 vs. 16 GFLOPS), a performance advantage of around 30% is what you'd expect from a mostly compute-limited workload. It's possible that the gap could grow at higher resolutions or with a different workload. For example, look at the older GLBenchmark PRO results and you will see a 2x gap in graphics performance:

For most real-world gaming workloads I do believe that the A5 is faster than Tegra 3, but the advantage is unlikely to be 2x at non-Retina Display resolutions. The same applies to the A5X vs. Tegra 3 comparison. I fully expect there to be a significant performance gap at the same resolution, but I doubt it is 4x in a game.

Mobile SoC GPU Comparison

| | Apple A4 | Apple A5 | Apple A5X | Tegra 3 (max) | Tegra 3 (min) | Intel Z2580 |
|---|---|---|---|---|---|---|
| GPU | PowerVR SGX 535 | PowerVR SGX 543MP2 | PowerVR SGX 543MP4 | GeForce | GeForce | PowerVR SGX 544MP2 |
| MADs per Clock | 4 | 32 | 64 | 12 | 12 | 32 |
| Clock Speed | 250MHz | 250MHz | 250MHz | 500MHz | 333MHz | 533MHz |
| Peak Compute | 2.0 GFLOPS | 16.0 GFLOPS | 32.0 GFLOPS | 12.0 GFLOPS | 8.0 GFLOPS | 34.1 GFLOPS |

The A5X doubles GPU execution resources compared to the A5. Imagination Technologies' PowerVR SGX 543 is modular: you can scale it up simply by increasing the "core" count. Apple tells us all we need to know about clock speed in the chart above: with 2x the execution resources and 2x the performance of the A5, the A5X's GPU must be running at the same clock as the A5's.

Assuming perfect scaling, I'd expect around a 2x performance gain over Tegra 3 in GLBenchmark (Egypt) at 720p. Again, not 4x, but at the same time hardly insignificant. It can take multiple generations of GPUs to deliver that sort of performance advantage at a similar price point. Granted, Apple has no problem eating the cost of a larger, more expensive die, but that doesn't change the fact that the GPU advantage Apple will hold thanks to the A5X is generational.

I'd also point out that the theoretical GPU performance of the A5X is nearly identical to what Intel is promising with its Atom Z2580 SoC (32.0 vs. 34.1 GFLOPS). Apple arrives there with four SGX 543 cores, while Intel gets there with two SGX 544 cores running at ~2x the frequency (533MHz vs. 250MHz).
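The two routes to the same throughput can be made concrete by writing peak compute as cores x MADs per core x 2 FLOPs x clock. The sketch below assumes 16 MADs per clock per core for both the SGX 543 and SGX 544, a figure derived by dividing the table's MADs-per-clock values by core count (64 / 4 and 32 / 2) rather than taken from an Imagination spec sheet; `GpuConfig` is a hypothetical type for illustration only.

```python
from dataclasses import dataclass

# Peak compute = cores * MADs per core per clock * 2 FLOPs per MAD * clock.
# Assumption: 16 MADs/clock per core, derived from the article's table
# (SGX 543MP4: 64 / 4 cores; SGX 544MP2: 32 / 2 cores).

@dataclass
class GpuConfig:
    name: str
    cores: int
    mads_per_core: int  # MADs issued per clock by one core
    clock_mhz: float

    def peak_gflops(self) -> float:
        # 2 FLOPs per MAD; divide by 1000 to go from MFLOPS to GFLOPS
        return self.cores * self.mads_per_core * 2 * self.clock_mhz / 1000.0

a5x = GpuConfig("A5X (SGX 543MP4)", cores=4, mads_per_core=16, clock_mhz=250)
z2580 = GpuConfig("Z2580 (SGX 544MP2)", cores=2, mads_per_core=16, clock_mhz=533)

for gpu in (a5x, z2580):
    print(f"{gpu.name}: {gpu.peak_gflops():.1f} GFLOPS")
print(f"Z2580 / A5X: {z2580.peak_gflops() / a5x.peak_gflops():.3f}")
```

Doubling the core count at 250MHz and doubling the clock with half the cores land within about 7% of each other on paper; the wide-and-slow approach costs die area, while the narrow-and-fast one costs clock speed and, typically, power.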

With the new iPad's Retina Display delivering 4x the pixels of the iPad 2, a 2x increase in GPU horsepower isn't enough to maintain performance. If you remember our iPad 2 review, however, the PowerVR SGX 543MP2 was largely overkill for its 1024 x 768 display. It's likely that a 4x increase in GPU horsepower wasn't necessary to deliver a similar experience in games. Also keep in mind that memory bandwidth limitations will keep many titles from running at the new iPad's native resolution. Remember that we need huge GPUs with hundreds of GB/s of memory bandwidth to deliver a high frame rate on 3 - 4MP PC displays. I'd expect many games to render at lower resolutions and possibly scale up to fit the panel.
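One rough way to see why 2x the GPU for 4x the pixels halves the headroom is to divide peak compute by the number of pixels rendered. This is a crude model, assumed for illustration: it supposes GPU work scales linearly with pixel count and ignores memory bandwidth entirely, which the paragraph above notes is a real limit at these resolutions. The peak figures (16 and 32 GFLOPS) come from the GPU comparison table; `gflops_per_megapixel` is a hypothetical helper.

```python
# Crude per-pixel compute budget: peak GFLOPS divided by megapixels rendered.
# Assumes GPU work scales linearly with pixel count; ignores memory bandwidth.

def gflops_per_megapixel(peak_gflops: float, width: int, height: int) -> float:
    megapixels = width * height / 1e6
    return peak_gflops / megapixels

ipad2 = gflops_per_megapixel(16.0, 1024, 768)            # A5 at native resolution
new_ipad = gflops_per_megapixel(32.0, 2048, 1536)        # A5X at native resolution
new_ipad_scaled = gflops_per_megapixel(32.0, 1024, 768)  # A5X rendering at iPad 2 res, upscaled

print(f"Pixel ratio: {(2048 * 1536) / (1024 * 768):.1f}x")
print(f"iPad 2 (A5) @ 1024x768:     {ipad2:.1f} GFLOPS/MP")
print(f"new iPad (A5X) @ 2048x1536: {new_ipad:.1f} GFLOPS/MP")
print(f"new iPad (A5X) @ 1024x768:  {new_ipad_scaled:.1f} GFLOPS/MP")
```

Under this model, native-resolution rendering on the A5X gets half the per-pixel budget of the iPad 2, while rendering at 1024 x 768 and scaling up doubles it, which is why lower internal render resolutions are an attractive option for games.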

What About the Display?

Performance specs aside, the iPad's Retina Display does look amazing. The 1024 x 768 panel in the older models was simply getting long in the tooth and the Retina Display ensures Apple won't need to increase screen resolution for a very long time. Apple also increased color gamut by 44% with the panel, but the increase in resolution alone is worth the upgrade for anyone who spends a lot of time reading on their iPad. The photos below give you an idea of just how sharp text and graphics are on the new display compared to its predecessor (iPad 2, left vs. new iPad, right):

The improvement is dramatic in these macro shots but I do believe that it's just as significant in normal use.

Apple continues to invest heavily in the aspects of its devices that users interact with the most frequently. Spending a significant amount of money on the display makes a lot of sense. Kudos to Apple for pushing the industry forward here. The only downside is supply of these greater-than-HD panels is apparently very limited as a result of Apple buying up most of the production from as many as three different panel vendors. It will be a while before we see Android tablets with comparable resolutions, although we will see 1920 x 1200 Android tablets shipping in this half.

161 Comments


robinthakur - Tuesday, March 13, 2012

I've noticed that the trend in organisations is to enable users' personal devices for company data, and the iPad and iPhone are spearheading that. The last 4 companies I've worked for as a contractor were all issuing company iPads as well as enabling people's own devices, having changed their IT policy in response to user/management pressure. This is not just small companies either; BoA have now started doing it. Using Exchange you can enforce passcodes and remotely wipe the devices. This is the current trend, and I find it hard to believe that MS will have anything on the market that will remotely worry Apple. It's not like you can use all the old Windows software on a Windows 8 tablet running on ARM, and users still have to learn a new and somewhat unintuitive interface, so where's the win there? People have got used to the super intuitive iOS since 2007 and they like it, hence the change. To most users, even in business, Windows is far too complicated for its own good. I see this every day. The only possible win is decent Office/SharePoint integration, but since Office will shortly be arriving on iPad and there are already many solutions in the App Store for SharePoint integration, it's something of a moot point IMO. The only things Windows had going for it were compatibility and a familiar interface that people had grown up with, which the decent Windows 7 offers. This is why the phones failed and this is also why Windows 8 will underperform. Windows 7 will survive in a similar way to XP, far past MS's predictions.

wilmarkj - Sunday, March 11, 2012

All current 30" monitors are already 4 MP, but they cost around $1,100. They are high quality (16-bit color and SIPS), and that's at the same dpi as current 24" 1920x1200 monitors, not anywhere close to Retina quality, which I thought was over 300 dpi.

solipsism - Sunday, March 11, 2012

It's a function of dpi/ppi and distance from your retina. That's why an HDTV could be considered a Retina Display at far fewer pixels per inch than a handheld device. The equation for 20/20 vision is: 3438 * (1/x) = y, where x is the minimum distance (in inches) the display has to be placed from your eyes and y is the number of pixels per inch.

If you have a 46" 1080p HDTV, that is 48 PPI, so the equation is: 3438 * (1/x) = 48, which means you need to sit about 72 inches (6 feet) away for the pixels to become indistinguishable. Of course, there are other factors involved, but that is the basis of the definition.
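The rule of thumb above can be turned into a small calculator. The sketch assumes pixel density is measured along the diagonal and that 20/20 vision resolves one arcminute; the constant 1 / tan(1 arcminute) works out to about 3437.7, which is where the 3438 in the equation comes from. The helper names `ppi` and `retina_distance_in` are hypothetical, and, as the comment says, real perception depends on other factors too.

```python
import math

# Rule of thumb: pixels blend together for 20/20 vision once
# viewing distance (inches) >= 3438 / PPI, where 3438 ~= 1 / tan(1 arcminute).
ARCMINUTE_CONSTANT = 1 / math.tan(math.radians(1 / 60))  # ~3437.7

def ppi(width_px: int, height_px: int, diagonal_in: float) -> float:
    """Pixels per inch measured along the screen diagonal."""
    return math.hypot(width_px, height_px) / diagonal_in

def retina_distance_in(pixels_per_inch: float) -> float:
    """Minimum viewing distance (inches) at which individual pixels blend."""
    return ARCMINUTE_CONSTANT / pixels_per_inch

for name, w, h, diag in [
    ('46" 1080p HDTV', 1920, 1080, 46.0),
    ("new iPad", 2048, 1536, 9.7),
    ("iPad 2", 1024, 768, 9.7),
]:
    density = ppi(w, h, diag)
    dist = retina_distance_in(density)
    print(f"{name}: {density:.0f} PPI, retina beyond ~{dist:.0f} in ({dist / 12:.1f} ft)")
```

With these assumptions the HDTV needs roughly 6 feet of viewing distance, while the new iPad's ~264 PPI panel crosses the threshold at around 13 inches, a typical tablet reading distance.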

Mitch89 - Tuesday, March 13, 2012

That's why most people can't tell the difference between 720p and 1080p on their HDTVs.

Mitch89 - Tuesday, March 13, 2012

4K does not equal 4 megapixels. A 4K display would have more than 7 megapixels. 4K refers to the width of the display in pixels, likely 4096x2560 in a 16:10 aspect ratio.

To put that in perspective, most current 30" displays (Dell, Apple, etc) are 2560px wide.

I for one am looking forward to that, with some better UI scaling. My 27" Dell 2709W looks great at 1920x1200 for readability, but if that resolution were doubled like the new iPad's (more pixels, same view), it would be incredibly smooth.

steven75 - Sunday, March 11, 2012

They'll buy iPads because Win8 (Metro interface) won't have the 200,000 apps the iPad does.

tdawg - Friday, March 9, 2012

Agreed. I'd love a high-resolution 27" or 30" monitor, but I'm not willing to pay more than $400-$500 for a PC monitor. If panel prices can be driven down to my price point, I'd be happy.

Mitch89 - Tuesday, March 13, 2012

I'm tempted to replace my Dell 2709W with a U2711 just for the relatively minor resolution bump (2560x1440 vs. 1920x1200). The drop to 16:9 is a shame, but having recently purchased dual U2711s for an editing suite I built for a friend, I can say they are awesome, not to mention sharply priced.

RHurst - Friday, March 9, 2012

YES! Couldn't agree more.

Sabresiberian - Tuesday, March 13, 2012

I agree: a pixel density higher than 200 PPI, 16:10, at about 27" or 30". 200Hz would be great, too. (I'll settle for 120Hz, though.)

You know, this technology isn't new. There were LCD panels made with that kind of density over a decade ago:

http://en.wikipedia.org/wiki/IBM_T220/T221_LCD_mon...

Of course, they were extremely expensive back then ($18,000), but tablets prove they don't have to be today.

One thing I DO NOT want is a screen with a ratio less than 16:10, and a lot of these "4K" displays are worse than 16:9.