As HPC Chip Sizes Grow, So Does the Need For 1kW+ Chip Cooling

by Anton Shilov on June 27, 2022 10:00 AM EST

Posted in: Semiconductors, Immersion, TSMC, CoWoS, 3D Packaging, 3DFabric, InFO

One trend in the high performance computing (HPC) space that is becoming increasingly clear is that power consumption per chip and per rack unit is not going to stop at the limits of air cooling. Supercomputers and other high performance systems have already hit, and in some cases exceeded, these limits, yet power requirements and power densities have continued to scale up. And based on the news from TSMC's recent annual technology symposium, we should expect to see this trend continue as TSMC lays the groundwork for even denser chip configurations.

The problem at hand is not a new one: transistor power consumption isn't scaling down nearly as quickly as transistor sizes. And as chipmakers are not about to leave performance on the table (and fail to deliver semi-annual increases for their customers), in the HPC space, power per transistor is quickly growing. As an additional wrinkle, chiplets are paving the way towards constructing chips with even more silicon than traditional reticle limits allow, which is good for performance and latency, but even more problematic for cooling.

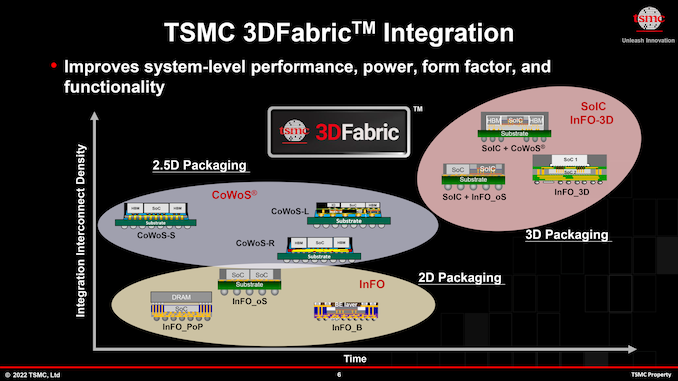

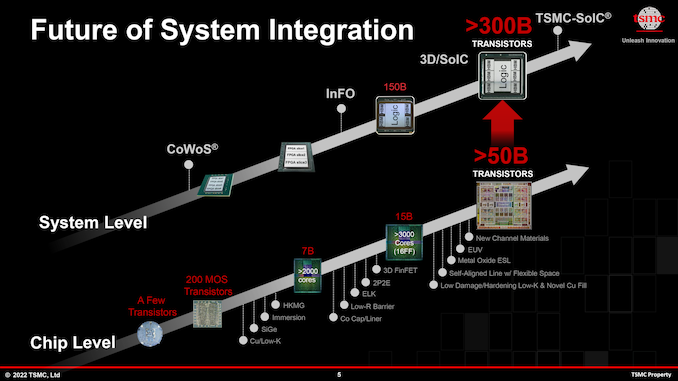

Enabling this kind of silicon and power growth have been modern technologies like TSMC's CoWoS and InFO, which allow chipmakers to build integrated multi-chiplet system-in-packages (SiPs) with as much as double the amount of silicon otherwise allowed by TSMC's reticle limits. By 2024, advancements in TSMC's CoWoS packaging technology will enable building even larger multi-chiplet SiPs, with TSMC anticipating stitching together upwards of four reticle-sized chiplets. This will enable tremendous levels of complexity (over 300 billion transistors per SiP is a possibility that TSMC and its partners are looking at) and performance, but naturally at the cost of formidable power consumption and heat generation.

Already, flagship products like NVIDIA's H100 accelerator module require upwards of 700W of power for peak performance. So the prospect of multiple GH100-sized chiplets on a single product is raising eyebrows – and power budgets. TSMC envisions that several years down the road there will be multi-chiplet SiPs with a power consumption of around 1000W or even higher, creating a cooling challenge.
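As a rough sanity check on these figures, the sketch below scales a single GH100-class die up to the multi-chiplet packages described above. The per-die numbers (about 80 billion transistors on roughly 814 mm², as NVIDIA has published for GH100), the 26 mm x 33 mm reticle field, and the naive assumption that power grows linearly with chiplet count are illustrative assumptions, not TSMC projections. Note also that 700W is module power, not die power.

```python
# Back-of-envelope scaling of a multi-chiplet SiP.
# Assumptions (not from TSMC): per-die figures are NVIDIA's published GH100
# numbers, and power is scaled linearly with chiplet count as a naive bound.

RETICLE_LIMIT_MM2 = 26 * 33      # standard lithography field: 858 mm^2
GH100_TRANSISTORS_BN = 80        # billions of transistors per die
GH100_DIE_MM2 = 814              # approximate die area
H100_MODULE_W = 700              # whole-module power quoted in the article


def scale_sip(chiplets: int) -> dict:
    """Return naive totals for a package of `chiplets` GH100-class dies."""
    if chiplets < 1:
        raise ValueError("chiplets must be at least 1")
    return {
        "transistors_bn": chiplets * GH100_TRANSISTORS_BN,
        "silicon_mm2": chiplets * GH100_DIE_MM2,
        "max_silicon_mm2": chiplets * RETICLE_LIMIT_MM2,
        "naive_power_w": chiplets * H100_MODULE_W,
    }


if __name__ == "__main__":
    for n in (1, 2, 4):
        print(n, scale_sip(n))
```

Under these assumptions, four such dies come to 320 billion transistors, which lines up with the "over 300 billion" possibility. Linear power scaling would give 2800W, far above the roughly 1000W TSMC envisions, so real designs would presumably not run every chiplet at today's H100 power levels.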

At 700W, H100 already requires liquid cooling, and the story is much the same for the chiplet-based Ponte Vecchio from Intel and AMD's Instinct MI250X. But even traditional liquid cooling has its limits. By the time chips reach a cumulative 1 kW, TSMC envisions that datacenters will need to use immersion liquid cooling systems for such extreme AI and HPC processors. Immersion liquid cooling, in turn, will require rearchitecting datacenters themselves, which will be a major change in design and a major challenge in continuity.

The short-term challenges aside, once datacenters are set up for immersion liquid cooling, they will be ready for even hotter chips. Liquid immersion cooling has a lot of potential for handling large cooling loads, which is one reason why Intel is investing heavily in this technology in an attempt to make it more mainstream.

In addition to immersion liquid cooling, there is another technology that can be used to cool down ultra-hot chips: on-chip water cooling. Last year TSMC revealed that it had experimented with on-chip water cooling and said that even 2.6 kW SiPs could be cooled down using this technology. But of course, on-chip water cooling is an extremely expensive technology by itself, which will drive costs of those extreme AI and HPC solutions to unprecedented levels.
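To give a sense of the heat loads involved, the steady-state energy balance Q = m_dot * c_p * dT gives the water flow needed to carry away a given power. The sketch below applies it to the 1 kW and 2.6 kW figures. The 10 K coolant temperature rise and the water properties are assumed values for illustration, not numbers from TSMC.

```python
# Water flow needed to remove a heat load, from Q = m_dot * c_p * dT.
# Assumptions: liquid water near room temperature, 10 K coolant temperature rise.

WATER_CP_J_PER_KG_K = 4186.0    # approximate specific heat of liquid water
WATER_DENSITY_KG_PER_L = 1.0    # approximate density of liquid water


def water_flow_l_per_min(power_w: float, delta_t_k: float = 10.0) -> float:
    """Return the volumetric flow (L/min) that absorbs power_w with a delta_t_k rise."""
    if delta_t_k <= 0:
        raise ValueError("delta_t_k must be positive")
    mass_flow_kg_per_s = power_w / (WATER_CP_J_PER_KG_K * delta_t_k)
    return mass_flow_kg_per_s / WATER_DENSITY_KG_PER_L * 60.0


if __name__ == "__main__":
    for watts in (1000.0, 2600.0):
        print(f"{watts:.0f} W -> {water_flow_l_per_min(watts):.2f} L/min")
```

With these assumptions, 1 kW needs roughly 1.4 L/min and 2.6 kW roughly 3.7 L/min. The flow itself is modest; the harder part is moving that heat from a small die area into the coolant, which this simple balance does not model.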

Nonetheless, while the future isn't set in stone, seemingly it has been cast in silicon. TSMC's chipmaking clients have customers willing to pay top dollar for those ultra-high-performance solutions (think operators of hyperscale cloud datacenters), even with the high costs and technical complexity that entails. Which, to bring things back to where we started, is why TSMC has been developing CoWoS and InFO packaging processes in the first place – because there are customers ready and eager to break the reticle limit via chiplet technology. We're already seeing some of this today with products like Cerebras' massive Wafer Scale Engine processor, and via large chiplets, TSMC is preparing to make smaller (but still reticle-breaking) designs more accessible to their wider customer base.

Such extreme requirements for performance, packaging, and cooling not only push producers of semiconductors, servers, and cooling systems to their limits, but also require modifications of cloud datacenters. If indeed massive SiPs for AI and HPC workloads become widespread, cloud datacenters will be completely different in the coming years.

40 Comments


HappyCracker - Tuesday, June 28, 2022

I work in the enterprise data center space. This is a true statement. We've tested rack-scale liquid cooling for servers (basically the same as your home PC with a centralized manifold). It works well and does drop the overall energy consumption of the system (those fans draw some power). The other advantage is that you can move the heat to a more convenient location compared to the traditional hot/cold aisle approach.

FunBunny2 - Tuesday, June 28, 2022

"There should be a decent energy efficiency gain using liquid cooling"

would be nice if it were true, but the Laws of Thermodynamics make it impossible.

davedriggers - Tuesday, June 28, 2022

Direct to Chip cooling leveraging water can easily handle these power levels. Direct to Chip has been leveraged for over a decade and at these power levels is actually fairly inexpensive, relative to the cost of the processors being created. The 700 watt Nvidia H100 will cost over $30,000 each; even if the cooling solution costs $1/watt (should be lower at scale), that would be just over a 2% premium. Direct to Chip cooling is also extremely efficient at scale, which would likely make up for it in operational costs as well as improving reliability and performance. The majority of the OEMs are planning on supporting Direct to Chip for the H100 GPUs, so we should start to see more off-the-shelf solutions entering the market vs. custom one-off cooling systems fairly soon.

mode_13h - Monday, July 4, 2022

By "direct to chip" water cooling, I take it you mean having a waterblock on each processor?

davedriggers - Tuesday, July 5, 2022

Yes, vs. liquid to the rack, i.e. rear door heat exchanger.

Jp7188 - Wednesday, June 29, 2022

I can see a day when it becomes the norm to install a geo loop under the datacenter foundation at time of construction. The ground can absorb a lot of heat and direct cooling of water pipes in this fashion is very efficient.

mode_13h - Monday, July 4, 2022

> The ground can absorb a lot of heat

Well, the thermal gradient should stabilize over time, eventually resulting in less efficient heat disposal. If the datacenter is located somewhere that gets cold, then you could get rid of some built-up heat during the winter. That's the only way it makes much sense to me.

alpha754293 - Thursday, June 30, 2022

Direct die evaporative cooling is nothing new.

My college housemates and I have been talking about that since AT LEAST as early as 2007.

Back then, I was already trying to design my own custom waterblock to water cool/liquid cool a Socket940 AMD Opteron, as a 3rd year mechanical engineering student.

This is nothing new.
