Investigating Cavium's ThunderX: The First ARM Server SoC With Ambition

by Johan De Gelas on June 15, 2016 8:00 AM EST

Posted in: SoCs, IT Computing, Enterprise, Enterprise CPUs, Microserver, Cavium

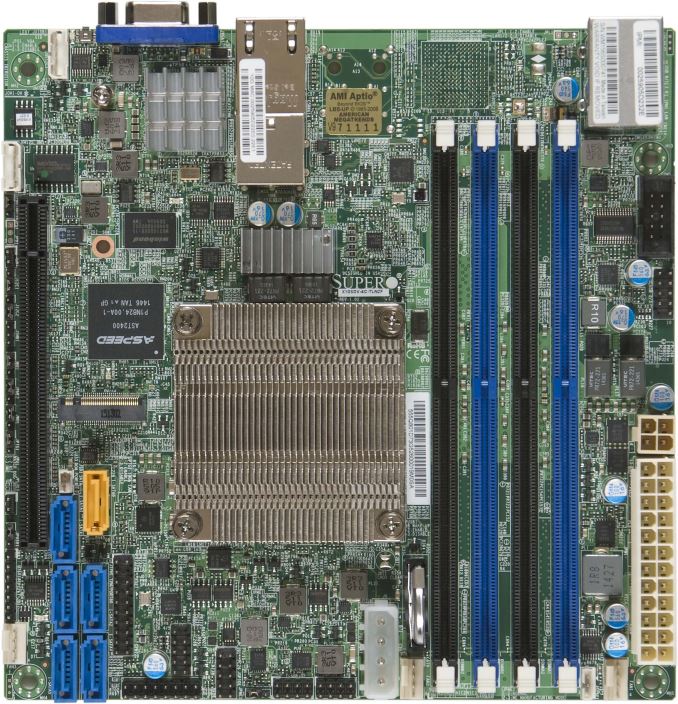

Supermicro's Xeon D Solution: X10SDV-12C-TLN4F

The top tier server vendors seem to offer very few Xeon D based servers. For example, there is still no Xeon D server among the HP Moonshot cartridges as far as we know. Supermicro, on the other hand, has an extensive line of Xeon D motherboards and servers, and is basically the vendor that has made the Xeon D accessible to those of us that do not work at Facebook or Google.

The first board we were able to test was the mini-ITX X10SDV-12C-TLN4F. It is a reasonably priced ($1200) model, in the same range as what the Gigabyte board and Cavium CN8880 -2.0 will cost (+/- $1100).

The Xeon D makes six SATA3 ports and two 10GBase-T ports available on this board. An additional i350-AM2 chip offers two gigabit Ethernet ports. The board has one PCIe 3.0 x16 slot for further expansion. We have been testing this board 24/7 for almost three months now. We have tried out the different Ethernet ports and different DDR4 DIMMs: the board has been trouble-free.

The one disadvantage of all Supermicro boards remains their Java-based remote management system. It is a hassle to get it working securely (Java security is a user-unfriendly mess), and it lacks some time-saving features, such as booting directly into the BIOS configuration system. Furthermore, video is sometimes not available: for example, we got a black screen when a faulty network configuration caused the Linux boot procedure to wait for a long time. Remote management solutions from HP and Intel offer better remote consoles.

82 Comments

vivs26 - Wednesday, June 15, 2016 - link

Not necessarily - (read Amdahl's law of diminishing returns). The performance actually depends on the workload. Having a million cores guarantees nothing in terms of performance unless the workload is parallelizable, which in the real world is the case less often than we think. I'm curious to see how a Xeon merged with Altera programmable fabric performs compared to ARM on a server.

maxxbot - Wednesday, June 22, 2016 - link

Technically true, but every generation that millstone gets a little smaller: the die area and power needed to translate x86 into uops isn't huge and reduces every generation.

jardows2 - Wednesday, June 15, 2016 - link

Interesting. Faster in a few workloads where heavy use of multi-threading is important, but significantly slower in more single-threaded workloads. For server use, you don't always want parallelized tasks. The results are pretty much across the board for all the processors tested: if the ThunderX was slower, it was slower than all the Intel chips. If it was faster, it was faster than all but the highest-end Intel chips. With the price only being slightly lower than the cheapest Intel chip being sold, I don't think this is going to be a Xeon competitor at all, but it will take a few niche applications where it can do better. With no significant energy savings, we should be looking forward to the ThunderX2 to see if it will turn this into a better alternative.

ddriver - Wednesday, June 15, 2016 - link

There is hardly a server workload where you don't get better throughput by throwing more cores and servers at it. Servers are NOT about parallelized tasks, but about concurrent tasks. That's why while desktops are still stuck at 8 cores, server chips come with 20 and more... Server workloads are usually very simple, it is just that there are a lot of them. They are so simple and take so little time that it literally makes no sense to parallelize them.

jardows2 - Wednesday, June 15, 2016 - link

In the scenario you described, the single-thread performance takes on even more importance, thus highlighting the advantage the Xeons currently have in most server configurations.

niva - Wednesday, June 15, 2016 - link

Not if the Xeon doesn't have enough cores to actually process 40+ single-threaded tasks concurrently.

hechacker1 - Wednesday, June 15, 2016 - link

But kernels and VMware know how to schedule multiple threads on 1 core if it's not being fully utilized. Single threaded IPC can make up for not having as many cores. See the iPhone SoCs for another example.

ddriver - Wednesday, June 15, 2016 - link

Not if you have thousands of concurrent workloads and only like 8 cores. As fast as each core might be, the overhead from workload context switching will eat it up.

willis936 - Thursday, June 16, 2016 - link

Yeah, if each task is not significantly longer than a context switch. Context switches are very fast, especially with processors with many sets of SMT registers per core.

ddriver - Thursday, June 16, 2016 - link

If what you suggest is correct, then Intel would not be investing chip TDP in more cores but in higher clocks and better single threaded performance. Clearly this is not the case, as they are pushing 20 cores at the fairly modest 2.4 GHz.
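
The two arguments in this thread (Amdahl's law for a single parallelized job, and context-switch overhead for many small independent tasks) can be made concrete with a rough sketch. This is only a back-of-the-envelope model, not a benchmark: the function names, the core counts, and the microsecond figures below are all hypothetical values chosen for illustration, and the throughput model assumes every task pays exactly one context switch and that cores never sit idle.

```python
def amdahl_speedup(parallel_fraction, cores):
    """Amdahl's law: speedup of ONE job when only part of it can run in parallel."""
    serial_fraction = 1.0 - parallel_fraction
    return 1.0 / (serial_fraction + parallel_fraction / cores)


def tasks_per_second(cores, task_us, switch_us):
    """Upper bound on independent short tasks finished per second.

    Simplifying assumptions (not from the article): each task costs
    task_us microseconds of work plus one switch_us context switch,
    and all cores stay busy.
    """
    return cores * 1_000_000 / (task_us + switch_us)


# A job that is 90% parallelizable never gets past 10x, however many cores.
print(amdahl_speedup(0.90, 8))
print(amdahl_speedup(0.90, 48))

# Hypothetical comparison: few fast cores vs. many slower cores
# handling many small concurrent requests.
print(tasks_per_second(cores=8, task_us=50, switch_us=5))
print(tasks_per_second(cores=48, task_us=150, switch_us=5))
```

Under these made-up numbers the many-core configuration finishes more requests per second even though each core is three times slower, while the context-switch cost only matters when it is comparable to the task length. Changing the assumed task and switch times shifts the balance either way, which is essentially the disagreement above.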