Free Cooling: the Server Side of the Story

by Johan De Gelas on February 11, 2014 7:00 AM EST

Posted in: Cloud Computing, IT Computing, Intel, Xeon, Ivy Bridge EP, Supermicro

The Server CPU Temperatures

Given Intel's dominance in the server market, we will focus on the Intel Xeons. The "normal", non-low-power Xeons have a specified Tcase of 75°C (167°F, 95 W) to 88°C (190°F, 130 W). Tcase is the temperature measured with a thermocouple embedded in the center of the heat spreader, so there is a lot of temperature headroom. The low-power Xeons (70 W TDP or less) have less headroom, as their Tcase is a fairly low 65°C (149°F). But since those Xeons produce much less heat, it should be easier to keep them at lower temperatures. In all cases, there is quite a bit of headroom.
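To make the Tcase figures above easier to compare, here is a minimal Python sketch that converts them to Fahrenheit and computes the headroom for a given reading. The dictionary labels and the 60°C sample reading are assumptions made up for this illustration; only the three Tcase limits come from the text.

```python
# Tcase limits quoted in the text, in °C.
# The keys are labels invented for this sketch, not Intel product names.
TCASE_LIMITS_C = {
    "low_power_70w": 65.0,
    "standard_95w": 75.0,
    "standard_130w": 88.0,
}


def c_to_f(celsius):
    """Convert degrees Celsius to degrees Fahrenheit."""
    return celsius * 9.0 / 5.0 + 32.0


def tcase_headroom(measured_c, limit_c):
    """Degrees Celsius left before the case temperature reaches its limit."""
    return limit_c - measured_c


# Hypothetical case-temperature reading, chosen only for illustration.
SAMPLE_READING_C = 60.0

for name, limit in TCASE_LIMITS_C.items():
    headroom = tcase_headroom(SAMPLE_READING_C, limit)
    print(f"{name}: limit {limit:.0f} °C ({c_to_f(limit):.0f} °F), "
          f"headroom at {SAMPLE_READING_C:.0f} °C: {headroom:.0f} °C")
```

With the same reading, the low-power part has the least margin, which is the point the paragraph makes about its lower Tcase.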

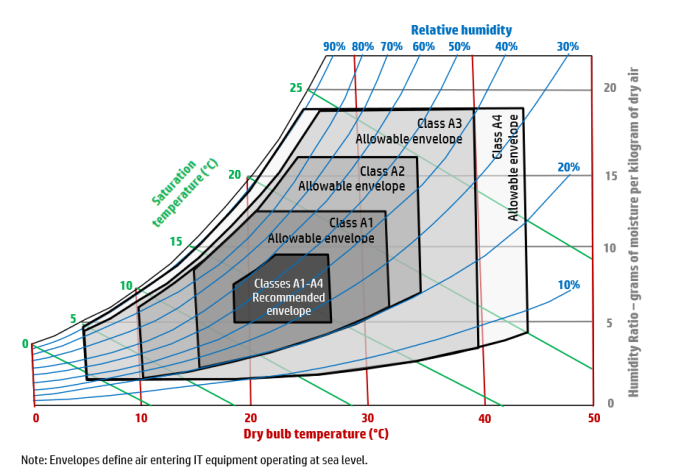

But there is more than the CPU of course; the complete server must be up to running at higher temperatures. That is where the ASHRAE specifications come in. The American Society of Heating, Refrigerating and Air-Conditioning Engineers publishes guidelines for the temperature and humidity operating ranges of IT equipment. If vendors comply with these guidelines, administrators can be sure that they will not void warranties when running servers at higher temperatures. Most vendors, including HP and Dell, now allow the inlet temperature of a server to be as high as 35°C, the so-called A2 class.

ASHRAE specifications per class

The specified temperature is the so-called "dry bulb" temperature, which is the ordinary air temperature as measured by a dry thermometer. Humidity should be approximately between 20 and 80%. Specially equipped servers (Class A4) can go as high as 45°C with humidity between 10 and 90%.
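As a rough illustration, the limits quoted above can be turned into a simple check of whether an inlet condition falls inside a class. This is a simplified sketch that uses only the maximum inlet temperature and the approximate humidity range from the text; the real guidelines define more than these figures, and the `Envelope` type and `within_envelope` function are names invented for this example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Envelope:
    """Simplified operating envelope: only the limits quoted in the text."""
    max_inlet_c: float   # maximum dry-bulb inlet temperature, °C
    min_rh_pct: float    # approximate lower humidity bound, %
    max_rh_pct: float    # approximate upper humidity bound, %


# Values taken from the article; everything else about the classes is omitted.
ENVELOPES = {
    "A2": Envelope(max_inlet_c=35.0, min_rh_pct=20.0, max_rh_pct=80.0),
    "A4": Envelope(max_inlet_c=45.0, min_rh_pct=10.0, max_rh_pct=90.0),
}


def within_envelope(ashrae_class, inlet_c, rh_pct):
    """Return True if the inlet condition is inside the simplified envelope."""
    env = ENVELOPES[ashrae_class]
    return (inlet_c <= env.max_inlet_c
            and env.min_rh_pct <= rh_pct <= env.max_rh_pct)


# A 40 °C inlet at 50% humidity is too warm for A2 but acceptable for A4.
print(within_envelope("A2", 40.0, 50.0))
print(within_envelope("A4", 40.0, 50.0))
```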

It is hard to overestimate the impact of servers being capable of breathing hotter air. In modern data centers this ability could be the difference between being able to depend on free cooling only, or having to continue to invest in very expensive chilling installations. Being able to use free cooling comes with both OPEX and CAPEX savings. In traditional data centers, servers that tolerate hotter air allow administrators to raise the room temperature and decrease the amount of energy the cooling requires.

And last but not least, it increases the time before a complete shutdown is necessary when the cooling installation fails. The more headroom you get, the easier it is to fix the cooling problems before critical temperatures are reached and the reputation of the hosting provider is tarnished. In a modern data center, it is almost the only way to run most of the year with free cooling.

Raising the inlet temperature is not easy when you are providing hosting for many customers (i.e. a "multi-tenant data center"). Most customers resist warmer data centers, with good reason in some cases. We watched a 1U server use 80 W to power its fans out of a total of less than 200 W! In that case, the savings of the data center facility are paid for by the energy losses of the IT equipment. It's great for the data center's PUE, but not very compelling for customers.
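The arithmetic behind that last point can be sketched in a few lines of Python. PUE is total facility power divided by IT equipment power, and server fans count as IT power, so hungrier fans inflate the denominator. Only the 80 W and 200 W figures come from the text (the total was actually a bit under 200 W, so the real share is slightly higher); the "cool room" and "warm room" scenarios are hypothetical numbers invented for illustration, not measurements.

```python
def fan_share(fan_w, server_w):
    """Fraction of a server's power draw that goes to its fans."""
    return fan_w / server_w


def pue(it_w, overhead_w):
    """PUE = total facility power / IT equipment power."""
    return (it_w + overhead_w) / it_w


# Figures from the text: 80 W of fan power in a server drawing about 200 W.
print(f"fan share: {fan_share(80, 200):.0%}")

# Hypothetical per-server scenarios, invented for illustration only.
# In both, the server spends 120 W on everything except its fans.
cool = {"it_w": 150, "overhead_w": 75}   # 30 W fans, more facility cooling
warm = {"it_w": 200, "overhead_w": 30}   # 80 W fans, less facility cooling

for name, s in (("cool room", cool), ("warm room", warm)):
    total = s["it_w"] + s["overhead_w"]
    print(f"{name}: PUE {pue(**s):.2f}, total {total} W")
```

Under these assumed numbers the warm room reports the better PUE while drawing slightly more power in total, and the customer's metered IT power rises from 150 W to 200 W.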

But how about the latest servers that support much higher inlet temperatures? Supermicro claims their servers can work with inlet temperatures of up to 47°C. It's time to do what AnandTech does best and give you facts and figures so you can decide if higher temperatures are viable.

48 Comments


iTzSnypah - Tuesday, February 11, 2014 - link

I wonder why nobody has tried geothermal liquid cooling. You could do it 2 ways. Either with a geothermal heat pump setup or cut out the middle man and just use the earth like you would a radiator in a liquid cooling loop. The only problem would be how many wells you would have to drill to cool up to 100MW (I'm thinking 20+ at a depth of at least 50ft).

ShieTar - Tuesday, February 11, 2014 - link

It's kind of easier to just use a nearby river than to dig for and pump up ground water. That's what power stations and big chemical factories do. For everybody else, air-cooling is just easier and less expensive.

iTzSnypah - Tuesday, February 11, 2014 - link

You wouldn't be drilling for water. You drill a well so you can put pipe in it, fill it back up and then pump water through the pipes using the earth's constant temp (~20°C) to cool your liquid which is warmer (>~30°C).

looncraz - Tuesday, February 11, 2014 - link

I experimented with this (mathematically) and found that heat soak is a serious, variable concern. If the new moisture is coming from the surface, this is not as much of an issue, but if it isn't, you could have a problem in short order. Then there are the corrosion and maintenance issues...

The net result is that it is cheaper and easier to just install a few ten-thousand-gallon coolant holding tanks and keep them cool (but above ambient) and to cool the air in the server room(s). These tanks can be put inside a hill or in the ground for extra insulation, and a surface radiator system could allow using cold outside air to save energy.

superflex - Wednesday, February 12, 2014 - link

You obviously don't have a clue about drilling costs.

For a 2,000 s.f. home, a geothermal driller needs between 200-300 lineal feet of well bore to cool the house. In unconsolidated material, drilling costs per foot range from $15-$30/foot, depending on the rig. For drilling in rock, up the cost to $45/foot.

For something that uses 80,000x more power than a typical home, what do you think the drilling costs would be?

Go back to heating up Hot Pockets.

chadwilson - Wednesday, February 19, 2014 - link

That last statement was totally unnecessary. Your perfectly valid point was tarnished by your awful attitude.

nathanddrews - Tuesday, February 11, 2014 - link

Small scale, but really cool. Use PV to power your pumps... http://www.overclockers.com/forums/showthread.php?...

Sivar - Tuesday, February 11, 2014 - link

Geothermal heat pumps are only moderately more efficient than standard air conditioning and require an enormous amount of area. 20 holes at a depth of 50ft would handle the cooling requirements for a large residential home, but wouldn't even approach the requirements for a data center.

One related possibility is to drill to a nearby aquifer and draw cool water, run it through a heat exchanger, then exhaust warm water into the same aquifer. Unfortunately, water overuse has drained aquifers such that even the pumping costs would be substantial, and the aquifers will eventually be drained to the point that vacuum-based pumps can no longer draw water.

rkcth - Tuesday, February 11, 2014 - link

They are a lot more efficient at heating, but only mildly more efficient at cooling. They also are really storing heat in the ground in the summer and taking it back in the winter, so if you only store heat you can actually have a problem long-term. You're essentially using the ground as a long-term heat storage device since the ground is between 50-60°F depending on your area of the country, but use of the geothermal changes that temperature. An air source makes much more sense since you share the air with everyone else and it essentially just blows away.

biohazard918 - Tuesday, February 11, 2014 - link

Wells don't use vacuum-based pumps; most aquifers are much too deep for that. Instead, you stick the pump in the bottom of the well and push the water to the surface.