The AMD Radeon R9 Fury X Review: Aiming For the Top

by Ryan Smith on July 2, 2015 11:15 AM EST

Almost 7 years ago to this day, AMD formally announced their “small die strategy.” Embarked upon in the aftermath of the company’s struggles with the Radeon HD 2900 XT, AMD opted against continuing to try to beat NVIDIA at their own game. Rather than chase NVIDIA to absurd die sizes and the risks that come with them, the company would focus on smaller GPUs for the larger sub-$300 market. Meanwhile, to compete in the high-end markets, AMD would instead turn to multi-GPU technology – CrossFire – to offer even better performance at a total cost competitive with NVIDIA’s flagship cards.

AMD’s early efforts were highly successful; though they couldn’t take the crown from NVIDIA, products like the Radeon HD 4870 and Radeon HD 5870 were massive spoilers, offering a great deal of NVIDIA’s flagship performance with smaller GPUs, manufactured at a lower cost, and drawing less power. Officially the small die strategy was put to rest earlier this decade; informally, however, it has continued to guide AMD GPU designs for quite some time. At 438mm², Hawaii was AMD’s largest die as of 2013, still more than 100mm² smaller than NVIDIA’s flagship GK110.

AMD's 2013 Flagship: Radeon R9 290X, Powered By Hawaii

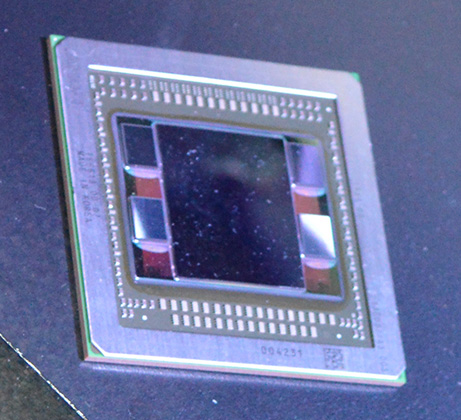

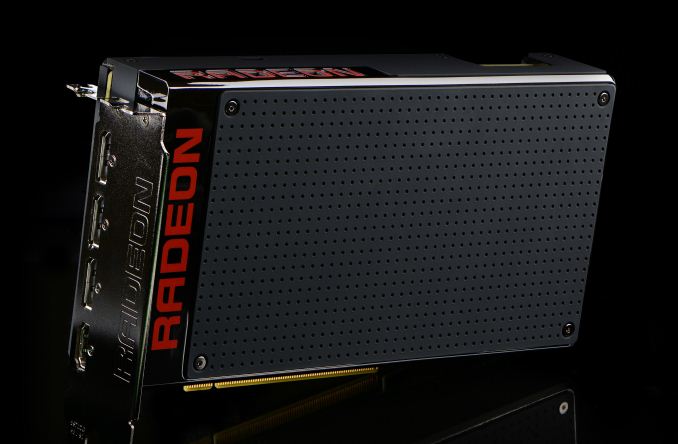

Catching up to the present, this month marks an important occasion for AMD with the launch of their new flagship GPU, Fiji, and the flagship video card based on it, the Radeon R9 Fury X. For AMD the launch of Fiji is not just another high-end GPU launch (their 3rd on the 28nm process), but it marks a significant shift for the company. Fiji is first and foremost a performance play, but it’s also new memory technology, new power optimization technologies, and more. In short it may be the last of the 28nm GPUs, but boy if it isn’t among the most important.

With the recent launch of the Fiji GPU I bring up the small die strategy not just because Fiji is anything but small – AMD has gone right to the reticle limit – but because it highlights how the GPU market has changed in the last seven years and how AMD has needed to respond. Since 2008 NVIDIA has continued to push big dies, but they’ve gotten smarter about it as well, producing increasingly efficient GPUs that have made it harder for a scrappy AMD to undercut NVIDIA. At the same time alternate frame rendering, the cornerstone of CrossFire and SLI, has become increasingly problematic as rendering techniques get less and less AFR-friendly, making dual GPU cards less viable than they once were. And finally, on the business side of matters, AMD’s market share of discrete GPUs is lower than it has been in over a decade, with AMD’s combined GPU and APU sales now estimated to be lower than NVIDIA’s GPU sales alone.

Which is not to say I’m looking to paint a poor picture of the company – AMD is nothing if not the perennial underdog who constantly manages to surprise us with what they can do with less – but this context is important in understanding why AMD is where they stand today, and why Fiji is in many ways such a monumental GPU for the company. The small die strategy is truly dead, and now AMD is gunning for NVIDIA’s flagship with the biggest, gamiest GPU they could possibly make. The goal? To recapture the performance crown that has been in NVIDIA’s hands for far too long, and to offer a flagship card of their own that doesn’t play second-fiddle to NVIDIA’s.

To get there AMD needs to face down several challenges. There is no getting around the fact that NVIDIA’s Maxwell 2 GPUs are very well done, very performant, and very efficient, and that between GM204 and GM200 AMD has their work cut out for them. Performance, power consumption, form factors; these all matter, and these are all issues that AMD is facing head-on with Fiji and the R9 Fury X.

At the same time, however, the playing field has never been more equal. We’re now in the 4th year of TSMC’s 28nm process and have a good chunk of another year left to go. AMD and NVIDIA have had an unprecedented amount of time to tweak their wares around what is now a very mature process, and that means that any kind of advantages for being a first-mover or being more aggressive are gone. As the 28nm process’s reign at the top nears its end, NVIDIA and AMD now have to rely on their engineers and their architectures to see who can build the best GPU against the very limits of the 28nm process.

Overall, with GPU manufacturing technology having stagnated on the 28nm node, it’s very hard to talk about the GPU situation without talking about the manufacturing situation. For as much as the market situation has forced an evolution in AMD’s business practices, there is no escaping the fact that the current situation on the manufacturing process side has had an incredible, unprecedented effect on the evolution of discrete GPUs from a technology and architectural standpoint. So for AMD Fiji not only represents a shift towards large GPUs that can compete with NVIDIA’s best, but it represents the extensive efforts AMD has gone through to continue improving performance in the face of manufacturing limitations.

And with that we dive into today’s review of the Radeon R9 Fury X. Launching this month is AMD’s new flagship card, backed by the full force of the Fiji GPU.

AMD GPU Specification Comparison

| | AMD Radeon R9 Fury X | AMD Radeon R9 Fury | AMD Radeon R9 290X | AMD Radeon R9 290 |
|---|---|---|---|---|
| Stream Processors | 4096 | (Fewer) | 2816 | 2560 |
| Texture Units | 256 | (How much) | 176 | 160 |
| ROPs | 64 | (Depends) | 64 | 64 |
| Boost Clock | 1050MHz | (On Yields) | 1000MHz | 947MHz |
| Memory Clock | 1Gbps HBM | (Memory Too) | 5Gbps GDDR5 | 5Gbps GDDR5 |
| Memory Bus Width | 4096-bit | 4096-bit | 512-bit | 512-bit |
| VRAM | 4GB | 4GB | 4GB | 4GB |
| FP64 | 1/16 | 1/16 | 1/8 | 1/8 |
| TrueAudio | Y | Y | Y | Y |
| Transistor Count | 8.9B | 8.9B | 6.2B | 6.2B |
| Typical Board Power | 275W | (High) | 250W | 250W |
| Manufacturing Process | TSMC 28nm | TSMC 28nm | TSMC 28nm | TSMC 28nm |
| Architecture | GCN 1.2 | GCN 1.2 | GCN 1.1 | GCN 1.1 |
| GPU | Fiji | Fiji | Hawaii | Hawaii |
| Launch Date | 06/24/15 | 07/14/15 | 10/24/13 | 11/05/13 |
| Launch Price | $649 | $549 | $549 | $399 |

With 4096 SPs coupled with the first implementation of High Bandwidth Memory, the R9 Fury X aims for the top. Over the coming pages we’ll get into a deeper discussion on the architectural and other features found in the card, but the important point to take away right now is that it packs a lot of shaders, even more memory bandwidth, and is meant to offer AMD’s best performance yet. R9 Fury X will eventually be joined by 3 other Fiji-based parts in the coming months, but this month it’s all about AMD’s flagship card.
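To put "a lot of shaders, even more memory bandwidth" into rough numbers, the short sketch below derives peak memory bandwidth and peak single-precision throughput from the figures in the specification table. It is a back-of-the-envelope illustration only: the function names are hypothetical, and the FP32 figure assumes each stream processor retires one fused multiply-add (2 FLOPs) per clock at the listed boost clock, which real workloads will not sustain.

```python
# Figures copied from the specification table; Fury (non-X) is omitted
# because its shader count and clocks are not given there.
CARDS = {
    "R9 Fury X": {"sps": 4096, "boost_mhz": 1050, "gbps_per_pin": 1.0, "bus_bits": 4096},
    "R9 290X":   {"sps": 2816, "boost_mhz": 1000, "gbps_per_pin": 5.0, "bus_bits": 512},
    "R9 290":    {"sps": 2560, "boost_mhz": 947,  "gbps_per_pin": 5.0, "bus_bits": 512},
}


def memory_bandwidth_gbs(gbps_per_pin: float, bus_bits: int) -> float:
    """Peak memory bandwidth in GB/s: per-pin data rate times bus width, in bytes."""
    return gbps_per_pin * bus_bits / 8


def peak_fp32_tflops(sps: int, boost_mhz: float) -> float:
    """Peak FP32 TFLOPS, ASSUMING 2 FLOPs (one FMA) per stream processor per clock."""
    return sps * 2 * boost_mhz * 1e6 / 1e12


if __name__ == "__main__":
    for name, c in CARDS.items():
        bw = memory_bandwidth_gbs(c["gbps_per_pin"], c["bus_bits"])
        tf = peak_fp32_tflops(c["sps"], c["boost_mhz"])
        print(f"{name}: {bw:.0f} GB/s, {tf:.2f} TFLOPS FP32 (theoretical peak)")
```

Under these assumptions the very wide but slow HBM interface (4096 bits at 1Gbps per pin) works out to 512 GB/s, against 320 GB/s for the 512-bit, 5Gbps GDDR5 bus on the R9 290X.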

The R9 Fury X is launching at $649, which happens to be the same price as the card’s primary competition, the GeForce GTX 980 Ti. Launched at the end of May, the GTX 980 Ti is essentially a preemptive attack on the R9 Fury X from NVIDIA, offering performance close enough to NVIDIA’s GTX Titan X flagship that the difference is arguably immaterial. For AMD this means that while beating GTX Titan X would be nice, they really only need a win against the GTX 980 Ti, and as we’ll see the Fury X will make a good run at it, making this the closest AMD has come to an NVIDIA flagship card in quite some time.

Finally, from a market perspective, AMD will be going after a few different categories with the R9 Fury X. As competition for the GTX 980 Ti, AMD is focusing on 4K resolution gaming, based on a combination of the fact that 4K monitors are becoming increasingly affordable, 4K FreeSync monitors are finally available, and relative to NVIDIA’s wares, AMD fares the best at 4K. Expect to see AMD also significantly play up the VR possibilities of the R9 Fury X, though the major VR headset, the Oculus Rift, won’t ship until Q1 of 2016. Finally, it has now been over three years since the launch of the original Radeon HD 7970, so for buyers looking for an upgrade from AMD’s first 28nm card, Fury X is in a good position to offer the kind of generational performance improvements that typically justify an upgrade.

458 Comments


chizow - Friday, July 3, 2015 - link

Pretty much, AMD supporters/fans/apologists love to parrot the meme that Intel hasn't innovated since original i7 or whatever, and while development there has certainly slowed, we have a number of 18 core e5-2699v3 servers in my data center at work, Broadwell Iris Pro iGPs that handily beat AMD APU and approach low-end dGPU perf, and ultrabooks and tablets that run on fanless 5W Core M CPUs. Oh, and I've also managed to find meaningful desktop upgrades every few years for no more than $300 since Core 2 put me back in Intel's camp for the first time in nearly a decade.

looncraz - Friday, July 3, 2015 - link

None of what you stated is innovation, merely minor evolution. The core design is the same, gaining only ~5% or so IPC per generation, same basic layouts, same basic tech. Are you sure you know what "innovation" means?

Bulldozer modules were an innovative design. A failure, but still very innovative. Pentium Pro and Pentium 4 were both innovative designs, both seeking performance in very different ways.

Multi-core CPUs were innovative (AMD), HBM is innovative (AMD+Hynix), multi-GPU was innovative (3dfx), SMT was innovative (IBM, Alpha), CPU+GPU was innovative (Cyrix, IIRC)... you get the idea.

Doing the exact same thing, more or less the exact same way, but slightly better, is not innovation.

chizow - Sunday, July 5, 2015 - link

Huh? So putting Core level performance in a passive design that is as thin as a legal pad and has 10 hours of battery life isn't innovation?

Increasing iGPU performance to the point it not only provides top-end CPU performance, and close to dGPU performance, while convincingly beating AMD's entire reason for buying ATI, their Fusion APUs, isn't innovation?

And how about the data center where Intel's *18* core CPUs are using the same TDP and sockets, in the same U rack units as their 4 and 6 core equivalents of just a few years ago?

Intel is still innovating in different ways, that may not directly impact the desktop CPU market but it would be extremely ignorant to claim they aren't addressing their core growth and risk areas with new and innovative products.

I've bought more Intel products in recent years vs. prior strictly because of these new innovations that are allowing me to have high performance computing in different form factors and use cases, beyond being tethered to my desktop PC.

looncraz - Friday, July 3, 2015 - link

Show me Intel CPU innovations since after the Pentium 4.

Mind you, innovations can be failures, they can be great successes, or they can be ho-hum.

P6->Core->Nehalem->Sandy Bridge->Haswell->Skylake

The only changes are evolutionary or as a result of process changes (which I don't consider CPU innovations).

This is not to say that they aren't fantastic products - I'm rocking an i7-2600k for a reason - they just aren't innovative products. Indeed, nVidia's Maxwell is a wonderfully designed and engineered GPU, and products based on it are of the highest quality and performance. That doesn't make them innovative in any way. Nothing technically wrong with that, but I wonder how long before someone else came up with a suitable RAM just for GPUs if AMD hadn't done it?

chizow - Sunday, July 5, 2015 - link

I've listed them above and despite slowing the pace of improvements on the desktop CPU side you are still looking at 30-45% improvement clock for clock between Nehalem and Haswell, along with pretty massive improvements in stock clock speed. Not bad given they've had literally zero pressure from AMD. If anything, Intel dominating in a virtual monopoly has afforded me much cheaper and consistent CPU upgrades, all of which provided significant improvements over the previous platform:

E6600 $284

Q6600 $299

i7 920 $199!

i7 4770K $229

i7 5820K $299

All cheaper than the $450 AMD wanted for their ENTRY level Athlon 64 when they finally got the lead over Intel, which made it an easy choice to go to Intel for the first time in nearly a decade after AMD got Conroe'd in 2006.

silverblue - Monday, July 6, 2015 - link

I could swear that you've posted this before.

I think the drop in prices was more of an attempt to strangle AMD than anything else. Intel can afford it, after all.

chizow - Monday, July 6, 2015 - link

Of course I've posted it elsewhere because it bears repeating, the nonsensical meme AMD fanboys love to parrot about AMD being necessary for low prices and strong competition is a farce. I've enjoyed unparalleled stability at a similar or higher level of relative performance in the years that AMD has become UNCOMPETITIVE in the CPU market. There is no reason to expect otherwise in the dGPU market.

zoglike@yahoo.com - Monday, July 6, 2015 - link

Really? Intel hasn't innovated? I really hope you are trolling because if you believe that I fear for you.

chizow - Thursday, July 2, 2015 - link

Let's not also discount the fact that's just stock comparisons, once you overclock the cards as many are interested in doing in this $650 bracket, especially with AMD's claims that Fury X is an "Overclocker's Dream", we quickly see the 980Ti cannot be touched by Fury X, water cooler or not.

Fury X wouldn't have been the failure it is today if not for AMD setting unrealistic and ultimately, unattained expectations. 390X WCE at $550-$600 and it's a solid alternative. $650 new "Premium" Brand that doesn't OC at all, has only 4GB, has pump whine issues and is slower than Nvidia's same priced $650 980Ti that launched 3 weeks before it just doesn't get the job done after AMD hyped it from the top brass down.

andychow - Thursday, July 2, 2015 - link

Yeah, "Overclocker's dream", only overclocks by 75 MHz. Just by that statement, AMD has totally lost me.