The AMD Radeon R9 Fury X Review: Aiming For the Top

by Ryan Smith on July 2, 2015 11:15 AM EST

Almost 7 years ago to this day, AMD formally announced their “small die strategy.” Embarked upon in the aftermath of the company’s struggles with the Radeon HD 2900 XT, AMD opted against continuing to try to beat NVIDIA at their own game. Rather than chase NVIDIA to absurd die sizes and the risks that come with them, the company would focus on smaller GPUs for the larger sub-$300 market. Meanwhile to compete in the high-end markets, AMD would instead turn to multi-GPU technology – CrossFire – to offer even better performance at a total cost competitive with NVIDIA’s flagship cards.

AMD’s early efforts were highly successful; though they couldn’t take the crown from NVIDIA, products like the Radeon HD 4870 and Radeon HD 5870 were massive spoilers, offering a great deal of NVIDIA’s flagship performance with smaller GPUs, manufactured at a lower cost, and drawing less power. Officially the small die strategy was put to rest earlier this decade; informally, however, it has continued to guide AMD GPU designs for quite some time. At 438mm², Hawaii was AMD’s largest die as of 2013, still more than 100mm² smaller than NVIDIA’s flagship GK110.

AMD's 2013 Flagship: Radeon R9 290X, Powered By Hawaii

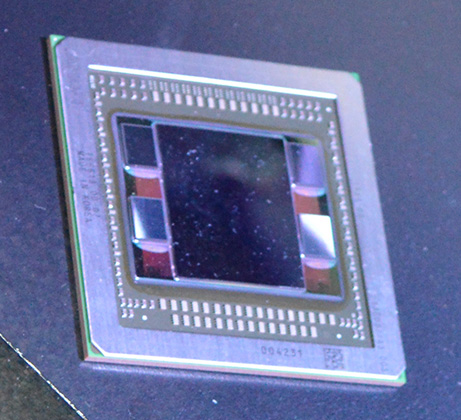

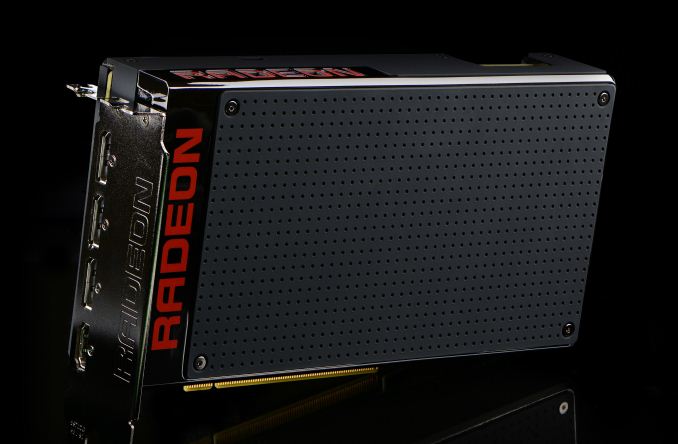

Catching up to the present, this month marks an important occasion for AMD with the launch of their new flagship GPU, Fiji, and the flagship video card based on it, the Radeon R9 Fury X. For AMD the launch of Fiji is not just another high-end GPU launch (their 3rd on the 28nm process), but it marks a significant shift for the company. Fiji is first and foremost a performance play, but it’s also new memory technology, new power optimization technologies, and more. In short it may be the last of the 28nm GPUs, but boy if it isn’t among the most important.

With the recent launch of the Fiji GPU I bring up the small die strategy not just because Fiji is anything but small – AMD has gone right to the reticle limit – but because it highlights how the GPU market has changed in the last seven years and how AMD has needed to respond. Since 2008 NVIDIA has continued to push big dies, but they’ve gotten smarter about it as well, producing increasingly efficient GPUs that have made it harder for a scrappy AMD to undercut NVIDIA. At the same time alternate frame rendering, the cornerstone of CrossFire and SLI, has become increasingly problematic as rendering techniques get less and less AFR-friendly, making dual GPU cards less viable than they once were. And finally, on the business side of matters, AMD’s market share of discrete GPUs is lower than it has been in over a decade, with AMD’s combined GPU and APU sales now estimated to be below NVIDIA’s GPU sales alone.

Which is not to say I’m looking to paint a poor picture of the company – AMD is nothing if not the perennial underdog who constantly manages to surprise us with what they can do with less – but this context is important in understanding why AMD is where they stand today, and why Fiji is in many ways such a monumental GPU for the company. The small die strategy is truly dead, and now AMD is gunning for NVIDIA’s flagship with the biggest, gamiest GPU they could possibly make. The goal? To recapture the performance crown that has been in NVIDIA’s hands for far too long, and to offer a flagship card of their own that doesn’t play second-fiddle to NVIDIA’s.

To get there AMD needs to face down several challenges. There is no getting around the fact that NVIDIA’s Maxwell 2 GPUs are very well done, very performant, and very efficient, and that between GM204 and GM200 AMD has their work cut out for them. Performance, power consumption, form factors; these all matter, and these are all issues that AMD is facing head-on with Fiji and the R9 Fury X.

At the same time, however, the playing field has never been more equal. We’re now in the 4th year of TSMC’s 28nm process and have a good chunk of another year left to go. AMD and NVIDIA have had an unprecedented amount of time to tweak their wares around what is now a very mature process, and that means that any kind of advantages for being a first-mover or being more aggressive are gone. As the 28nm process’s reign at the top nears its end, NVIDIA and AMD now have to rely on their engineers and their architectures to see who can build the best GPU against the very limits of the 28nm process.

Overall, with GPU manufacturing technology having stagnated on the 28nm node, it’s very hard to talk about the GPU situation without talking about the manufacturing situation. For as much as the market situation has forced an evolution in AMD’s business practices, there is no escaping the fact that the current situation on the manufacturing process side has had an incredible, unprecedented effect on the evolution of discrete GPUs from a technology and architectural standpoint. So for AMD Fiji not only represents a shift towards large GPUs that can compete with NVIDIA’s best, but it represents the extensive efforts AMD has gone through to continue improving performance in the face of manufacturing limitations.

And with that we dive into today’s review of the Radeon R9 Fury X. Launching this month is AMD’s new flagship card, backed by the full force of the Fiji GPU.

AMD GPU Specification Comparison

| | AMD Radeon R9 Fury X | AMD Radeon R9 Fury | AMD Radeon R9 290X | AMD Radeon R9 290 |
|---|---|---|---|---|
| Stream Processors | 4096 | (Fewer) | 2816 | 2560 |
| Texture Units | 256 | (How much) | 176 | 160 |
| ROPs | 64 | (Depends) | 64 | 64 |
| Boost Clock | 1050MHz | (On Yields) | 1000MHz | 947MHz |
| Memory Clock | 1Gbps HBM | (Memory Too) | 5Gbps GDDR5 | 5Gbps GDDR5 |
| Memory Bus Width | 4096-bit | 4096-bit | 512-bit | 512-bit |
| VRAM | 4GB | 4GB | 4GB | 4GB |
| FP64 | 1/16 | 1/16 | 1/8 | 1/8 |
| TrueAudio | Y | Y | Y | Y |
| Transistor Count | 8.9B | 8.9B | 6.2B | 6.2B |
| Typical Board Power | 275W | (High) | 250W | 250W |
| Manufacturing Process | TSMC 28nm | TSMC 28nm | TSMC 28nm | TSMC 28nm |
| Architecture | GCN 1.2 | GCN 1.2 | GCN 1.1 | GCN 1.1 |
| GPU | Fiji | Fiji | Hawaii | Hawaii |
| Launch Date | 06/24/15 | 07/14/15 | 10/24/13 | 11/05/13 |
| Launch Price | $649 | $549 | $549 | $399 |

With 4096 SPs, coupled with the first implementation of High Bandwidth Memory, the R9 Fury X aims for the top. Over the coming pages we’ll get into a deeper discussion of the architectural and other features found in the card, but the important point to take away right now is that it packs a lot of shaders, even more memory bandwidth, and is meant to offer AMD’s best performance yet. R9 Fury X will eventually be joined by 3 other Fiji-based parts in the coming months, but this month it’s all about AMD’s flagship card.
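To put the "even more memory bandwidth" point in numbers, the figures in the specification table can be turned into peak theoretical bandwidth with some quick arithmetic. The sketch below is illustrative only: it assumes the listed memory clock is the per-pin data rate in Gbps, and the helper name `memory_bandwidth_gbs` is ours rather than anything from AMD. Real-world throughput will be lower than these theoretical peaks.

```python
def memory_bandwidth_gbs(data_rate_gbps: float, bus_width_bits: int) -> float:
    """Peak theoretical bandwidth in GB/s.

    Assumes data_rate_gbps is the per-pin data rate, so the total is
    rate (Gb/s per pin) x bus width (pins) / 8 (bits per byte).
    """
    return data_rate_gbps * bus_width_bits / 8


# (per-pin data rate in Gbps, bus width in bits), taken from the spec table
cards = {
    "R9 Fury X (HBM)": (1.0, 4096),
    "R9 290X (GDDR5)": (5.0, 512),
}

bandwidth = {
    name: memory_bandwidth_gbs(rate, width)
    for name, (rate, width) in cards.items()
}

for name, gbs in bandwidth.items():
    print(f"{name}: {gbs:.0f} GB/s")

ratio = bandwidth["R9 Fury X (HBM)"] / bandwidth["R9 290X (GDDR5)"]
print(f"Fury X advantage: {ratio:.2f}x")
```

Under these assumptions the Fury X works out to 512GB/sec against 320GB/sec for the R9 290X, a 1.6x increase that comes from HBM's very wide bus rather than from a high per-pin data rate.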

The R9 Fury X is launching at $649, which happens to be the same price as the card’s primary competition, the GeForce GTX 980 Ti. Launched at the end of May, the GTX 980 Ti is essentially a preemptive attack on the R9 Fury X from NVIDIA, offering performance close enough to NVIDIA’s GTX Titan X flagship that the difference is arguably immaterial. For AMD this means that while beating GTX Titan X would be nice, they really only need a win against the GTX 980 Ti, and as we’ll see the Fury X will make a good run at it, making this the closest AMD has come to an NVIDIA flagship card in quite some time.

Finally, from a market perspective, AMD will be going after a few different categories with the R9 Fury X. As competition for the GTX 980 Ti, AMD is focusing on 4K resolution gaming, based on a combination of the fact that 4K monitors are becoming increasingly affordable, 4K FreeSync monitors are finally available, and relative to NVIDIA’s wares, AMD fares the best at 4K. Expect to see AMD also significantly play up the VR possibilities of the R9 Fury X, though the major VR headset, the Oculus Rift, won’t ship until Q1 of 2016. Lastly, it has now been over three years since the launch of the original Radeon HD 7970, so for buyers looking for an upgrade from AMD’s first 28nm card, Fury X is in a good position to offer the kind of generational performance improvements that typically justify an upgrade.

458 Comments


looncraz - Thursday, July 2, 2015

1. eDRAM takes up more space and more energy and is slower than HBM.

2. HBM will make sense for GPUs/APUs, but not for use as system RAM.

3. Yes, almost a guarantee. But how long that will take is anybody's guess.

4. They don't "pay them" so to speak, they just have contractual restrictions during the game development phase that prevents AMD from getting an early enough and frequent enough snapshot of games so they can optimize their drivers in anticipation of the game's release. This makes nVidia look better in those games. The next hurdle is that GameWorks is intentionally designed to abuse nVidia's strengths over AMD and even their own older generation cards. Crysis 2's tessellation is the most blatant example.

Dribble - Monday, July 6, 2015

"Crysis 2's tessellation is the most blatant example"

No it wasn't. What you see in the 2D wireframe mode is completely different to what the 3D mode has to draw as it doesn't do the same culling. The whole thing was just another meaningless conspiracy theory.

mr_tawan - Friday, July 3, 2015

> do you think nvidia pays companies to optimize for their gpus and put less focus on amd gpus? especially in 'the way it is meant to be played' sponsored games

I don't think too many game developers check the device ID and lower the game performance when it's not the sponsor's card. However, I think through the developer relationship program (or something like that), the games with those logos tend to perform better with the respective GPU vendor as the game was developed with that vendor in mind, and with support from the vendor.

The game would be tested against the other vendors as well, but might not be as much as with the sponsor.

mindbomb - Thursday, July 2, 2015

Hi, I'd like to point out why the mantle performance was low. It was due to memory overcommitment lowering performance due to the 4GB of vram on the fury x, not due to a driver bug (it's a low level api anyway, there is not much for the driver to do). BF4's mantle renderer needs a surprisingly high amount of vram for optimal performance. This is also why the 2GB tonga struggles at 1080p.

YukaKun - Thursday, July 2, 2015

Good to know you're better now, Ryan.

I really enjoyed the great length and depth of your review.

Cheers!

Socius - Thursday, July 2, 2015

Curious as to why both toms hardware and anandtech were kind to the fury x. Is anandtech changing review style based on editorial requests from toms now? Because an extremely important point is stock overclock performance. And while the article mentions the tiny 4% performance boost the fury x gets from overclocking, it doesn't show what the 980ti can do. Or even more importantly...that the standard gtx 980 can overclock 30-40% and come out ahead of the fury x, leaving the fury x a rather expensive piece of limited hardware concept at best. Also important to mention that the video encoding test was pointless as Nvidia has moved away from CUDA accelerated video encoding in favour of NVENC hardware encoding. In fact a few drivers ago they had fully disabled CUDA accelerated encoding to promote the switchover.

YukaKun - Thursday, July 2, 2015

Then you must have selective reading, because they do mention it. In particular, they say if they just got a 7% OC, then the card will perform basically the same, and they it did.

No need to do an OC to the 980ti in that scenario.

Plus, they also mention the Fury X is still locked for OC. Give MSI and Sapphire (maybe AMD as well) until they deliver on their promise of the Fury having better control.

Cheers!

YukaKun - Thursday, July 2, 2015

* and then it did *

Edit function when? :(

Cheers!

Socius - Thursday, July 2, 2015

Again going back to the problem of "missing test data" in this review. Under a 11% GPU Clock OC (highest possible), which resulted in a net 5% FPS gain, the card hit nearly 400W (just the card, not total system) and 65C after a long session. Which means any more heat than this, and throttling comes into play even harder. This package is thermally restricted. That's why AMD went with an all in one cooler in the first place...because it wanted to clock it up as high as possible for consumers, but knew the design was a heat monster.

Outside of full custom loops, you won't be able to get much more out of this design even with fully unlocked voltage, your issue is still heat first. This is why it's important to show the standard GTX 980 OC'd compared to the Fury X OC'd. Because that completely changes the value proposition for the Fury X. But both Tom's and Anandtech have been afraid to be harsh on AMD in their reviews.

chizow - Thursday, July 2, 2015

Great point, it further backs the point myself and others have made that AMD already "overclocked" Fury X in an all out attempt to beat 980Ti and came close to hitting the chip's thermal limits, necessitating the water cooler. We've seen in the past, especially with Hawaii, that lower operating temps = lower leakage = lower power draw under load, so it's a very real possibility they could not have hit these frequencies stably with the full chip without WC.

When you look at what we know about the Air-Cooled Fury, this is even more likely, as AMD's slides listed it as a 275W part, but it is cut down and/or clocked lower.