The Next Generation Open Compute Hardware: Tried and Tested

by Johan De Gelas & Wannes De Smet on April 28, 2015 12:00 PM EST

Integrate: OpenRack

After Windmill and Watermark, the time was right for another round of consolidation, this time bringing the rack design into the mix.

One issue with the Freedom servers was that the PSU was non-redundant (which led to the Dragonstone design) and often had far more power capacity than would ever be needed, which put it on Facebook's efficiency radar. Adding a second PSU to every server would increase both CAPEX and OPEX, because even in active/passive mode the passive PSU still draws power.

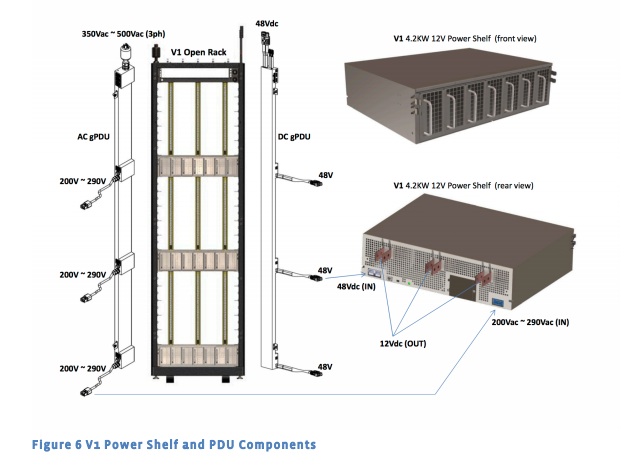

The logical conclusion was that this problem could not be solved within the server chassis, but could be solved by grouping the power supplies of multiple servers. The result was OpenRack v1, a rack with grouped power supplies on 'power shelves' that supply 12.5V DC to a 4.2kW 'power zone'. Each power zone has one power shelf, 3 OpenU (OU; 1 OU = 48mm) high, holding a highly available group of power supplies in a 5+1 redundant configuration that feeds 10 OU of equipment. When power demand is low, some of the PSUs are automatically powered off, allowing the remaining PSUs to operate much closer to the optimal point of their efficiency curve.
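The shedding behaviour described above can be sketched as a simple policy. This is a hypothetical illustration, not Facebook's actual power-shelf firmware: the six PSUs (5+1), the 4.2kW zone budget and the 12.5V bus come from the text, while the 840W per-PSU rating (4.2kW spread over five active units) and the 50% target load are assumptions chosen only to make the arithmetic concrete.

```python
import math

# From the text: a 5+1 power shelf (six PSUs) feeding a 4.2 kW zone at 12.5 V DC.
INSTALLED_PSUS = 6
ZONE_BUDGET_W = 4200.0
BUS_VOLTAGE_V = 12.5

# Hypothetical values for illustration, not taken from the OpenRack spec.
PSU_RATING_W = 840.0        # assumed: five active PSUs x 840 W = 4.2 kW
TARGET_LOAD_FRACTION = 0.5  # assumed peak of the PSU efficiency curve


def bus_current_a(load_w: float) -> float:
    """Current drawn from the 12.5 V bus for a given zone load (I = P / V)."""
    return load_w / BUS_VOLTAGE_V


def active_psus(load_w: float) -> int:
    """Hypothetical shedding policy: how many PSUs to keep powered on.

    Keeps enough PSUs to carry the load plus one spare (N+1), then picks
    the count that puts each PSU closest to the target load fraction.
    """
    if not 0.0 <= load_w <= ZONE_BUDGET_W:
        raise ValueError("load is outside the power zone budget")
    needed = max(1, math.ceil(load_w / PSU_RATING_W))
    minimum = min(INSTALLED_PSUS, needed + 1)
    # Active PSUs share the load equally; score each candidate count.
    return min(
        range(minimum, INSTALLED_PSUS + 1),
        key=lambda n: abs(load_w / (n * PSU_RATING_W) - TARGET_LOAD_FRACTION),
    )


if __name__ == "__main__":
    for load in (500.0, 1000.0, 2000.0, 3000.0, 4200.0):
        n = active_psus(load)
        print(f"{load:6.0f} W -> {n} PSUs on, {bus_current_a(load):5.1f} A on the bus")
```

Under these assumptions a fully loaded 4.2kW zone draws 336A from the 12.5V bus and keeps all six PSUs on, while a 2kW load runs on five. The N+1 floor keeps one spare online even when units are shed.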

Another key improvement over regular racks was the power distribution system, which eliminated power cables and the need to disconnect and reconnect them every time a server is serviced. Power is delivered by three vertical power rails that make up the bus bar, with a rail segment for each power zone. Once a server is fitted with a bus bar connector, you simply slide it in and the connector hot-plugs into the rail at the back. An additional 2 OU equipment bay at the top of the rack houses the switching equipment.
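A rough bookkeeping model of the constraints this layout imposes is sketched below. The class and method names, server names and wattages are hypothetical; only the 3 OU shelf, the 10 OU of equipment per zone, the 4.2kW zone budget and the 48mm OU come from the text. Each power zone is treated as one bus bar segment, so installing a server is just a check that the segment has room and power to spare, with no cabling step.

```python
from dataclasses import dataclass, field

# Figures from the text.
SHELF_OU = 3            # power shelf height
ZONE_EQUIPMENT_OU = 10  # equipment fed by one power shelf
ZONE_BUDGET_W = 4200.0  # 4.2 kW per power zone
OU_MM = 48              # 1 OU = 48 mm


@dataclass
class PowerZone:
    """Hypothetical model of one bus bar segment and the servers plugged into it."""
    servers: dict[str, tuple[int, float]] = field(default_factory=dict)  # name -> (OU, W)

    def used_ou(self) -> int:
        return sum(h for h, _ in self.servers.values())

    def used_w(self) -> float:
        return sum(w for _, w in self.servers.values())

    def slide_in(self, name: str, height_ou: int, watts: float) -> None:
        """Hot-plug a server: only space and the zone's power budget are checked."""
        if name in self.servers:
            raise ValueError(f"{name} is already installed")
        if self.used_ou() + height_ou > ZONE_EQUIPMENT_OU:
            raise ValueError("not enough OU left in this power zone")
        if self.used_w() + watts > ZONE_BUDGET_W:
            raise ValueError("power zone budget exceeded")
        self.servers[name] = (height_ou, watts)

    def pull_out(self, name: str) -> None:
        """Service a server: there is no power cable to disconnect first."""
        del self.servers[name]


def zone_height_mm() -> int:
    """Physical height of one zone: the shelf plus the equipment it feeds."""
    return (SHELF_OU + ZONE_EQUIPMENT_OU) * OU_MM


if __name__ == "__main__":
    zone = PowerZone()
    zone.slide_in("web-1", 2, 350.0)
    zone.slide_in("web-2", 2, 350.0)
    print(zone.used_ou(), "OU,", zone.used_w(), "W,", zone_height_mm(), "mm per zone")
```

By this arithmetic a zone occupies (3 + 10) × 48mm = 624mm of rack height, not counting the 2 OU switch bay at the top.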

26 Comments

Kevin G - Tuesday, April 28, 2015

Excellent article. The efficiency gains are apparent even with the suboptimal PSUs used for benchmarking. (Though there are repeated concurrency values in the benchmarking tables. Is this intentional?)

I'm looking forward to seeing more compute node hardware based around Xeon-D, ARM and potentially even POWER8 if we're lucky. Options are never a bad thing.

Kind of odd to see the Knox mass storage units. I would have thought that OCP storage would have gone the Backblaze route, with vertically mounted disks for easier hot swap, density and cooling. All they'd need to develop would be a proprietary backplane to handle the Kinetic disks from Seagate. Basic switching logic could also be put on the backplane so the only external networking would be high-speed uplinks (40 Gbit QSFP+?).

Speaking of the Kinetic disks, how is redundancy handled with a network-facing drive? Does the host generating the data replicate it to multiple network disks for virtual RAID1 redundancy? Is there an aggregator that handles data replication, scrubbing, drive restoration and distribution, sort of like a poor man's SAN controller? Also, do the Kinetic drives have two Ethernet interfaces to emulate multipathing in the event of a switch failure (quick Googling didn't give me an answer either way)?

The cold storage racks using Blu-ray discs in cartridges don't surprise me for archiving. What puzzles me is how data gets moved to them. I've been under the impression that there was never enough write throughput to make migration meaningful. As a hypothetical example, by the time 20 TB of data has been written to the discs, over 20 TB more has been generated and added to the write queue. Essentially, big data was too big to archive to disc or tape. Parallelism would solve the throughput problem, but that gets expensive and takes up data center space that could be used for hot storage and compute.

Do the Knox storage and Wedge networking hardware use the same PDU connectivity as the compute units?

Are the 600 mm wide racks compatible with US telecom-width (23" wide) equipment? A few large OEMs offer equipment in that form factor, and it'd be nice for a smaller company to mix and match hardware with OCP to suit their needs.

nils_ - Wednesday, April 29, 2015

You can use something like Ceph or HDFS for data redundancy, which is kind of like RAID over a network.

davegraham - Tuesday, April 28, 2015

Also, Juniper Networks has an ONIE-compliant OCP switch called the OCX1100, making Juniper the only Tier 1 switch manufacturer (e.g. Cisco, Arista, Brocade) to provide such a device.

floobit - Tuesday, April 28, 2015

This is very nice work. One of the best articles I've seen here all year. I think this points to the future of server computing, but I really wonder whether the more traditional datacenter model (VMware on beefy blades with a proprietary FC-connected SAN) can be integrated with this massively distributed webapp model. Load balancing and failover are presumably handled in the app layer, removing the need for hypervisors. As pretty as Oracle's recent marketing materials are, I'm pretty sure they don't have an HR app that can be load-balanced at the app layer alongside an expense app and an ERP app. Maybe in another 10 years. Then again, I have started to see business suites where the vendor hosts the whole thing for you, and this could be a model for their underlying infrastructure.

ggathagan - Tuesday, April 28, 2015

In the original article on these servers, it was stated that the PSUs were run on 277V, as opposed to 208V. 277V involves three-phase power wiring, which is common in commercial buildings but usually restricted to HVAC-related equipment and lighting.

That article stated that Facebook saved "about 3-4% of energy use, a result of lower power losses in the transmission lines."

If OpenRack carries that design over, companies will have to add the cost of bringing 277V power to the rack in order to realize that gain in efficiency.

sor - Wednesday, April 29, 2015

208 is three-phase as well, generally three 120V phases, with 208 available between phases or 120 from phase to neutral. It's very common for datacenter equipment. 277 to the rack IS less common, but you seemed to get hung up on the three-phase part.

Casper42 - Monday, May 4, 2015

Three-phase restricted to HVAC? That's ridiculous, I see three-phase in datacenters all the time.

And server vendors are now selling 277V AC PSUs for exactly the reason FB mentions. Instead of converting the 480V main to 220V or 208V, you just take a 277V feed right off the three-phase supply and use it.
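For reference, the 208/120 and 480/277 pairings in this exchange follow from the standard relation between line-to-line and line-to-neutral voltage in a wye-connected three-phase system, assuming the usual US nominal voltages:

$$
V_{LN} = \frac{V_{LL}}{\sqrt{3}}, \qquad
\frac{208\,\text{V}}{\sqrt{3}} \approx 120\,\text{V}, \qquad
\frac{480\,\text{V}}{\sqrt{3}} \approx 277\,\text{V}
$$

So a 277V PSU runs from one phase of a 480V wye feed to neutral, skipping the step-down to 208V.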

clehene - Tuesday, April 28, 2015

You mention a reported $2 billion in savings, but the article you refer to states $1.2 billion.

FlushedBubblyJock - Tuesday, April 28, 2015

One is the truth and the other is "NON Generally Accepted Accounting Procedures", aka its lying equivalent.

wannes - Wednesday, April 29, 2015

Link corrected. Thanks!