The AMD FreeSync Review

by Jarred Walton on March 19, 2015 12:00 PM EST

FreeSync vs. G-SYNC Performance

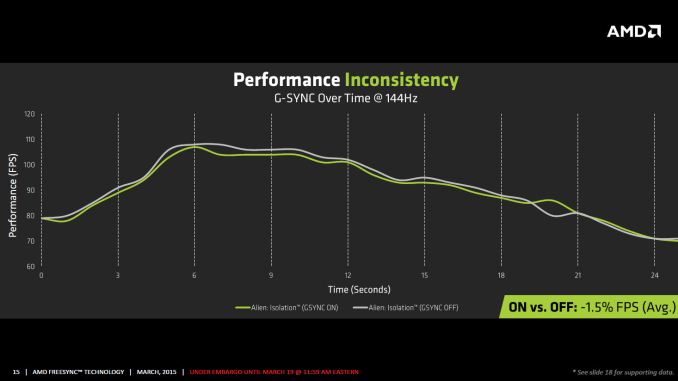

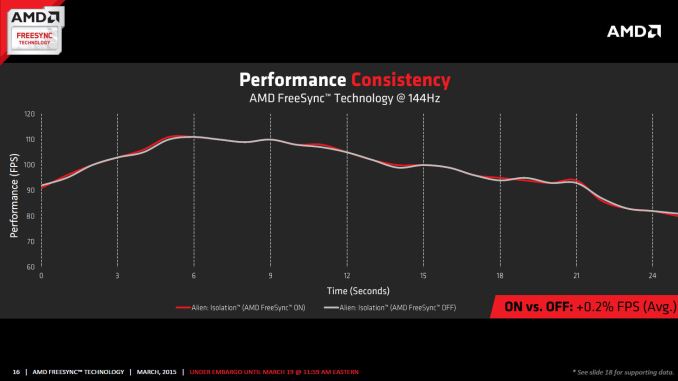

One item that piqued our interest during AMD’s presentation was a claim that G-SYNC carries a performance hit while FreeSync does not. NVIDIA has said as much in the past, though at the time they also noted that they were "working on eliminating the polling entirely," so things may have changed. Even then, the difference was generally quite small: less than 3%, or basically not something you would notice without capturing frame rates. AMD did some testing, however, and presented the following two slides:

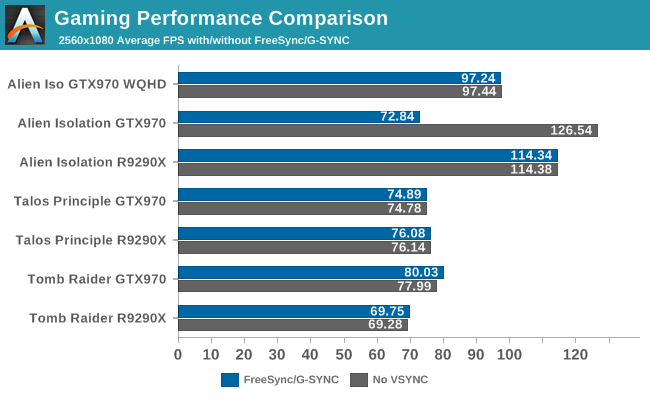

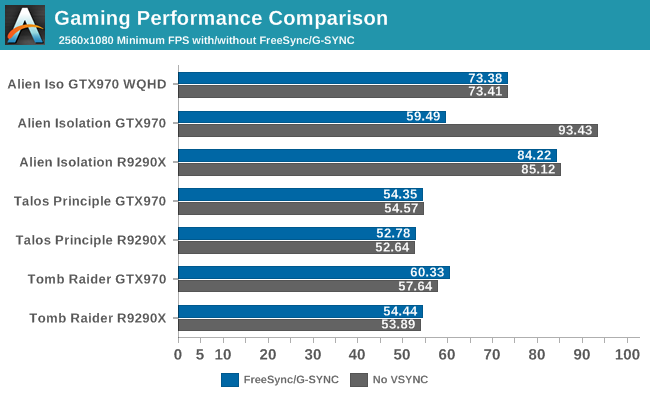

It’s probably safe to say that AMD is splitting hairs when they show a 1.5% performance drop in one specific scenario compared to a 0.2% performance gain, but we wanted to see if we could corroborate their findings. Having tested plenty of games, we already know that most games, even those with built-in benchmarks that tend to be very consistent, will show minor differences between benchmark runs. So we picked three games with deterministic benchmarks and ran each one three times with G-SYNC/FreeSync enabled and three times with it disabled. The games we selected are Alien Isolation, The Talos Principle, and Tomb Raider. Here are the average and minimum frame rates from three runs:

Except for a glitch when testing Alien Isolation at a custom resolution, our results show little difference between enabling and disabling G-SYNC/FreeSync, and that’s what we want to see. While NVIDIA showed a performance drop with Alien Isolation using G-SYNC, we weren’t able to reproduce that in our testing; in fact, we even measured a 2.5% performance increase with G-SYNC in Tomb Raider. But again, let’s be clear: 2.5% is not something you’ll notice in practice. FreeSync, meanwhile, shows results that are well within the margin of error.
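To make the "margin of error" reasoning concrete, here is a minimal Python sketch of one way to compare averaged benchmark runs. It assumes each setting is run several times and treats a change as meaningful only when it exceeds the larger max-min spread of the repeated runs. That threshold is a hypothetical rule of thumb chosen for illustration, not the method used for this review, and the frame rates in the example are made-up placeholders rather than our measured results.

```python
from statistics import mean


def percent_change(baseline_runs, test_runs):
    """Percent change in mean FPS of test_runs relative to baseline_runs."""
    base = mean(baseline_runs)
    return (mean(test_runs) - base) / base * 100.0


def run_spread_percent(runs):
    """Max-min spread of repeated runs, as a percentage of their mean."""
    return (max(runs) - min(runs)) / mean(runs) * 100.0


def exceeds_noise(baseline_runs, test_runs):
    """Hypothetical rule of thumb: a change counts as meaningful only if it is
    larger than the bigger run-to-run spread of the two settings."""
    noise = max(run_spread_percent(baseline_runs), run_spread_percent(test_runs))
    return abs(percent_change(baseline_runs, test_runs)) > noise


if __name__ == "__main__":
    # Placeholder average FPS values (three runs per setting), not real results.
    sync_off = [50.0, 50.4, 49.6]
    sync_on = [50.5, 51.0, 50.0]
    print(f"change: {percent_change(sync_off, sync_on):+.1f}%")
    print("outside run-to-run noise:", exceeds_noise(sync_off, sync_on))
```

With these placeholder values the sketch reports a +1.0% change that falls inside the roughly 2% spread of the repeated runs, which is the kind of result that reads as "no real difference." A proper statistical test would need more runs than three per setting.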

What about that custom resolution problem on G-SYNC? We used the ASUS ROG Swift with the GTX 970, and we thought it might be useful to run the same resolution as the LG 34UM67 (2560x1080). Unfortunately, that didn’t work so well with Alien Isolation: the frame rates plummeted with G-SYNC enabled for some reason. Tomb Raider had a similar issue at first, but the problem went away once we created additional custom resolutions with multiple refresh rates (60/85/100/120/144 Hz). We never got Alien Isolation to run well with G-SYNC at our custom resolution, however. We’ve notified NVIDIA of the glitch, but note that when we tested Alien Isolation at the native WQHD resolution the performance was virtually identical, so the problem appears to affect only custom resolutions, and it is also game specific.

For those interested in a more detailed look at the frame rates of the individual runs (six total per game and setting, three with and three without G-SYNC/FreeSync), we’ve created a gallery of the frame rates over time. There’s so much overlap that mostly the top line is visible, but that just proves the point: there’s little difference beyond the usual minor variation between benchmark runs. In one of the games, Tomb Raider, even runs with identical settings show a fair amount of variation, though the average FPS is pretty consistent.

350 Comments


junky77 - Thursday, March 19, 2015

Girls, what about laptops..

medi03 - Thursday, March 19, 2015

It's lovely how the first page of the article about FreeSync talks exclusively about nVidia.

JarredWalton - Thursday, March 19, 2015

It's background information that's highly pertinent, and if "the first page" means "the first 4 paragraphs" then you're right... but the last two talk mostly about FreeSync.

Oxford Guy - Thursday, March 19, 2015

I love how the pricing page doesn't do anything to address a big problem with both FreeSync and G-Sync -- the assumption that people want to replace the monitors they already have, or have money to throw away to do so.

I bought an $800 BenQ BL3200PT 32" 1440 A-MVA panel and I am NOT going to just buy another monitor in order to get the latest thing graphics card companies have dreamt up.

Companies need to step up and offer consumers the ability to send in their panels for modification. Why haven't you even thought of that and mentioned it in your article? You guys, like the rest of the tech industry, just blithely support planned obsolescence at a ridiculous speed -- like with the way Intel never even bothered to update the firmware on the G1 ssd to give it TRIM support.

People have spent even more money than I did on high-quality monitors -- and very recently. It's frankly a disservice to the tech community to neglect to place even the slightest pressure on these companies to do more than tell people to buy new monitors to get basic features like this.

You guys need to start advocating for the consumer not just the tech companies that send you stuff.

JarredWalton - Thursday, March 19, 2015

While we're at it: Why don't companies allow you to send in your old car to have it upgraded with a faster engine? Why can't I send in my five year old HDTV to have it turned into a Smart TV? I have some appliances that are getting old as well; I need Kenmore to let me bring in my refrigerator to have it upgraded as well, at a fraction of the cost of a new one!

But seriously, modifying monitors is hardly a trivial affair and the only computer tech that allows upgrades without replacing the old part is... well, nothing. You want a faster CPU? Sure, you can upgrade, but the old one is now "useless". I guess you can add more RAM if you have empty slots, or more storage, or an add-in board for USB 3.1 or similar...on a desktop at least. The fact is you buy technology for what it currently offers, not for what it might offer in the future.

If you have a display you're happy with, don't worry about it -- wait a few years and then upgrade when it's time to do so.

Oxford Guy - Friday, March 20, 2015

"Old" as in products still being sold today. Sure, bud.

Oxford Guy - Friday, March 20, 2015

Apple offered a free upgrade for Lisa 1 buyers to the Lisa 2 that included replacement internal floppy drives and a new fascia. Those sorts of facts, though, are likely to escape your attention because it's easier to just stick with the typical mindset the manufacturers, and tech sites, love to endorse blithely --- fill the landfills as quickly as possible with unnecessary "upgrade" purchases.

Oxford Guy - Friday, March 20, 2015

Macs also used to be able to have their motherboards replaced to upgrade them to a more current unit. "The only computer tech that allows upgrades without replacing the old part is... well, nothing." And whose mindset is responsible for that trend? Hmm? Once upon a time people could actually upgrade their equipment for a fee.

Oxford Guy - Friday, March 20, 2015

The silence about my example of the G1 ssd's firmware is also deafening. I'm sure it would have taken tremendous resources on Intel's part to offer a firmware patch!

JarredWalton - Friday, March 20, 2015

The G1 question is this: *could* Intel have fixed it via a firmware update? Maybe, or maybe Intel looked into it and found that the controller in the G1 simply wasn't designed to support TRIM, as TRIM didn't exist when the G1 was created. But "you're sure" it was just a bit of effort away, and since you were working at Intel's Client SSD department...oh, wait, you weren't. Given they doubled the DRAM from 16MB to 32MB and switched controller part numbers, it's probable that G1 *couldn't* be properly upgraded to support TRIM: http://www.anandtech.com/show/2808/2

So if that's the case, it sounds a lot like Adaptive Sync -- the standard didn't exist when many current displays were created, and it can't simply be "patched in". Now you want existing displays that are already assembled to be pulled apart and upgraded. That would likely cost more money than just selling the displays at a discount, as they weren't designed to be easily disassembled and upgraded.

The reality of tech is that creating a product that can be upgraded costs time and resources, and when people try upgrading and mess up it ends up bringing in even more support calls and problems. So on everything smaller than a desktop, we've pretty much lost the ability to upgrade components -- at least in a reasonable and easy fashion.

Is it possible to make an upgradeable display? I suppose so, but what standards do you support to ensure future upgrades? Oh, you can't foresee the future so you have to make a modular display. Then you might have the option to swap out the scaler hardware, LCD panel, maybe even the power brick! And you also have a clunkier looking device because users need to be able to get inside to perform the upgrades.