Intel Xeon E5 Version 3: Up to 18 Haswell EP Cores

by Johan De Gelas on September 8, 2014 12:30 PM ESTUsing a Mobile Architecture Inside a 145W Server Chip

About 15 months after the appearance of the Haswell core in desktop products (June 2013), the "optimized-for-mobile" Haswell architecture is now being adopted into Intel server products.

Left to right: LGA1366 (Xeon 5600), LGA2011 (Xeon E5-2600v1/v2) and LGA2011v3 (E5-2600v3) socket.

Haswell is Intel's fourth tock, a new architecture on the same succesful 22nm process technology (the famous P1270 process) that was used for the Ivy Bridge EP or Xeon E5-2600 v2. Anand discussed the new Haswell architecture in great detail back in 2012, but as a refresher, let's quickly go over the improvements that the Haswell core brings.

Very little has changed in the front-end of the core compared to Ivy Bridge, with the exception of the usual branch prediction improvements and enlarged TLBs. As you might recall, it is the back-end, the execution part, that is largely improved in the Haswell architecture:

- Larger OoO Window (192 vs 168 entries)

- Deeper Load and Store buffers (72 vs 64, 42 vs 36)

- Larger scheduler (60 vs 54)

- The big splash: 8 instead of 6 execution ports: more execution resources for store address calculation, branches and integer processing.

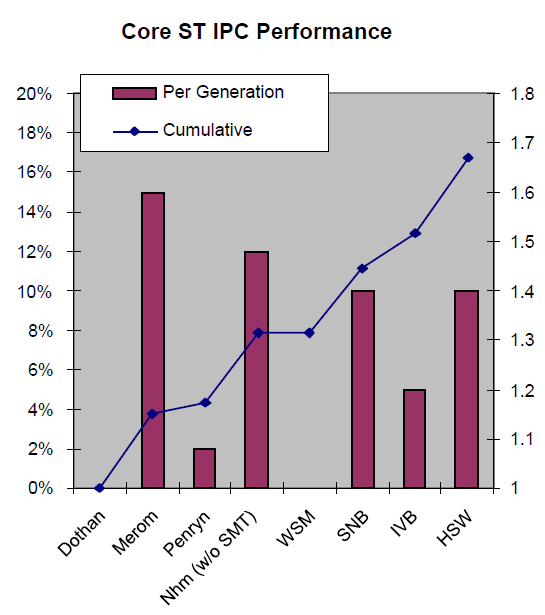

All in all, Intel calculated that integer processing at the same clock speed should be about 10% better than on Ivy Bridge (Xeon E5-2600 v2, launched September 2013), 15-16% better than on Sandy Bridge (Xeon E5-2600, March 2012), and 27% than Nehalem (Xeon 5500, March 2009).

Even better performance improvements can be achieved by recompiling software and using the AVX2 SIMD instructions. The original AVX ISA extension was mostly about speeding up floating point intensive workloads, but AVX2 makes the SIMD integer instructions capable of working with 256-bit registers.

Unfortunately, in a virtualized environment, these ISA extensions are sometimes more curse than blessing. Running AVX/SSE (and other ISA extensions) code can disable the best virtualization features such as high availability, load balancing, and live migration (vMotion). Therefore, administrators will typically force CPUs to "keep quiet" about their newest ISA extensions (VMware EVC). So if you want to integrate a Haswell EP server inside an existing Sandy Bridge EP server cluster, all the new features including AVX2 that were not present in the Sandy Bridge EP are not available. The results is that in virtualized clusters, ISA extensions are rarely used.

Instead, AVX2 code will typically run on a "native" OS. The best known use of AVX2 code is inside video encoders. However, the technology might still prove to be more useful to enterprises that don't work with pixels but with business data. Intel has demonstrated that the AVX2 instructions can also be used for accelerating the compression of data inside in-memory databases (SAP HANA, Microsoft Hekaton), so the integer flavor of AVX2 might become important for fast and massive data mining applications.

Last but not least, the new bit field manipulation and the use of 256-bit registers can speed up quite a few cryptographic algorithms. Large websites will probably be the application inside the datacenter that benefits quickly from AVX2. Simply using the right libraries might speed up RSA-2048 (opening a secure connection), SHA-256 (hashing), and AES-GCM. We will discuss this in more detail in our performance review.

Floating point

Floating point code should benefit too, as Intel has finally included Fused Multiply Add (FMA) instructions. Peak FLOP performance is doubled once again. This should benefit a whole range of HPC applications, which also tend to be recompiled much quicker than the traditional server applications. The L1 and L2 cache bandwidth has also been doubled to better cope with the needs of AVX2 instructions.

85 Comments

View All Comments

martinpw - Monday, September 8, 2014 - link

There is a nice tool called i7z (can google it). You need to run it as root to get the live CPU clock display.kepstin - Monday, September 8, 2014 - link

Most Linux distributions provide a tool called "turbostat" which prints statistical summaries of real clock speeds and c state usage on Intel cpus.kepstin - Monday, September 8, 2014 - link

Note that if turbostat is missing or too old (doesn't support your cpu), you can build it yourself pretty quick - grab the latest linux kernel source, cd to tools/power/x86/turbostat, and type 'make'. It'll build the tool in the current directory.julianb - Monday, September 8, 2014 - link

Finally the e5-xxx v3s have arrived. I too can't wait for the Cinebench and 3DS Max benchmark results.Any idea if now that they are out the e5-xxxx v2s will drop down in price?

Or Intel doesn't do that...

MrSpadge - Tuesday, September 9, 2014 - link

Correct, Intel does not really lower prices of older CPUs. They just gradually phase out.tromp - Monday, September 8, 2014 - link

As an additional test of the latency of the DRAM subsystem, could you please run the "make speedup" scaling benchmark of my Cuckoo Cycle proof-of-work system at https://github.com/tromp/cuckoo ?That will show if 72 threads (2 cpus with 18 hyperthreaded cores) suffice to saturate the DRAM subsystem with random accesses.

-John

Hulk - Monday, September 8, 2014 - link

I know this is not the workload these parts are designed for, but just for kicks I'd love to see some media encoding/video editing apps tested. Just to see what this thing can do with a well coded mainstream application. Or to see where the apps fades out core-wise.Assimilator87 - Monday, September 8, 2014 - link

Someone benchmark F@H bigadv on these, stat!iwod - Tuesday, September 9, 2014 - link

I am looking forward to 16 Core Native Die, 14nm Broadwell Next year, and DDR4 is matured with much better pricing.Brutalizer - Tuesday, September 9, 2014 - link

Yawn, the new upcoming SPARC M7 cpu has 32 cores. SPARC has had 16 cores for ages. Since some generations back, the SPARC cores are able to dedicate all resources to one thread if need be. This way the SPARC core can have one very strong thread, or massive throughput (many threads). The SPARC M7 cpu is 10 billion transistors:http://www.enterprisetech.com/2014/08/13/oracle-cr...

and it will be 3-4x faster than the current SPARC M6 (12 cores, 96 threads) which holds several world records today. The largest SPARC M7 server will have 32-sockets, 1024 cores, 64TB RAM and 8.192 threads. One SPARC M7 cpu will be as fast as an entire Sunfire 25K. :)

The largest Xeon E5 server will top out at 4-sockets probably. I think the Xeon E7 cpus top out at 8-socket servers. So, if you need massive RAM (more than 10TB) and massive performance, you need to venture into Unix server territory, such as SPARC or POWER. Only they have 32-socket servers capable of reaching the highest performance.

Of course, the SGI Altix/UV2000 servers have 10.000s of cores and 100TBs of RAM, but they are clusters, like a tiny supercomputer. Only doing HPC number crunching workloads. You will never find these large Linux clusters run SAP Enterprise workloads, there are no such SAP benchmarks, because clusters suck at non HPC workloads.

-Clusters are typically serving one user who picks which workload to run for the next days. All SGI benchmarks are HPC, not a single Enterprise benchmark exist for instance SAP or other Enterprise systems. They serve one user.

-Large SMP servers with as many as 32 sockets (or even 64-sockets!!!) are typically serving thousands of users, running Enterprise business workloads, such as SAP. They serve thousands of users.