The ARM vs x86 Wars Have Begun: In-Depth Power Analysis of Atom, Krait & Cortex A15

by Anand Lal Shimpi on January 4, 2013 7:32 AM EST

Posted in: Tablets, Intel, Samsung, Arm, Cortex A15, Smartphones, Mobile, SoCs

Late last month, Intel dropped by my office with a power engineer for a rare demonstration of its competitive position versus NVIDIA's Tegra 3 when it came to power consumption. Like most companies in the mobile space, Intel doesn't just rely on device-level power testing to determine battery life. To ensure that the CPU, GPU, memory controller and even NAND are all as power efficient as possible, most companies measure power consumption directly on a tablet or smartphone motherboard.

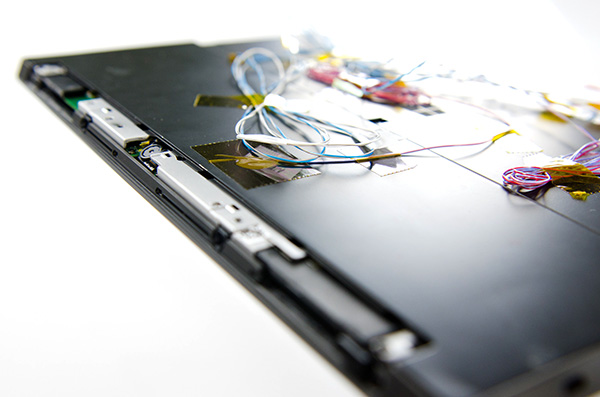

The process would be a piece of cake if you had measurement points already prepared on the board, but in most cases Intel (and its competitors) are taking apart a retail device and hunting for a way to measure CPU or GPU power. I described how it's done in the original article:

Measuring power at the battery gives you an idea of total platform power consumption including display, SoC, memory, network stack and everything else on the motherboard. This approach is useful for understanding how long a device will last on a single charge, but if you're a component vendor you typically care a little more about the specific power consumption of your competitors' components.

What follows is a good mixture of art and science. Intel's power engineers will take apart a competing device and probe whatever looks to be a power delivery or filtering circuit while running various workloads on the device itself. By correlating the type of workload to spikes in voltage in these circuits, you can figure out what components on a smartphone or tablet motherboard are likely responsible for delivering power to individual blocks of an SoC. Despite the high level of integration in modern mobile SoCs, the major players on the chip (e.g. CPU and GPU) tend to operate on their own independent voltage planes.

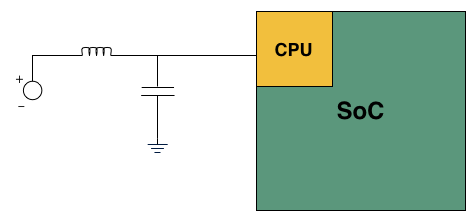

A basic LC filter

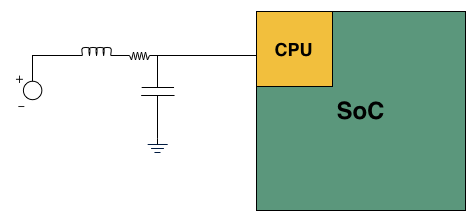

What usually happens is you'll find a standard LC filter (inductor + capacitor) supplying power to a block on the SoC. Once the right LC filter has been identified, all you need to do is lift the inductor, insert a very small resistor (2-20 mΩ) in series and measure the voltage drop across the resistor. With the voltage drop and resistance known, Ohm's law gives you the current, and multiplying that current by the rail voltage gives you power. Using good external instruments (NI USB-6289) you can plot power over time and get a good idea of the power consumption of individual IP blocks within an SoC.

Basic LC filter modified with an inline resistor
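The arithmetic behind the inline-resistor measurement can be sketched in a few lines of Python. This is only an illustration, not Intel's actual tooling: the function names, the sample values and the separately measured rail voltage are all assumptions made for the example. It converts a voltage drop across the shunt into current and power, then integrates a series of power samples over time to get energy in joules.

```python
def shunt_power(v_drop, r_shunt, v_rail):
    """Return (current in A, power in W) for one sample.

    v_drop  : voltage measured across the inline resistor, in volts
    r_shunt : resistance of the inline resistor, in ohms (e.g. 0.010 for 10 mOhm)
    v_rail  : supply voltage of the block being measured, in volts
              (assumed to be measured separately; hypothetical input)
    """
    current = v_drop / r_shunt   # Ohm's law: I = V / R
    power = current * v_rail     # P = I * V on that rail
    return current, power


def energy_joules(times_s, powers_w):
    """Integrate power samples over time with the trapezoidal rule.

    times_s  : sample timestamps in seconds, in increasing order
    powers_w : power readings in watts, one per timestamp
    """
    total = 0.0
    for i in range(1, len(times_s)):
        dt = times_s[i] - times_s[i - 1]
        total += 0.5 * (powers_w[i] + powers_w[i - 1]) * dt
    return total


if __name__ == "__main__":
    # Hypothetical sample: 5 mV across a 10 mOhm resistor on a 1.0 V rail.
    current, power = shunt_power(0.005, 0.010, 1.0)
    print(f"current = {current:.3f} A, power = {power:.3f} W")

    # Hypothetical power trace sampled once per second.
    print(f"energy = {energy_joules([0.0, 1.0, 2.0], [1.0, 1.0, 3.0]):.3f} J")
```

Since a watt is one joule per second, the integrated value is the area under the power-over-time curve, which makes it easier to compare a chip that finishes quickly at higher power against one that runs longer at lower power.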

The previous article focused on an admittedly not too interesting comparison: Intel's Atom Z2760 (Clover Trail) versus NVIDIA's Tegra 3. After much pleading, Intel returned with two more tablets: a Dell XPS 10 using Qualcomm's APQ8060A SoC (dual-core 28nm Krait) and a Nexus 10 using Samsung's Exynos 5 Dual (dual-core 32nm Cortex A15). What was a walk in the park for Atom all of a sudden became much more challenging. Both of these SoCs are built on very modern, low-power manufacturing processes, and Intel no longer has a performance advantage compared to Exynos 5.

Just like last time, I calibrated all displays to our usual 200 nits setting and kept the software and configurations as close to equal as possible. Both tablets were purchased at retail by Intel, but I verified their performance against our own samples/data and noticed no meaningful deviation. Since I don't have a Dell XPS 10 of my own, I compared performance to the Samsung ATIV Tab and confirmed that things were at least performing as they should.

We'll start with the Qualcomm based Dell XPS 10...

140 Comments

Kidster3001 - Friday, January 4, 2013

Samsung uses everyone's chips in their phones. Samsung, Qualcomm, TI... everyone's. I would not be surprised to see a Samsung phone with Atom in it eventually.

jeffkibuule - Friday, January 4, 2013

They've never used other non-Samsung SoCs by choice, especially in their high end phones. They only used Qualcomm MSM8960 in the US GS III because Qualcomm's separate baseband MDM9615 wasn't ready. As soon as it was, we saw the Galaxy Note II use Exynos again. Nvidia and TI chips have been used in the low end from Samsung, but that's not profitable to anyone.

Intel needs a major design win from a tier one OEM willing to put its chip inside their flagship phone, and with most phone OEMs actually choosing to start designing their own ARM SoCs (including even LG and Huawei), that task is getting a lot harder than you might think.

felixyang - Saturday, January 5, 2013

Some versions of Samsung's GS2 use TI's OMAP.

iwod - Saturday, January 5, 2013

Exactly like what is said above. If they have a choice they would rather use everything they produce themselves. Simply because wasted fab space is expensive.

Icehawk - Friday, January 4, 2013

I find these articles very interesting - however I'd really like to see an aggregate score/total for power usage, IOW what is the area under the curve? As discussed being quicker to complete at higher power can be more efficient - however when looking at a graph it is very hard to see what the total area is. Giving a total wattage used during the test (ie, area under curve) would give a much easier metric to read and it is the important #, not what the voltage maxes or minimums at but the overall usage over time/process IMO.

extide - Friday, January 4, 2013

There are indeed several graphs that display total power used in joules, which is the area under the curve of the watts graphs. Maybe you missed them?

jwcalla - Friday, January 4, 2013

That's what the bar charts are showing.

GeorgeH - Friday, January 4, 2013

It's already there. A Watt is a Joule/Second, so the area under the power/time graphs is measured in Watts * Seconds = Joules.

Veteranv2 - Friday, January 4, 2013

Another Intel PR Article, it is getting really sad on this website.

Now since you are still using Win8 which is garbage for ARM. Please use the correct software platform for ARM chips. I'd love to see those power measurements then.

Anandtech did it again. Pick the most favorable software platform for Intel, give the least favorable to ARM.

Way to go! Again....

Intel PR at its best...

Veteranv2 - Friday, January 4, 2013

Oh wait it's even better!

They used totally different screens with almost 4 times the pixels on the nexus 10 and then says it requires more power to do benchmarks. Hahaha, this review gave me a good laugh. Even worse than the previous ones.

This might explain the lack of product overviews at the start.