The ARM vs x86 Wars Have Begun: In-Depth Power Analysis of Atom, Krait & Cortex A15

by Anand Lal Shimpi on January 4, 2013 7:32 AM EST

Posted in: Tablets, Intel, Samsung, Arm, Cortex A15, Smartphones, Mobile, SoCs

Late last month, Intel dropped by my office with a power engineer for a rare demonstration of its competitive position versus NVIDIA's Tegra 3 when it came to power consumption. Like most companies in the mobile space, Intel doesn't just rely on device level power testing to determine battery life. In order to ensure that its CPU, GPU, memory controller and even NAND are all as power efficient as possible, most companies will measure power consumption directly on a tablet or smartphone motherboard.

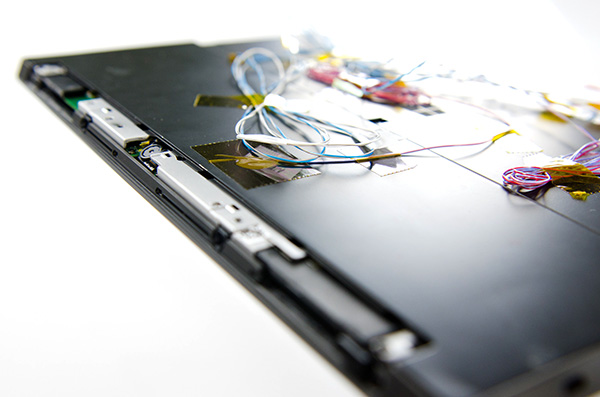

The process would be a piece of cake if you had measurement points already prepared on the board, but in most cases Intel (and its competitors) are taking apart a retail device and hunting for a way to measure CPU or GPU power. I described how it's done in the original article:

Measuring power at the battery gives you an idea of total platform power consumption including display, SoC, memory, network stack and everything else on the motherboard. This approach is useful for understanding how long a device will last on a single charge, but if you're a component vendor you typically care a little more about the specific power consumption of your competitors' components.

What follows is a good mixture of art and science. Intel's power engineers will take apart a competing device and probe whatever looks to be a power delivery or filtering circuit while running various workloads on the device itself. By correlating the type of workload to spikes in voltage in these circuits, you can figure out what components on a smartphone or tablet motherboard are likely responsible for delivering power to individual blocks of an SoC. Despite the high level of integration in modern mobile SoCs, the major players on the chip (e.g. CPU and GPU) tend to operate on their own independent voltage planes.
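The correlation step can be illustrated with a short Python sketch. This is not Intel's actual tooling; it only assumes you have logged, at the same sample times, a 0/1 flag for whether a workload (say, a CPU-bound benchmark) was running and a voltage reading at each candidate probe point. The probe names (`L12`, `L7`) and the use of a Pearson correlation are my own assumptions for illustration.

```python
from math import sqrt


def pearson(xs, ys):
    """Pearson correlation coefficient between two equal-length series."""
    n = len(xs)
    if n != len(ys) or n < 2:
        raise ValueError("series must have the same length (at least 2)")
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        return 0.0  # a flat series carries no information about the workload
    return cov / sqrt(var_x * var_y)


def rank_probe_points(workload_active, probe_traces):
    """Sort probe points by how closely their readings track the workload.

    workload_active: list of 0/1 flags, one per sample.
    probe_traces: dict mapping a (hypothetical) probe name to its readings.
    """
    scores = {
        name: pearson(workload_active, trace)
        for name, trace in probe_traces.items()
    }
    return sorted(scores.items(), key=lambda item: item[1], reverse=True)


# Hypothetical data: the workload alternates between idle and busy.
workload = [0, 0, 1, 1, 0, 0, 1, 1]
probes = {
    "L12": [0.1, 0.1, 0.9, 0.8, 0.1, 0.2, 0.9, 0.9],  # follows the workload
    "L7": [0.5, 0.4, 0.5, 0.4, 0.5, 0.4, 0.5, 0.4],   # unrelated ripple
}
ranking = rank_probe_points(workload, probes)
print(ranking[0][0])  # the probe point most likely feeding the busy block
```

In practice you would repeat this with CPU-heavy and GPU-heavy workloads separately; a probe point that tracks one but not the other is a good candidate for that block's voltage plane.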

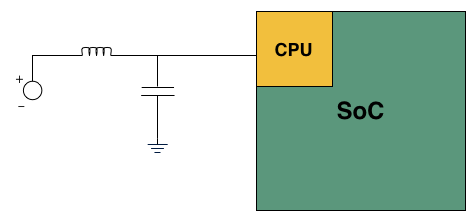

A basic LC filter

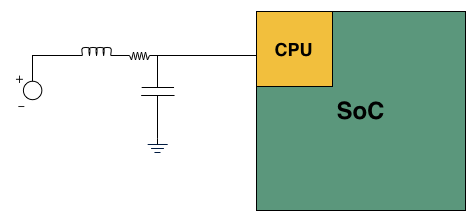

What usually happens is you'll find a standard LC filter (inductor + capacitor) supplying power to a block on the SoC. Once the right LC filter has been identified, all you need to do is lift the inductor, insert a very small resistor (2 - 20 mΩ) and measure the voltage drop across the resistor. With voltage and resistance values known, you can determine current and power. Using good external instruments (NI USB-6289) you can plot power over time and now get a good idea of the power consumption of individual IP blocks within an SoC.

Basic LC filter modified with an inline resistor
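The arithmetic behind the inline resistor is simple enough to sketch in Python. Ohm's law gives the current through the resistor (I = V_shunt / R), and multiplying by the rail voltage gives the power delivered to the block. The sketch assumes the rail voltage is sampled alongside the drop across the resistor; the `Sample` type, the function names and the sample values below are hypothetical and do not come from Intel's setup.

```python
from dataclasses import dataclass


@dataclass
class Sample:
    t: float        # timestamp in seconds
    v_shunt: float  # voltage drop across the inline resistor, in volts
    v_rail: float   # rail voltage on the SoC side of the resistor, in volts


def power_trace(samples, r_shunt_ohm):
    """Return (time, watts) pairs using I = V_shunt / R and P = V_rail * I."""
    if r_shunt_ohm <= 0:
        raise ValueError("shunt resistance must be positive")
    return [(s.t, s.v_rail * (s.v_shunt / r_shunt_ohm)) for s in samples]


def average_power(trace):
    """Time-weighted average power over the trace (trapezoidal rule)."""
    if len(trace) < 2:
        raise ValueError("need at least two samples")
    energy_joules = 0.0
    for (t0, p0), (t1, p1) in zip(trace, trace[1:]):
        energy_joules += 0.5 * (p0 + p1) * (t1 - t0)
    return energy_joules / (trace[-1][0] - trace[0][0])


# Hypothetical readings across a 10 mOhm resistor on a 1.0 V rail.
samples = [
    Sample(t=0.0, v_shunt=0.005, v_rail=1.0),
    Sample(t=1.0, v_shunt=0.010, v_rail=1.0),
    Sample(t=2.0, v_shunt=0.005, v_rail=1.0),
]
trace = power_trace(samples, r_shunt_ohm=0.010)
print(trace)
print(average_power(trace))
```

The resistor is kept in the milliohm range so the drop across it stays small relative to the rail voltage; the downside is that the signal you measure is only a few millivolts, which is why a good data acquisition instrument matters.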

The previous article focused on an admittedly not too interesting comparison: Intel's Atom Z2760 (Clover Trail) versus NVIDIA's Tegra 3. After much pleading, Intel returned with two more tablets: a Dell XPS 10 using Qualcomm's APQ8060A SoC (dual-core 28nm Krait) and a Nexus 10 using Samsung's Exynos 5 Dual (dual-core 32nm Cortex A15). What was a walk in the park for Atom all of a sudden became much more challenging. Both of these SoCs are built on very modern, low power manufacturing processes, and Intel no longer has a performance advantage compared to Exynos 5.

Just like last time, I ensured all displays were calibrated to our usual 200 nits setting and ensured the software and configurations were as close to equal as possible. Both tablets were purchased at retail by Intel, but I verified their performance against our own samples/data and noticed no meaningful deviation. Since I don't have a Dell XPS 10 of my own, I compared performance to the Samsung ATIV Tab and confirmed that things were at least performing as they should.

We'll start with the Qualcomm based Dell XPS 10...

140 Comments


powerarmour - Friday, January 4, 2013 - link

"Intel doesn't want to create a chip that cuts into it's very profitable mainstream CPU market."

Indeed, they've left Cedar Trail to fester and die by totally withdrawing driver support :-

http://communities.intel.com/message/175069#175069

Quite a lot of desktop Atom hardware is still on the market, and they are trying their best to kill it off.

djgandy - Friday, January 4, 2013 - link

All that says to me is that they don't care about Win7 i.e. non tablets.

Krysto - Friday, January 4, 2013 - link

Cortex A15 coupled with Cortex A7 will use half the power on average. Also, I told you before that Mali T604 is more efficient than PowerVR in the latest iPads, and that's why Apple managed to use a more powerful GPU - because it's more inefficient. They sacrificed energy efficiency for performance, because they can use a very large battery in the iPad.

I saw you're trying hard to "prove something" about Intel lately, and I'm not sure why. Is Intel the biggest "client" when they pay you for reviews here? Is that why you're trying so hard to make them look good?

You're also always making unebelivable claims about what Intel chips will do in the future. Even if they get Haswell to 8W (is that for CPU only? The whole SoC? Is it peak TDP? Will it still need fans?), you do realize a Haswell chip costs as much as the whole BOM of an iPhone 5 right? Haswell chips will never arrive in smartphones, or in tablets that are competitive on price.

Tetracycloide - Friday, January 4, 2013 - link

You're always making "unebelivable" claims about what corruption does here. Do you have anything to back up your allegations to a normal person who would view any excitement about future possibilities as some kind of damning evidence that the writer must be on the take? It's like you think everyone that doesn't share your opinion of Intel is paid to have that opinion or something.

trivik12 - Friday, January 4, 2013 - link

Haswell ULV is a SOC. So the platform TDP was < 8W. Like it or not, Intel has the best process technology and ultimately they will produce a platform which is faster and lower TDP.

That being said, ARM will dominate the smartphone market and even the majority of low end laptops. I see Intel existing only in mid to higher end smartphones plus tablets > $500.

I am personally waiting for broadwell based tablet which should hopefully cut power even more in 14nm process.

djgandy - Friday, January 4, 2013 - link

You'd hope two brand new technologies would be better than two 3/4 year old ones wouldn't you. Clearly you are blinded by your love for ARM in the same way many here are blinded by love for Nvidia and actually consider Tegra 3 a competitive SOC.

I don't think many people would be astonished to find that the T604, an architecture only released a few months back, is more efficient than PowerVR Series 5, dating back to 2008.

Why are people so shocked to find that Intel can make a low power chip? It's not some kind of magic, it is a business goal. Power is a trade off just like performance. When you have desktop systems the trade off for using more power is seen as a pro for a 40-50% performance gain.

mrdude - Friday, January 4, 2013 - link

He's spot on about the pricing issue, though. Intel isn't going to start selling Haswell SoCs for $30, and if they do then they'll quickly go out of business. It's a completely different business model that they're trying to compete with. The Tegra 3 costs $15-$25 (and way closer to that $15 to date) while Intel charges $70+ for their CPU+GPU, and that's before you get to the chipset, WiFi and the rest. A low-TDP Haswell chip might offer great performance and fit in the same form factor (tablets), but if the tablet ends up costing $800+ and isn't Apple, well... nobody cares.

It's not just a matter of performance but performance-per-dollar and design wins. Intel can't afford to drop prices to competitive levels on their Core products unless they can supplement it with very high volume. For very high volume you need to sell a lot of competitive SoCs that can do it all at a very reasonable price. The Tegra 3 was a big success not because it was an amazing performer, but because it offered decent performance for a very low price tag. Can Intel afford to do that with their cash cow business slipping? Remember that x86 is seeing drops in sales and PCs aren't exactly doing very well right now. Intel already had to drop their margins and they've let fabs run idle and sent home engineers at their 14nm fab in Ireland, all the while processor prices haven't decreased even a tiny bit. Those aren't signs of a company that's willing to compete on price.

Homeles - Friday, January 4, 2013 - link

I'm more than willing to pay for the performance premium.

mrdude - Friday, January 4, 2013 - link

While you may be willing to fork over that much cash, most people won't. If you don't believe me, check out the recent sales figures of Win8 devices. The Win8 tablets (excluding Surface RT) don't even make up 1% of all Win8 products sold. That's not poor, that's absolutely horrible. On the other side the cheap Android tablets and smartphones have been gaining significant market share and outselling even the iPhone and iPads. Price matters. A lot. Furthermore, device makers/OEMs are more likely to go with the cheaper SoC if the experience is roughly equal. Remember that a majority of tablet and smartphone buyers don't browse Anandtech for benchmarks but buy based on things like display quality or whether it's got a nice look (or brand name, in the case of Apple). If an OEM can take that $60 saved and put it towards a better display, a larger battery or more NAND then that means a lot more in differentiating yourself from the competition than being 10-15% faster in X benchmark.

People forget that these are SoCs and not CPUs. They also forget that these aren't DIY computers but tablets. Think about how much people complain when they see a $900 Ultrabook with a crappy 1366x768 TN display but those same people don't utter a word about how Intel's ULVs cost the same as their 35W parts. If the Intel chip was cheaper you'd probably have a better display or a cheaper price tag. This same notion extends to tablets and smartphones.

Qualcomm is in a place where they can offer something everybody wants; their LTE is second to none. What does Intel have to offer to warrant Intel prices? Currently Intel's chipsets cost as much as an entire Tegra 3 SoC. x86 PC/server and ARM SoCs are in a completely different universe when it comes to pricing, and unless you've got something special (see Qualcomm or Apple) or you're making and selling the device (Samsung), then you're going to have a very rough time of it.

jeffkro - Saturday, January 5, 2013 - link

I paid $15 to "upgrade" my laptop and have since gone back to win 7. A lot of people simply don't want win 8 at any cost.