The Bulldozer Aftermath: Delving Even Deeper

by Johan De Gelas on May 30, 2012 1:15 AM EST

It has been months since AMD's Bulldozer architecture surprised the hardware enthusiast community with performance all over the place. The opinions vary wildly from “server benchmarks are here, and they're a catastrophe” to “Best Server Processor of 2011”. The least you can say is that the idiosyncrasies of AMD's latest CPU architecture have stirred up a lot of dust.

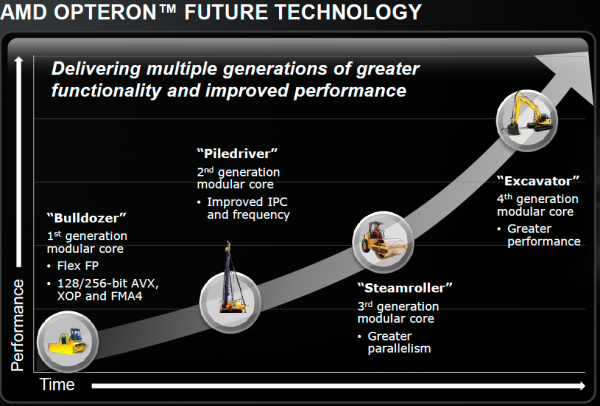

Now that the dust has settled, Bulldozer chips account for more than half of Opteron shipments and revenues. Since AMD's Financial Analyst Day (February 2, 2012), we have new code names: the improved Bulldozer architecture "Piledriver" will power the "Abu Dhabi" chip, a replacement for the current top server chip "Interlagos". AMD is clearly committed to the new "Bulldozer" direction: fitting as many cores as possible into a certain power envelope to improve thread throughput, while trying to "hold the line" on single-threaded performance.

In theory, the new 16-core Interlagos should have offered somewhere around a 33% boost in most highly-threaded applications. The reality is unfortunately not that rosy: in many highly-threaded server applications such as OLAP databases and virtualization, the new Opteron 6200 fails to impress and is only a few percent faster than its older brother, the 12-core Magny-Cours. There are even times when the older Opteron is faster.

Some, including sources inside AMD, have blamed GlobalFoundries for not delivering higher clocked SKUs. Sure, the clock speed targets for Interlagos were probably closer to 3GHz than to 2.3GHz. But that does not explain why the extra integer cores do not deliver. We were promised up to 50% higher performance thanks to the 33% extra cores, but we got 20% at the most.

The combination of low single-threaded performance, the failure to really outperform the previous generation in highly-threaded applications, the relatively high power consumption at full load, and the fact that the CPU is designed for high clock speeds gives a lot of people a certain sense of déjà vu: is this AMD's version of the Pentium 4?

One of our readers, "Iketh", spoke up and voiced the opinion of many of our readers:

"Unfortunately, the thought still in the back of my mind while reading was why did AMD reinvent the Pentium 4? I just don't get it."

Another reader nicknamed "Clagmaster" commented:

"A core this complex in my opinion has not been optimized to its fullest potential. Expect better performance when AMD introduces later steppings of this core with regard to power consumption and higher clock frequencies."

Although there have already been quite a few attempts to understand what Bulldozer is all about, we cannot help but feel that many questions are still unanswered. Since this architecture is the foundation of AMD's server, workstation, and notebook future (Trinity is based on the improved Bulldozer core with the codename "Piledriver"), it is interesting enough to dig a little deeper. Did AMD take a wrong turn with this architecture? And if not, can the first implementation "Bulldozer" be fixed relatively easily?

We decided to delve deeper into the SAP and SPEC CPU2006 results, as well as profiling our own benchmarks. Using the profiling data and correlating it with what we know about AMD's Bulldozer and Intel's Sandy Bridge, we attempt to solve the puzzle.

84 Comments


shodanshok - Thursday, May 31, 2012 - link

Mmm... the link was malformed in the previous message. The correct one is: http://www.ilsistemista.net/index.php/hardware-ana...

Thanks.

name99 - Thursday, May 31, 2012 - link

"First of all, in most applications, an OOO processor can easily hide the 4-cycle latency of an L1 cache."

I know you guys are interested in the question --- why does Bulldozer frequently suck? --- rather than the question --- why is Sandy Bridge so much better? --- but it is this latter question that interests me the most.

What strikes me, on going through all this data (including information that is NOT in the article, and on my experience back in the day when I was writing assembly and counting cycles) is that the "eventual cost" of misses that go all the way to RAM is not covered in the article, and I suspect this is a large part of the issue.

What I mean here is the following: consider an extremely simple model --- an L1 hit takes 1 cycle, an L1 miss that goes to RAM takes 100 cycles. Then, per 100 memory accesses, a 97% L1 hit rate takes a total of basically 400 cycles; a 99% L1 hit rate takes 200 cycles --- apparently minor differences have a huge effect! But that's not the point I want to focus on.
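The arithmetic in this simple model can be made explicit with a minimal Python sketch. It assumes the totals are counted per 100 memory accesses, which is what makes the quoted figures come out, and the function name `total_cycles` is purely illustrative.

```python
def total_cycles(hit_rate_percent, accesses=100, hit_cost=1, miss_cost=100):
    """Total cycles for `accesses` loads in the two-level toy model.

    Assumption: every L1 miss goes straight to RAM (no L2/L3 in between)
    and nothing overlaps, so costs simply add up.
    """
    hits = accesses * hit_rate_percent // 100
    misses = accesses - hits
    return hits * hit_cost + misses * miss_cost


print(total_cycles(97))  # 97*1 + 3*100 = 397, i.e. "basically 400"
print(total_cycles(99))  # 99*1 + 1*100 = 199, i.e. "basically 200"
```

Two percentage points of hit rate roughly halve the total, because the misses dominate the sum.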

Let's make the model more complicated. First let's make L1 hit cost more realistic --- 4 cycles. As the article says, this is, for the most part, trivially hidden by the OoO engine. But then why can't the OoO engine also hide all or most of the cost of all those cycles to RAM?

And that, I think, is where the Intel advantage is. They do such a good job with their OoO engine.

At a gross level, OoO engines all look kinda the same --- look at a PPC 750 and an IB and, at a superficial level, they look similar. But firstly the IB has just so much larger buffers (what, 168 or so, compared to the 750's what, 6 or so) that, of course, it has a vastly larger stock of instructions it can keep chewing through as it waits for the RAM.

But, you say, AMD also has large buffers now. Yes, but it's not only the raw buffers. Whenever you start looking at these chips, you discover all sorts of weird limitations on what they can actually do to use all those buffers. I've no idea what the current exact limitations are, but the sort of thing you would have in the past is that maybe all the buffers are flushed on an interrupt or system call; or there'd be strange conditions that could occur where, although in theory the integer engine could keep going past a blocking FP instruction, it turned out to be easier to prevent some race condition by freezing the integer engine under these conditions.

Secondly, while you're executing other instructions, waiting on your RAM, you may well execute a few more load/store instructions that again miss in RAM. How well do you handle these? Can you just keep firing out these load/stores, or do you block at the second (or third, or fourth)? Frequently these load/stores refer to the same cache line that's already in play from the first L1 miss, and how do you handle that? The truly dumb thing, of course, is to send out ANOTHER memory request. Smarter is to suppress that, but you're still using load-store entries in the main "miss to RAM" data structures. Smarter still is to be aware that this line will be coming eventually, and use auxiliary data structures to hold info about this load/store.
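The difference between sending out another memory request and suppressing it can be illustrated with a toy model. This is only a sketch under stated assumptions: a 64-byte cache line, and a hypothetical `count_memory_requests` helper that counts the requests going out to RAM for a burst of loads that all miss L1 while the first miss is still outstanding. It says nothing about how Bulldozer or Sandy Bridge actually track misses.

```python
LINE_SIZE = 64  # bytes per cache line (assumed; a common x86 line size)


def count_memory_requests(addresses, coalesce):
    """Count RAM requests for loads that all miss L1 while in flight.

    coalesce=False: the "truly dumb" policy, one request per load.
    coalesce=True:  a load to a line that is already outstanding is
                    merged into the pending request for that line.
    """
    outstanding = set()  # cache lines with a request already in flight
    requests = 0
    for addr in addresses:
        line = addr // LINE_SIZE
        if coalesce and line in outstanding:
            continue  # piggyback on the pending fill, no new request
        outstanding.add(line)
        requests += 1
    return requests


# Four loads: three fall in the line starting at 0x1000, one at 0x2000.
loads = [0x1000, 0x1008, 0x1030, 0x2000]
print(count_memory_requests(loads, coalesce=False))  # 4
print(count_memory_requests(loads, coalesce=True))   # 2
```

The model deliberately ignores the third option from the comment, where merged loads are parked in auxiliary structures instead of occupying entries in the main miss-tracking structure.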

It's these sorts of technical details, which don't appear in the gross specs (and sometimes not even in the detailed CPU descriptions) that make so much difference. They are obviously astonishingly difficult to get right. Intel has the manpower to worry about every one of them, AMD does not.

Point is --- if I had to look for a single difference between the two, that's what I'd be looking at --- how much time is REALLY wasted waiting on DRAM in SB vs on Bulldozer.

misiu_mp - Monday, June 11, 2012 - link

It is the compiler's and Out-Of-Order engine's job to order loads, stores and other instructions to minimize the total execution time. So making sure no stupid and unnecessary loads are being committed is what the OOO mechanism normally does.

There is no reason to suspect it is fundamentally broken in Bulldozer.

IceDread - Friday, June 1, 2012 - link

It really is simple: AMD made a huge mistake. The product is a bust, simple as that.

The next generation or the generations after that might be a whole different matter, but guess what? No one cares. It won't help the poor souls that bought this busted product.

It's annoying that AMD could not do better, because now Intel reigns supreme and competes with itself.

mikato - Friday, June 1, 2012 - link

I know I shouldn't feed the trolls but... You say next generations might be a whole different matter - well what do you think is the point of learning about the Bulldozer architecture? The next generations are based on it.

IceDread - Monday, June 4, 2012 - link

What is the point of releasing a product that does not outperform its predecessor? Hope that people will purchase the product anyway and learn it?

Which companies would be interested in this, how many? Why would they invest money into this?

_vor_ - Saturday, June 2, 2012 - link

Yes. I too would be interested in exactly what aspects you think Bulldozer failed and your design ideas and approach on how you would fix them. Do tell.

wiyosaya - Friday, June 1, 2012 - link

Personally, I think it is always nice to see in-depth articles like this that explain the details of the structure of a processor. To me, it sounds like AMD has a foundation that, with a few well-directed tweaks, may put them in contention with Intel again in the CPU arena. Though AMD has said that they are through competing with Intel, I truly hope this is not the case. Perhaps this is a marketing tactic to remove focus from themselves after the enthusiast arena panned BD and its siblings.

I've built my systems with AMD for a long time; however, this time I went with Intel because I thought they had the better value. Perhaps the future will bring me back to AMD; however, I cannot see doing so right now simply because Intel has become the "value" line over AMD.

With an i7-3820 in my most recent rig, I think I picked the SB-E value processor. I run more than games, and some of what I run takes advantage of quad-channel memory.

In any event, I'm set for a while. Perhaps AMD will once again produce a superior product by the time I am ready for my next build.

jamyryals - Friday, June 1, 2012 - link

What a great read, thanks!

SocketF - Friday, June 1, 2012 - link

Hi Johan,

thanks for the test, it is great.

However, on page 9 you have some trouble with percentage calculations. You wrote:

quote:

-------------------

We get a 65% speed up (2x 0.71 vs 0.86), which is somewhat lower than the 80% predicted by the AMD slides discussing CMT.

-------------------

These numbers are totally correct and within AMD's predictions. AMD promised 80% performance for the CMT-Bulldozer module, compared to a hypothetical Bulldozer CMP core, i.e. 2 (single) cores.

So you have to double your single-thread results, to get the score of 2 (single) Bulldozer cores (2 CMP cores). That gives: 0.86 x 2 = 1.72

Now compare that to the real performance of 2 CMT cores of one module, which is 0.71 x 2 = 1.42

1.42 is 82.6% of 1.72, which is better than AMD's 80% claim. Thus their claim holds. Everything's fine, don't worry.
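The two ways of reading the same pair of numbers can be checked with a few lines of Python. This is just a sketch of the arithmetic quoted above; the variable names are illustrative, and the only inputs are the 0.86 and 0.71 scores from the article.

```python
single_thread_score = 0.86   # one thread running alone on a module
per_thread_cmt_score = 0.71  # each of two threads sharing one module

module_throughput = 2 * per_thread_cmt_score  # 1.42, one CMT module
two_cmp_cores = 2 * single_thread_score       # 1.72, hypothetical CMP pair

# Reading 1 (the article): two threads versus one thread on the module.
speedup_vs_one_thread = module_throughput / single_thread_score - 1

# Reading 2 (AMD's slide): one CMT module versus two full CMP cores.
cmt_vs_cmp = module_throughput / two_cmp_cores

print(f"{speedup_vs_one_thread:.0%}")  # 65%
print(f"{cmt_vs_cmp:.1%}")             # 82.6%
```

Both figures describe the same measurement: a 65% gain over a single thread is the same thing as reaching about 83% of two independent cores, since 1.65 / 2 is roughly 0.83.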

Source of AMD's claim is e.g. here:

http://techreport.com/r.x/bulldozer-uarch/bulldoze...

(sorry, didn't find it on anandtech)

Please update your article accordingly.

Oh and one last question: why did you add up the SMT scores but not the CMT scores? An IPC of "two threads" seems odd; this is just weird. Furthermore, it is somehow useless, because you cannot compare it directly with the CMT scores. A diagram should visualize the results, not force the reader to do some re-calculations.

Thanks again

Erik