Apple's M1 Pro, M1 Max SoCs Investigated: New Performance and Efficiency Heights

by Andrei Frumusanu on October 25, 2021 9:00 AM EST

Posted in: Laptops, Apple, MacBook, Apple M1 Pro, Apple M1 Max

CPU ST Performance: Not Much Change from M1

Apple didn’t talk much about the core performance of the new M1 Pro and Max, likely because it hasn’t changed much compared to the M1. We’re still seeing the same Firestorm performance cores, and they’re still clocked at 3.23GHz. The new chips have more cache and more DRAM bandwidth, but in ST scenarios we’re not expecting large differences.

When we first tested the M1 last year, we compiled SPEC with Apple’s Xcode compiler, and we lacked a Fortran compiler. We’ve since moved to a vanilla LLVM11 toolchain and make use of GFortran (GCC11) for the numbers published here, allowing for more apples-to-apples comparisons. The figures don’t change much for the C/C++ workloads, but we get a more complete set of figures for the suite thanks to the Fortran workloads. We keep flags very simple at just “-Ofast” and nothing else.

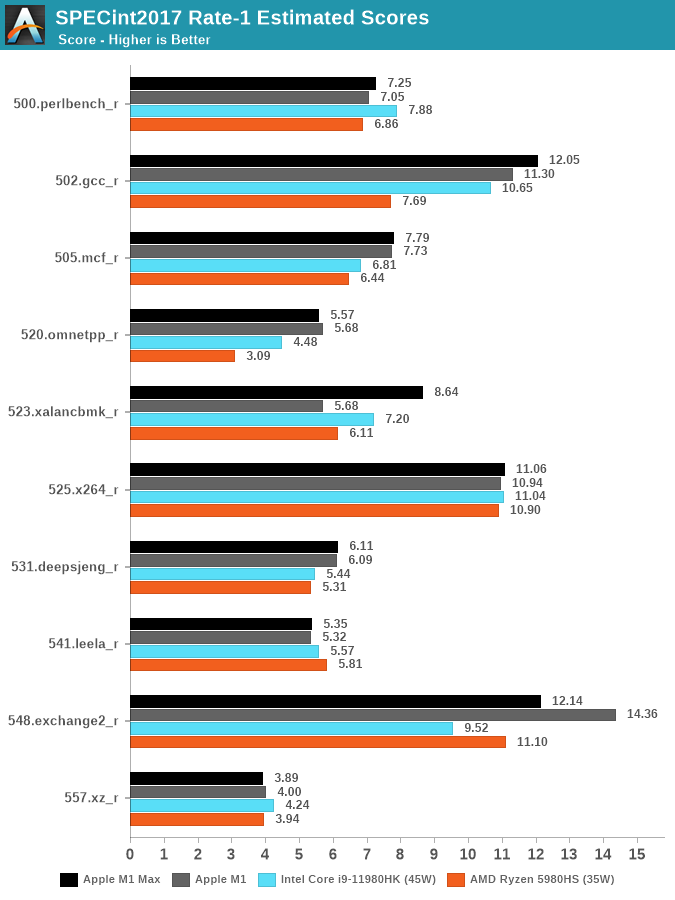

In SPECint2017, the differences to the M1 are small. 523.xalancbmk showcases a large performance improvement; however, I don’t think this is due to changes on the chip, but rather to a change in Apple’s memory allocator in macOS 12. Unfortunately, we no longer have an M1 device available to us, so the M1 figures are still the older ones from earlier in the year on macOS 11.

Against the competition, the M1 Max either has a significant performance lead, or is able to at least reach parity with the best AMD and Intel have to offer. The chip however doesn’t change the landscape all too much.

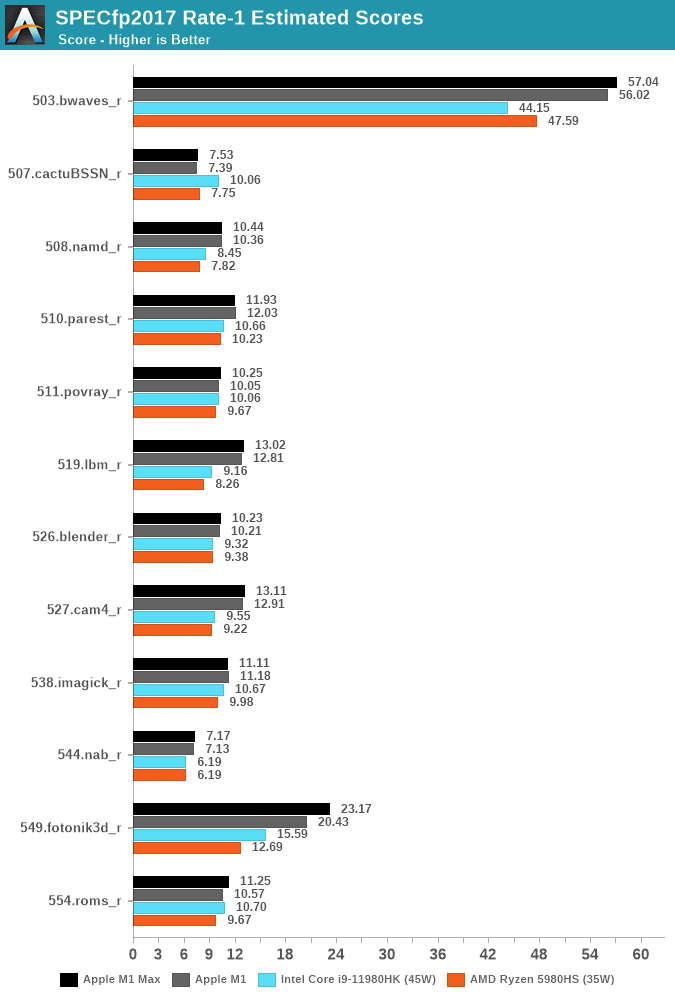

SPECfp2017 also doesn’t change dramatically. 549.fotonik3d does score quite a bit better than on the M1, which could be tied to the greater available DRAM bandwidth, as this workload puts extreme stress on the memory subsystem. Otherwise the scores change little compared to the M1, which is still on average well ahead of the laptop competition.

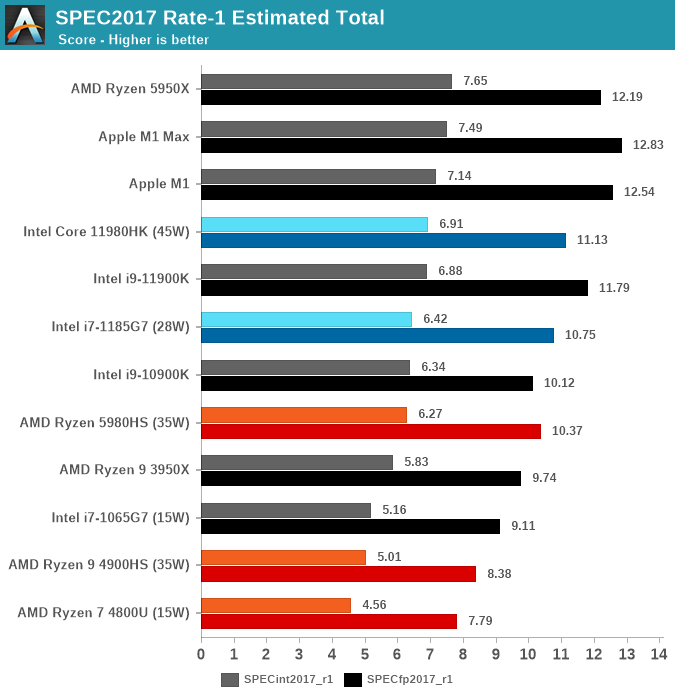

The M1 Max lands as the top performing laptop chip in SPECint2017, just shy of being the best CPU overall, a title that still goes to the 5950X, and it is able to take and maintain the crown from the M1 in the FP suite.
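For readers wondering how a single workload such as 523.xalancbmk or 549.fotonik3d feeds into a suite-level score: SPEC CPU composites are geometric means of per-workload ratios, so one large gain moves the total only modestly. The sketch below illustrates that aggregation; the ratios in it are hypothetical placeholders, not measurements from this review, and `spec_composite` is a name chosen for illustration.

```python
import math

def spec_composite(ratios):
    """Geometric mean of per-workload ratios (reference time / measured time)."""
    if not ratios:
        raise ValueError("need at least one ratio")
    return math.exp(sum(math.log(r) for r in ratios) / len(ratios))

# Hypothetical per-workload ratios, NOT figures from this review.
baseline = [6.0, 7.5, 5.0, 8.0]
improved = [6.0, 7.5, 10.0, 8.0]  # one workload doubles, the rest are unchanged

# Relative change in the composite when a single workload out of four doubles.
uplift = spec_composite(improved) / spec_composite(baseline)
print(f"composite uplift: {uplift:.3f}x")
```

With four workloads, doubling one of them raises the composite by a factor of 2^(1/4), roughly 19%, which is why a single outlier does not reshape the overall ranking.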

Overall, the new M1 Max doesn’t deliver any large surprises on single-threaded performance metrics, which is also something we didn’t expect the chip to achieve.

493 Comments


celeste_P - Tuesday, October 26, 2021

Does anyone know where I can find the policy on translating/reprinting the article? Does AnandTech allow such behavior? What policies does one need to follow?

This article is quite interesting and I want to translate/publish it on a Chinese website to share with a broader range of people.

colinstalter - Wednesday, October 27, 2021

Why not just share the URL on the Chinese page? Do people in China not have translator functions built into their web browsers like Chrome does?

celeste_P - Wednesday, October 27, 2021

Of course they do XD

But as you can imagine, the quality of machine translation won't be that great, especially considering all these domain specific terms within this article.

ABR - Tuesday, October 26, 2021

An excellent review.

ajmas - Tuesday, October 26, 2021

Given the number of games already available and running on iOS, I wonder how much work would be involved in making them available on macOS?

As for effective performance, I am eagerly waiting to see what the real world tests reveal, since specs only say so much.

mandirabl - Wednesday, October 27, 2021

As a developer, technically you don't have to do much, just re-compile the game and check another box (for Mac), basically.

The problem is: iOS games are mostly touch-focused, whereas macOS is mouse-first. So they have to check whether that translates without changing anything. If it does, it's a matter of a couple of minutes. If it doesn't translate well ... they have a choice between releasing it anyway and blocking access on macOS. Yes, developers have to actively decide against releasing their app/game for macOS - if they don't do anything in that regard, the app/game simply shows up in an App Store search on a Mac.

Kevin45 - Tuesday, October 26, 2021

Apple's goal is very simple: If you are going to provide SW tools for Pro users of the MacOS platform, you write to Metal - period.

It IS the most superior way to take advantage of what Apple has laid out to developers and Apple's Pro users absolutely want the HW tools they buy to be max'd out by the developers.

Apple has taken an approach Intel and AMD cannot. Unified memory design aside, Apple has looked at its creative markets and developed sub-cores, which for this Creative focus segment Apple markets as its "Media Engine", with hardware h.264 and hardware ProRes compute that just crush these formats and codecs.

The argument "Yah, but the CPU and GPU cores aren't the most powerful that one can buy." is still. They don't need to be because they have dedicated cores to where the power needs to be. Sure, in a Wintel world, or Linux space, more powerful GPU and CPU cores is all they've got. So when talking those worlds indeed that's the correct argument. Not when talking Apple HW with Apple silicon.

Intel has fought nVIDIA to have their beefier and beefier cores do heavy lifting, while nVIDIA wants the GPU to be the most important play in the mix. Apple has broken out their SoC into many sub-sets to meet the high compute needs of its user base.

Now more than ever, developers that have dragged their feet need to get onboard. As companies continue to show off - such as Apple with FCP, Motion and Compressor optimized apps for the hardware, even DaVinci (niche player but powerful), they put pressure on other players such as sloth-boy Adobe, to get going and truly write for Apple's tools that take advantage of such a well thought out HW + SW combo.

richardnpaul - Tuesday, October 26, 2021

The article comes across a bit fanboy. (yes, yes I know that this is usually the case here but I just wanted to say it out loud again). See below for why.

You have covered in depth things like how the increased L3 design between Zen2 and 3 can cause big jumps in performance, and what was missing here was discussion of how the 24/48MB cache at the memory interface impacts performance, especially when using the GPU (we saw AMD's designs doing exactly this last year to improve performance by reducing the impact of calling out to the slow GDDR6 RAM.)

The GPU is nothing special. 10Tflops at 1.3GHz puts it around the same class as a Vega64, a 14nm design, which similarly used RAM packaged on an interposer with the GPU (being 14nm it was big, 5nm makes it much more reasonable). With the buffer cache I'd expect it might perform better, also the CPUs will bump up performance (just look at how much more FPS you get with Zen3 over Zen2 and with Zen3 with vcache it'll be another 15% more on top from exactly the same GPU hardware and that's with the CPU and GPU having to talk over PCI-E).
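The "10Tflops at 1.3GHz" comparison above can be checked with the usual peak-throughput arithmetic: ALU count times two FLOPs per clock (one fused multiply-add) times clock speed. The sketch below is only an illustration. The ALU counts (32 GPU cores of 128 ALUs each for the M1 Max, 4096 stream processors for Vega 64) and the Vega 64 boost clock of about 1.546GHz are assumptions that do not appear in this comment, and peak FP32 figures say little about delivered game performance.

```python
def peak_fp32_tflops(alus, clock_ghz, flops_per_alu_per_clock=2):
    """Theoretical peak FP32 throughput; an FMA counts as 2 FLOPs per clock."""
    return alus * flops_per_alu_per_clock * clock_ghz / 1000.0

# Assumed configurations (not stated in the comment above):
m1_max = peak_fp32_tflops(alus=32 * 128, clock_ghz=1.3)   # 32 cores x 128 ALUs
vega64 = peak_fp32_tflops(alus=4096, clock_ghz=1.546)     # assumed boost clock

print(f"M1 Max  ~{m1_max:.1f} TFLOPS")
print(f"Vega 64 ~{vega64:.1f} TFLOPS")
```

Under these assumptions both parts land in the same 10 to 13 TFLOPS range, which is the sense in which the comment places them in the same class.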

Also, Apple have made themselves second class gaming citizens with their decision to build Metal and enforce it as the only API (I may be mistaken here but as far as I'm aware the whole reason for MoltenVK is because you have to use Metal on MacOS and developers have introduced this Vulkan to Metal shim to ease porting). Also, as I understand it, you can't connect external dGPUs via Thunderbolt to provide comparisons. Apple's vendor lock-in at its worst (have I mentioned that Apple are their own worst enemy a lot of the time?)

As such the gaming performance doesn't surprise me: this is a technically much slower and inferior GPU to AMD's and nVIDIA's current designs, which are on older processes (7nm and 8nm respectively). The cost is that whilst these are faster, they're larger and more power hungry, though a die shrink would bring something like an AMD 6600 based chip into the same ballpark.

Also on the 512bit memory interface I'd probably look at it more like 384bit plus 128bit, which is the GPU plus the usual CPU interfaces. The CPU is always going to contend for some of that 512bit interface, so you're never going to see 512bit for the GPU. On the other hand, you get whatever the CPU doesn't use for free, which is a great bonus of this design, and if the CPU needs more than a 128bit interface can manage it has access to that too if the GPU isn't heavily loaded on the memory interface.
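To put rough numbers on that 384bit plus 128bit view: peak bandwidth is bus width in bytes times the transfer rate. The sketch below assumes LPDDR5 at 6400 MT/s, a figure that is not stated in this comment, and it treats the split purely as a mental model; nothing here implies the hardware partitions the interface this way.

```python
def peak_bandwidth_gbs(bus_width_bits, transfer_rate_mts):
    """Theoretical peak bandwidth in GB/s: bytes per transfer x mega-transfers/s."""
    return bus_width_bits / 8 * transfer_rate_mts / 1000.0

LPDDR5_MTS = 6400  # assumed transfer rate, not taken from the comment above

full_bus = peak_bandwidth_gbs(512, LPDDR5_MTS)
gpu_share = peak_bandwidth_gbs(384, LPDDR5_MTS)  # the commenter's notional GPU slice
cpu_share = peak_bandwidth_gbs(128, LPDDR5_MTS)  # the commenter's notional CPU slice

print(f"512-bit: {full_bus:.1f} GB/s")
print(f"384-bit: {gpu_share:.1f} GB/s, 128-bit: {cpu_share:.1f} GB/s")
```

Because the bus is shared, whichever side is idle leaves its notional slice available to the other, which is the flexibility the comment describes.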

I kind of expect you guys to cover all this though in the article, not have me railing at the lack of it in the comments section.

richardnpaul - Tuesday, October 26, 2021

Oh and you failed to ever mention that the trade-off of the design is that you need to buy all the RAM you'll ever need up front because it's soldered to the SoC package. The reason that we don't normally see such designs is that the trade-off is potentially expensive unsaleable parts. The cost of these laptops is way above the usual and whilst they have some really nice tech this is one of the other downsides of this design (and the 5nm node and the amount of silicon).

OreoCookie - Tuesday, October 26, 2021

Or perhaps Anandtech gave it a glowing review simply because the M1 Max is fast and energy efficient at the same time? In memory intensive benchmarks it was 2-5 x faster than the x86 competition while being more energy efficient. What more do you want?

And the article *was* including a Zen 3 mobile part in its comparison and the M1 Max was faster while consuming less energy. Since the V-Cache version of Zen 3 hasn't been released yet, there are no benchmarks for Anandtech to release as they either haven't been run yet or are under embargo.

Lastly, this article is about some of the low-level capabilities of the hardware, not vendor lock-in or whether Metal is better or worse than Vulkan. They did not even test the ML accelerator or hardware codec bits (which is completely fair).