Intel’s Integrated Graphics Mini-Review: Is Rocket Lake Core 11th Gen Competitive?

by Dr. Ian Cutress on May 7, 2021 10:20 AM EST

In the last few months we have tested the latest x86 integrated graphics options on the desktop from AMD, with some surprising results about how performant a platform with integrated graphics can be. In this review, we’re doing a similar test but with Intel’s latest Rocket Lake Core 11th Gen processors. These processors feature Intel’s Xe-LP graphics, which were touted as ‘next-generation’ when they launched with Intel’s mobile-focused Tiger Lake platform. However, the version implemented on Rocket Lake has fewer graphics units, slower memory, but a nice healthy power budget to maximize. Lo, Intel set forth for battle.

When a CPU meets GPU

Intel initially started integrating graphics onto its systems in 1999, by pairing the chipset with some form of video output. In 2010, the company moved from chipset graphics to on-board processor graphics, enabling the graphics hardware to take advantage of a much faster bandwidth to main memory as well as a much lower latency. Intel’s consumer processors now feature integrated graphics as the default configuration, with Intel at times dedicating more of the processor design to graphics than to actual cores.

Intel CPUs: IGP as a % of Die Area

| AnandTech | Example | Launched | Cores | IGP | Size | IGP as Die Area % |
|---|---|---|---|---|---|---|
| Sandy Bridge | i7-2600K | Jan 2011 | 4 | Gen6 | GT2 | 11% |
| Ivy Bridge | i7-3770K | April 2012 | 4 | Gen7 | GT2 | 29% |
| Haswell | i7-4770K | June 2013 | 4 | Gen7.5 | GT2 | 29% |
| Broadwell | i7-5775C | June 2015 | 4 | Gen8 | GT3e | 48% |
| Skylake | i7-6700K | Aug 2015 | 4 | Gen9 | GT2 | 36% |
| Kaby Lake | i7-7700K | Jan 2017 | 4 | Gen9 | GT2 | 36% |
| Coffee Lake | i7-8700K | Sept 2017 | 6 | Gen9 | GT2 | 30% |
| Coffee Lake | i9-9900K | Oct 2018 | 8 | Gen9 | GT2 | 26% |
| Comet Lake | i9-10900K | April 2020 | 10 | Gen9 | 24 EUs | 22% |
| Rocket Lake | i9-11900K | March 2021 | 8 | Xe-LP | 32 EUs | 21% |
| Mobile CPUs | | | | | | |
| Ice Lake-U | i7-1065G7 | Aug 2019 | 4 | Gen11 | 64 EUs | 36% |
| Tiger Lake-U | i7-1185G7 | Sept 2020 | 4 | Xe-LP | 96 EUs | 32% |

All the way from Intel’s first integrated graphics to its 2020 product line, Intel was reliant on its ‘Gen’ design. We saw a number of iterations over the years, with updates to the function and processing ratios, with Gen11 featuring heavily in Intel’s first production 10nm processor, Ice Lake.

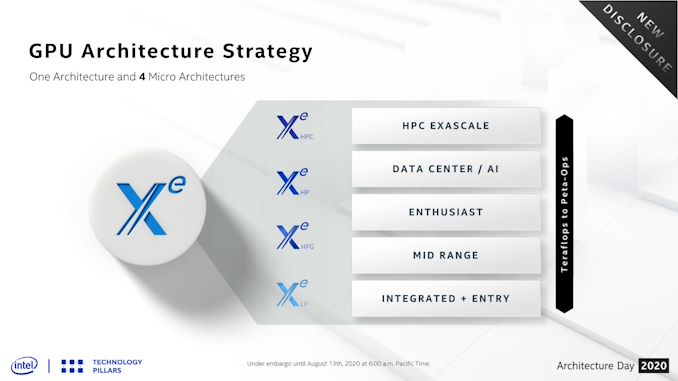

The latest graphics design however is different. No longer called ‘Gen’, Intel upcycled its design with additional compute, more features, and an extended effort for the design to scale from mobile compute all the way up to supercomputers. This new graphics family, known as Xe, is now the foundation of Intel’s graphics portfolio. It comes in four main flavors:

- Xe-HPC for High Performance Computing in Supercomputers

- Xe-HP for High Performance and Optimized FP64

- Xe-HPG for High Performance Gaming with Ray Tracing

- Xe-LP for Low Power for Integrated and Entry Level

Intel has so far rolled out its LP designs into the marketplace, first with its Tiger Lake mobile processors, then with its Xe MAX entry-level notebook graphics card, and now with Rocket Lake.

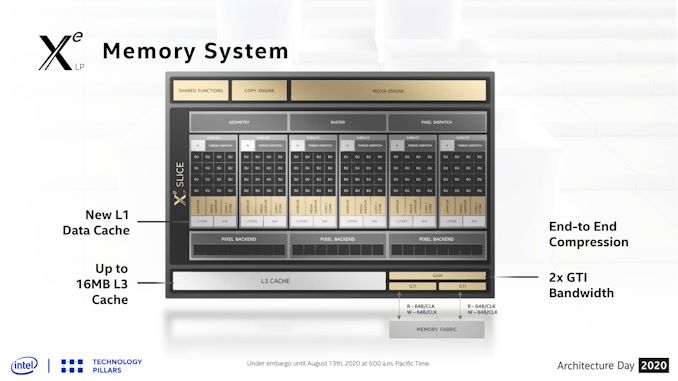

Xe-LP, A Quick Refresher

Intel’s LP improves on the previous Gen11 graphics by reorganizing the base structure of the design. Rather than 7 logic units per execution unit, we now have 8, and LP’s front-end can dispatch up to two triangles per clock rather than one. The default design of LP involves 96 execution units, split into a centralized ‘slice’ that has all the geometry features and fixed function hardware, and up to 6 ‘sub-slices’, each with 16 execution units and 64 KiB of L1 cache. Each variant of LP can then have up to 96 execution units in a 6x16 configuration.

Execution units now work in pairs, rather than on their own, with a thread scheduler shared between each pair. Even with this change, each individual execution unit has moved to an 8+2 wide design, with the first 8 lanes working on FP/INT and the final two on complex math. Previously we saw something more akin to a 4+4 design, so Intel has rebalanced the math engine while also making it larger per unit. This new 8+2 design reduces the chance of complex arithmetic directly blocking the FP pipes, improving throughput particularly in graphics and compute workloads.

The full Tiger Lake LP solution has all 96 execution units, with six sub-slices each of 16 execution units (6x16); Rocket Lake is neutered by comparison. Rocket Lake has 4 sub-slices, which would suggest a 64 execution unit design, but half of the EUs in each sub-slice are disabled, and the final result is a 32 EU implementation (4x8). The two lowest Rocket Lake processors have only a 3x8 design. Having only half of each sub-slice active should in theory give more cache per thread during operation and reduce cache pressure. Intel has presumably enabled this flexibility to provide a lift in edge-case graphics workloads for the parts that have fractional sub-slices enabled.
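The sub-slice arithmetic above can be sketched in a few lines of Python. This is only an illustration: the class and field names are hypothetical, and it assumes that each sub-slice keeps its full 64 KiB of L1 when half of its EUs are disabled, which is what the "more cache per thread" argument implies but which Intel has not been quoted on here.

```python
from dataclasses import dataclass

# From the text: each Xe-LP sub-slice carries 64 KiB of L1 cache.
# Assumption: that L1 stays fully enabled when EUs in the sub-slice are fused off.
L1_PER_SUB_SLICE_KIB = 64


@dataclass(frozen=True)
class XeLPConfig:
    """Hypothetical helper describing an Xe-LP layout as sub-slices x active EUs."""
    name: str
    sub_slices: int
    active_eus_per_sub_slice: int

    @property
    def total_eus(self) -> int:
        # Total active execution units across all sub-slices.
        return self.sub_slices * self.active_eus_per_sub_slice

    @property
    def l1_kib_per_eu(self) -> float:
        # L1 capacity available per active EU within one sub-slice.
        return L1_PER_SUB_SLICE_KIB / self.active_eus_per_sub_slice


configs = [
    XeLPConfig("Tiger Lake (6x16)", 6, 16),
    XeLPConfig("Rocket Lake (4x8)", 4, 8),
    XeLPConfig("Rocket Lake, two lowest parts (3x8)", 3, 8),
]

for cfg in configs:
    print(f"{cfg.name}: {cfg.total_eus} EUs, "
          f"{cfg.l1_kib_per_eu:.0f} KiB of L1 per active EU")
```

Under that assumption, a half-populated sub-slice has twice the L1 per active EU of a fully populated one, which is the source of the reduced cache pressure.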

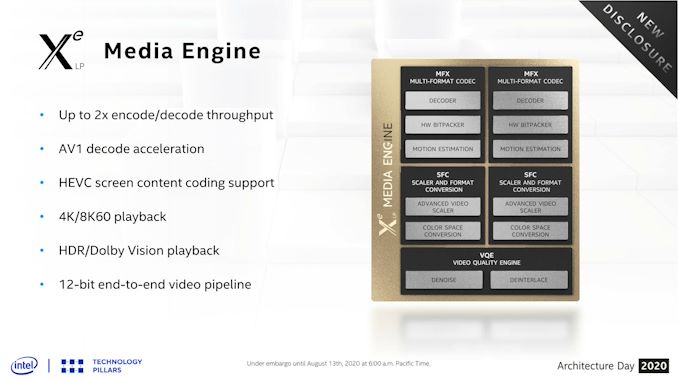

Xe-LP also comes with a revamped media engine. Along with a 12-bit end-to-end video pipeline enabling HDR, there is also HEVC coding support and AV1 decode, the latter of which is a royalty-free codec that reportedly provides similar or better quality than HEVC. Intel offers the first desktop IGP solution to provide accelerated AV1 decode support.

Rocket Lake Comparisons

For this review, we are using the Core i9-11900K, Core i7-11700K, and Core i5-11600K. These three are the highest power processors in Intel’s Rocket Lake lineup, and as a result they support the highest configuration of LP graphics that Intel provides on Rocket Lake. All three processors have a 4x8 configuration, and a turbo frequency up to 1300 MHz.

Intel Integrated Graphics

| AnandTech | Core i9 11900K | Core i7 11700K | Core i5 11600K | Core i9 10900K |
|---|---|---|---|---|
| Cores | 8 / 16 | 8 / 16 | 6 / 12 | 10 / 20 |
| Base Freq | 3500 MHz | 3600 MHz | 3900 MHz | 3700 MHz |
| 1T Turbo | 5300 MHz | 5000 MHz | 4900 MHz | 5300 MHz |
| GPU uArch | Xe-LP | Xe-LP | Xe-LP | Gen9 |
| GPU EUs | 32 EUs | 32 EUs | 32 EUs | 24 EUs |
| GPU Base | 350 MHz | 350 MHz | 350 MHz | 350 MHz |
| GPU Turbo | 1300 MHz | 1300 MHz | 1300 MHz | 1200 MHz |
| Memory | DDR4-3200 | DDR4-3200 | DDR4-3200 | DDR4-2933 |
| Cost (1ku) | $539 | $399 | $262 | $488 |
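To put the 4x8 configuration and its clocks into perspective, a back-of-the-envelope peak FP32 figure can be estimated from the numbers above. This is a rough sketch, not a measured result: the function name is hypothetical, and it assumes each of the 8 FP/INT lanes per EU retires one fused multiply-add (counted as two floating-point operations) per clock.

```python
FP_LANES_PER_EU = 8        # from the text: 8 FP/INT lanes per execution unit
FLOPS_PER_LANE_CLOCK = 2   # assumption: one fused multiply-add per lane per clock


def peak_fp32_gflops(eus: int, clock_mhz: float) -> float:
    """Theoretical peak FP32 throughput in GFLOPS for a given EU count and clock."""
    return eus * FP_LANES_PER_EU * FLOPS_PER_LANE_CLOCK * clock_mhz / 1000.0


# Rocket Lake 4x8 at the GPU base and turbo clocks listed in the table.
at_base = peak_fp32_gflops(32, 350)
at_turbo = peak_fp32_gflops(32, 1300)

print(f"32 EUs @ 350 MHz:  {at_base:.1f} GFLOPS")
print(f"32 EUs @ 1300 MHz: {at_turbo:.1f} GFLOPS")
```

Real workloads will land well below these figures, since integrated graphics is usually limited by memory bandwidth and power long before the EUs saturate.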

Our comparison points are going to be Intel’s previous generation Gen9 graphics, as tested on the Core i9-10900K which has a 24 Execution Unit design, AMD’s latest desktop processors, a number of Intel’s mobile processors, and a discrete graphics option with the GT1030.

In all situations, we will be testing with JEDEC memory. Graphics loves memory bandwidth, and CPU memory controllers are slow by comparison to mobile processors or discrete cards; while a GPU might love 300 GB/s from some GDDR memory, a CPU with two channels of DDR4-3200 will only have 51.2 GB/s. Also, that memory bank needs to be shared between CPU and GPU, making it all the more complex. The use case for most of these processors on integrated graphics will often be in prebuilt systems designed to a price. That being said, if the price of Ethereum keeps increasing, integrated graphics might be the only thing we have left.
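The 51.2 GB/s figure follows directly from the memory configuration. A minimal sketch of the arithmetic, using a hypothetical helper and the standard 64-bit width of a DDR4 channel:

```python
def ddr_peak_bandwidth_gbs(transfers_mts: int,
                           channels: int = 2,
                           bus_width_bits: int = 64) -> float:
    """Theoretical peak bandwidth in GB/s (1 GB = 10^9 bytes).

    transfers_mts:  data rate in mega-transfers per second (3200 for DDR4-3200)
    channels:       number of populated memory channels
    bus_width_bits: data width of one channel (64 bits for DDR4)
    """
    bytes_per_transfer = bus_width_bits // 8
    return transfers_mts * bytes_per_transfer * channels / 1000.0


ddr4_3200 = ddr_peak_bandwidth_gbs(3200)   # Rocket Lake, JEDEC
ddr4_2933 = ddr_peak_bandwidth_gbs(2933)   # Core i9-10900K, JEDEC (nominal rate)

print(f"Dual-channel DDR4-3200: {ddr4_3200:.1f} GB/s")
print(f"Dual-channel DDR4-2933: {ddr4_2933:.1f} GB/s")
print(f"A 300 GB/s GDDR setup has {300 / ddr4_3200:.1f}x the bandwidth")
```

These are peak numbers; sustained bandwidth is lower, and on an integrated design it is split between the CPU cores and the GPU.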

The goal for our testing comes in two flavors: Best Case and Best Experience. This means for most benchmarks we will be testing at 720p Low and 1080p Max, as this is the area in which integrated graphics is used. If a design can’t perform at 720p Low, then it won’t be going anywhere soon; however, if we can achieve good results at 1080p Max in certain games, then integrated graphics becomes a competitive option against the basic discrete graphics solutions.

If you would like to see the full CPU review of these Rocket Lake processors, please read our review:

Intel Rocket Lake (14nm) Review: Core i9-11900K, Core i7-11700K, and Core i5-11600K


165 Comments


GeoffreyA - Monday, May 10, 2021 - link

180 or 130 nm. Yikes. No wonder.

mode_13h - Tuesday, May 11, 2021 - link

Thanks for the details. I was confused about which generation he meant. If he'd have supplied even half the specifics you did, that could've been avoided.

Also, dragging up examples from the 2000's just seems like an egregious stretch to engage in Intel-bashing that's basically irrelevant to the topic at hand.

mode_13h - Wednesday, May 12, 2021 - link

There's another thing that bugs me about his post, and I figured out what it is. Everything he doesn't like seems to be the result of greed or collusion. Whatever happened to plain old incompetence?

And even competent organizations and teams sometimes build a chip with a fatal bug or run behind schedule and miss their market window. Maybe they *planned* on having a suitable GPU, but the plan fell through and they misjudged the suitability of their fallback solution? Intel has certainly shown itself to be fallible, time and again.

GeoffreyA - Wednesday, May 12, 2021 - link

Incompetence and folly have worked against these companies over and over again. The engineering has almost always been brilliant but the decisions have often been wrong.

yeeeeman - Friday, May 7, 2021 - link

Silvermont was quite good in terms of power and performance. It actually competed very well with the snapdragon 835 at the time and damn the process was efficient. The vcore was 0.35v! And on the igpu you can play rocket league at low settings 720p with 20 fps and 3w total chip power. If that isn't amazing for a 2014 chip then I don't know what it is. Intel actually was very good in between 2006 core 2 duo and 2015 with Skylake. Their process was superior to the competition and the designs are quite good also.

mode_13h - Friday, May 7, 2021 - link

Thanks for the details.

SarahKerrigan - Saturday, May 8, 2021 - link

Silvermont was fine, though not great. The original Atom core family was utterly godawful - dual-issue in-order, and clocked like butt.

mode_13h - Sunday, May 9, 2021 - link

The 1st gen had hyperthreading, which Intel left out of all subsequent generations.

Gracemont is supposed to be pretty good, but then it's a lot more complex, as well.

Oxford Guy - Sunday, May 9, 2021 - link

The only thing that's really noteworthy about the first generation is how much of a bait and switch the combination of the CPU and chipset + GPU was, and how the netbook hype succeeded.

It was literally paying for pain (the CPU's ideological purist design, pursuing power efficiency too much at the expense of time efficiency) and getting much more pain without (often) knowing it (the disgustingly inefficient chipset + GPU).

As for the hyperthreading... my very hazy recollection is that it didn't do much to enhance the chip's performance. As to why it was dumped for later iterations — probably corporate greed (i.e. segmentation). Greed is the only reason why Atom was such a disgusting product. Had the CPU been paired with a chipset + GPU designed according to the same purist ideological goal it would have been vastly more acceptable.

As it was, the CPU was the tech world's equivalent of the 'active ingredient' scam used in pesticides. For example, one fungicide's ostensible active ingredient is 27,000 times less toxic to a species of bee than one of the 'inert' ingredients used in a formulation.

watersb - Sunday, May 9, 2021 - link

The first ever Atom platform did indeed use a chipset that used way more power than the CPU itself. 965G I think. I built a lab of those as a tiny desktop platform for my daughter's school at the time. They were good enough for basic Google Earth at full screen, 1024x768.

The next Atom I had was a Something Trail tablet, the HP Stream 7 of 2014. These were crippled by using only one of the two available memory channels, which was devastating to the overall platform performance. Low end chips can push pixels at low spec 7-inch displays, if they don't have to block waiting for DRAM. The low power, small CPU cache Atom pretty much requires a decent pipe to RAM, otherwise you blow the power budget just sitting there.

The most recent Atoms I have used are HP Stream 11, N2000 series little Windows laptops. Perfect for little kids, especially low income families who were caught short this past year, trying to provide one laptop per child as the schools went to remote-only last year.

Currently the Atom N4000 series makes for a decent Chromebook platform for remote learning.

So I can get stuff done on Atom laptops. Not competitive to ARM ones, performance or power efficiency, but my MacBook Pro M1 cost the same as that 10-seat school lab. Both the Mac and the lab are very good value for the money. Neither choice will get you Crysis or Tomb Raider.