Intel Core i7-11700K Review: Blasting Off with Rocket Lake

by Dr. Ian Cutress on March 5, 2021 4:30 PM EST- Posted in

- CPUs

- Intel

- 14nm

- Xe-LP

- Rocket Lake

- Cypress Cove

- i7-11700K

CPU Tests: Microbenchmarks

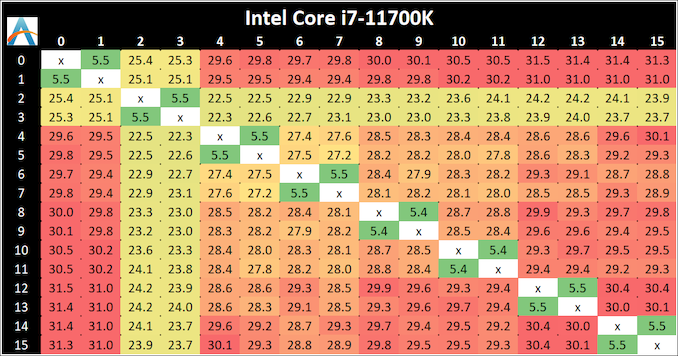

Core-to-Core Latency

As the core count of modern CPUs is growing, we are reaching a time when the time to access each core from a different core is no longer a constant. Even before the advent of heterogeneous SoC designs, processors built on large rings or meshes can have different latencies to access the nearest core compared to the furthest core. This rings true especially in multi-socket server environments.

But modern CPUs, even desktop and consumer CPUs, can have variable access latency to get to another core. For example, in the first generation Threadripper CPUs, we had four chips on the package, each with 8 threads, and each with a different core-to-core latency depending on if it was on-die or off-die. This gets more complex with products like Lakefield, which has two different communication buses depending on which core is talking to which.

If you are a regular reader of AnandTech’s CPU reviews, you will recognize our Core-to-Core latency test. It’s a great way to show exactly how groups of cores are laid out on the silicon. This is a custom in-house test built by Andrei, and we know there are competing tests out there, but we feel ours is the most accurate to how quick an access between two cores can happen.

The core-to-core numbers are interesting, being worse (higher) than the previous generation across the board. Here we are seeing, mostly, 28-30 nanoseconds, compared to 18-24 nanoseconds with the 10700K. This is part of the L3 latency regression, as shown in our next tests.

One pair of threads here are very fast to access all cores, some 5 ns faster than any others, which again makes the layout more puzzling.

Update 1: With microcode 0x34, we saw no update to the core-to-core latencies.

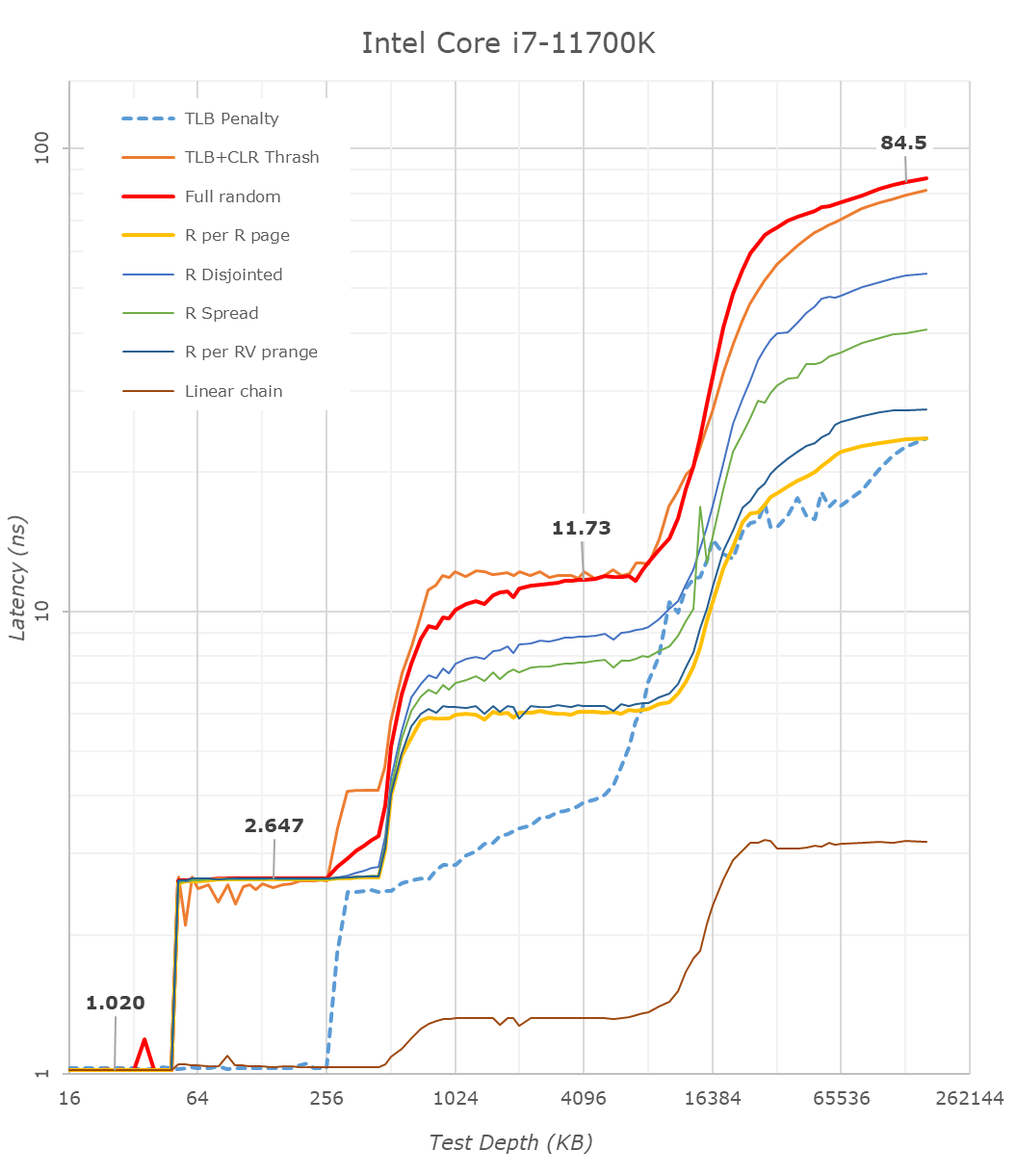

Cache-to-DRAM Latency

This is another in-house test built by Andrei, which showcases the access latency at all the points in the cache hierarchy for a single core. We start at 2 KiB, and probe the latency all the way through to 256 MB, which for most CPUs sits inside the DRAM (before you start saying 64-core TR has 256 MB of L3, it’s only 16 MB per core, so at 20 MB you are in DRAM).

Part of this test helps us understand the range of latencies for accessing a given level of cache, but also the transition between the cache levels gives insight into how different parts of the cache microarchitecture work, such as TLBs. As CPU microarchitects look at interesting and novel ways to design caches upon caches inside caches, this basic test proves to be very valuable.

Looking at the rough graph of the 11700K and the general boundaries of the cache hierarchies, we again see the changes of the microarchitecture that had first debuted in Intel’s Sunny Cove cores, such as the move from an L1D cache from 32KB to 48KB, as well as the doubling of the L2 cache from 256KB to 512KB.

The L3 cache on these parts look to be unchanged from a capacity perspective, featuring the same 16MB which is shared amongst the 8 cores of the chip.

On the DRAM side of things, we’re not seeing much change, albeit there is a small 2.1ns generational regression at the full random 128MB measurement point. We’re using identical RAM sticks at the same timings between the measurements here.

It’s to be noted that these slight regressions are also found across the cache hierarchies, with the new CPU, although it’s clocked slightly higher here, shows worse absolute latency than its predecessor, it’s also to be noted that AMD’s newest Zen3 based designs showcase also lower latency across the board.

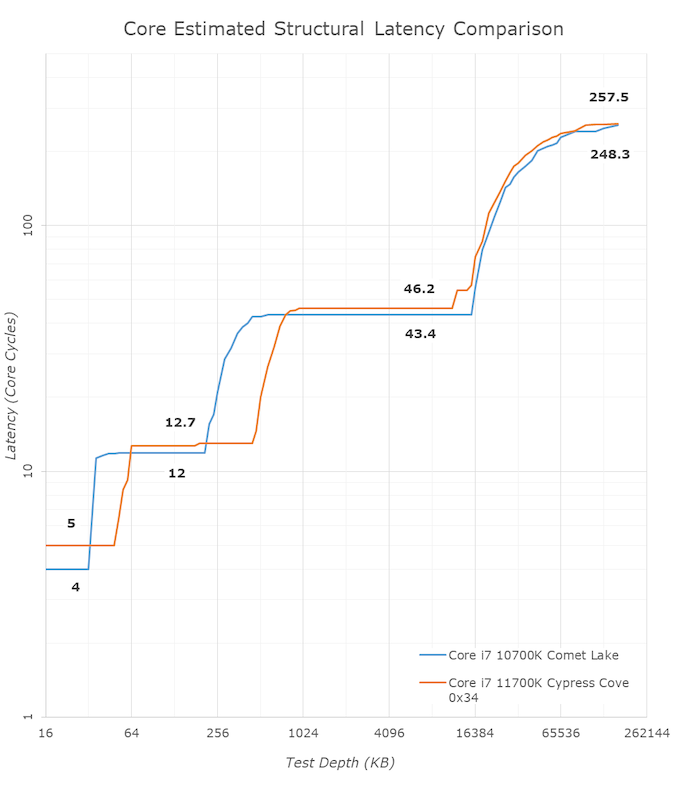

With the new graph of the Core i7-11700K with microcode 0x34, the same cache structures are observed, however we are seeing better performance with L3.

The L1 cache structure is the same, and the L2 is of a similar latency. In our previous test, the L3 latency was 50.9 cycles, but with the new microcode is now at 45.1 cycles, and is now more in line with the L3 cache on Comet Lake.

Out at DRAM, our 128 MB point reduced from 82.4 nanoseconds to 72.8 nanoseconds, which is a 12% reduction, but not the +40% reduction that other media outlets are reporting as we feel our tools are more accurate. Similarly, for DRAM bandwidth, we are seeing a +12% memory bandwidth increase between 0x2C and 0x34, not the +50% bandwidth others are claiming. (BIOS 0x1B however, was significantly lower than this, resulting in a +50% bandwidth increase from 0x1B to 0x34.)

In the previous edition of our article, we questioned the previous L3 cycle being a larger than estimated regression. With the updated microcode, the smaller difference is still a regression, but more in line with our expectations. We are waiting to hear back from Intel what differences in the microcode encouraged this change.

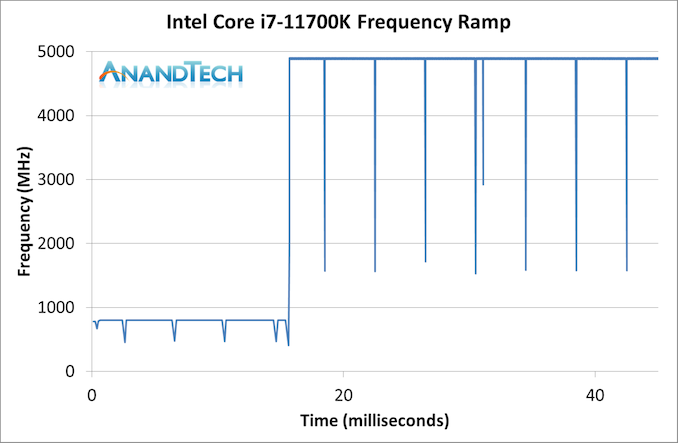

Frequency Ramping

Both AMD and Intel over the past few years have introduced features to their processors that speed up the time from when a CPU moves from idle into a high powered state. The effect of this means that users can get peak performance quicker, but the biggest knock-on effect for this is with battery life in mobile devices, especially if a system can turbo up quick and turbo down quick, ensuring that it stays in the lowest and most efficient power state for as long as possible.

Intel’s technology is called SpeedShift, although SpeedShift was not enabled until Skylake.

One of the issues though with this technology is that sometimes the adjustments in frequency can be so fast, software cannot detect them. If the frequency is changing on the order of microseconds, but your software is only probing frequency in milliseconds (or seconds), then quick changes will be missed. Not only that, as an observer probing the frequency, you could be affecting the actual turbo performance. When the CPU is changing frequency, it essentially has to pause all compute while it aligns the frequency rate of the whole core.

We wrote an extensive review analysis piece on this, called ‘Reaching for Turbo: Aligning Perception with AMD’s Frequency Metrics’, due to an issue where users were not observing the peak turbo speeds for AMD’s processors.

We got around the issue by making the frequency probing the workload causing the turbo. The software is able to detect frequency adjustments on a microsecond scale, so we can see how well a system can get to those boost frequencies. Our Frequency Ramp tool has already been in use in a number of reviews.

Our ramp test shows a jump straight from 800 MHz up to 4900 MHz in around 17 milliseconds, or a frame at 60 Hz.

541 Comments

View All Comments

GeoffreyA - Tuesday, March 9, 2021 - link

Cage of their own making: I like that phrase. Well, I think for Intel, the best path is to design a microarchitecture from scratch, which I'm sure they're doing. Possibly Intel Israel. Sunny Cove, descending all the way from P6, is not holding up any more.Zim - Sunday, March 7, 2021 - link

Time to be me some more AMD stock.alhopper - Sunday, March 7, 2021 - link

What an awesome deep-dive technical expose/review of this processor. No stone left un-turned - incredibly detailed investigation and easily understood explanations. With this level of detail every reader can make up their own mind about the pros/cons of this product - there's no need for me to offer an opinion.Many thanks for this well written treasure trove of technical information and the graphics are wonderful. Kudos to the entire team and keep up the Good Work (TM) !!

poohbear - Sunday, March 7, 2021 - link

Wow how the tables have turned. I remember for AMD's Bulldozer review, which was supposed to be AMD's answer to Core, Bulldozer was similar to Rocket Lake...it consumed MORE power and was SLOWER than the competition. Well, here's to Intel making a comeback with Alder Lake, but 2021 clearly belongs to AMD.Hifihedgehog - Monday, March 8, 2021 - link

Alder Lake, just confirmed in a lineup leak to use a heavy amount of little or low power cores, first has to ensure that they get heterogenous core architecture working in the Windows scheduler. The biggest roadblock Microsoft claimed to have already fixed it with Lakefield but even after they did and additionally Cinebench, per Intel’s special emergency request, to recompile a special update to R20 after that, Lakefield was an absolute failure in performance compared to a Y-class (or M notated) Core product. Personally, I have little faith in Microsoft and I also do not expect software companies recompiling every last piece of software made in the last decade either just to accommodate a radical new design all for Intel’s sake. Developers would much rather assume a simple, vanilla core architecture than an unbalanced one with little and big cores and therein lies a huge risk factor for Intel. I foresee a huge misfire here because Microsoft isn’t exactly a good company to bet on refixing what they claimed they have already fixed with Lakefield.GeoffreyA - Monday, March 8, 2021 - link

I'm sceptical of this whole fast/slow core approach myself and believe it's a mistake. They will learn the hard way. Perhaps it works for Apple, I don't know, but Intel and AMD are better of spending their efforts in cutting down power in their ordinary cores dynamically.Hifihedgehog - Monday, March 8, 2021 - link

It works for Apple because they have a iron-tight grasp on the full stack which requires developers to use their latest compilation and development tools to get their apps to the App Store and ultimately in users’ hands. You can run pretty much anything under the sun for 2 decades plus on Windows and without a recompile, you will never utilize their cores correctly. The Windows scheduler as it stands is garbage tier compared to Linux with a plain vanilla homogenous core design so I expect very little from Microsoft unless they actually redesign their scheduler from the ground up. Don’t hold your breath on that one.GeoffreyA - Monday, March 8, 2021 - link

The whole idea smacks of Bulldozer, and that means disaster.blppt - Monday, March 8, 2021 - link

Only if their single thread performance sucks (which from all I've seen, it won't)---Bulldozer's problem was being behind in manufacturing process and requiring a highly-threaded application to shine.Hifihedgehog - Tuesday, March 9, 2021 - link

Well, the problem then is how smartly it assigns threads to the right cores and the Windows scheduler still has serious problems with that to this very day. If it doesn’t do that right, it could appear like an overclocked Core i3 in comparison to Ryzen and that is where the Bulldozer analogy is fitting. AMD banked on their unique topology getting OS and development optimization down the road—FineWine to coin a phrase—but that never materialized.