Intel Core i7-11700K Review: Blasting Off with Rocket Lake

by Dr. Ian Cutress on March 5, 2021 4:30 PM EST - Posted in

- CPUs

- Intel

- 14nm

- Xe-LP

- Rocket Lake

- Cypress Cove

- i7-11700K

CPU Tests: Microbenchmarks

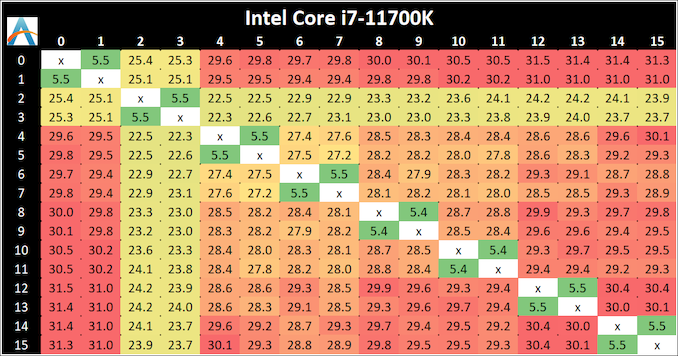

Core-to-Core Latency

As core counts in modern CPUs grow, we are reaching a point where the time for one core to access another is no longer constant. Even before the advent of heterogeneous SoC designs, processors built on large rings or meshes could have different latencies to reach the nearest core compared with the furthest core. This is especially true in multi-socket server environments.

But modern CPUs, even desktop and consumer CPUs, can have variable access latency to get to another core. For example, the first-generation Threadripper CPUs had four chips on the package, each with 8 cores, and the core-to-core latency differed depending on whether the two cores were on the same die or on different dies. This gets more complex with products like Lakefield, which has two different communication buses depending on which core is talking to which.

If you are a regular reader of AnandTech’s CPU reviews, you will recognize our Core-to-Core latency test. It’s a great way to show exactly how groups of cores are laid out on the silicon. This is a custom in-house test built by Andrei, and we know there are competing tests out there, but we feel ours most accurately reflects how quickly one core can access another.

The core-to-core numbers are interesting: they are worse (higher) than the previous generation across the board. We mostly see 28-30 nanoseconds here, compared to 18-24 nanoseconds on the 10700K. This is part of the L3 latency regression shown in our next tests.

One pair of threads here is very fast to access all cores, some 5 ns faster than any other, which again makes the layout more puzzling.

Update 1: With microcode 0x34, we saw no change in the core-to-core latencies.

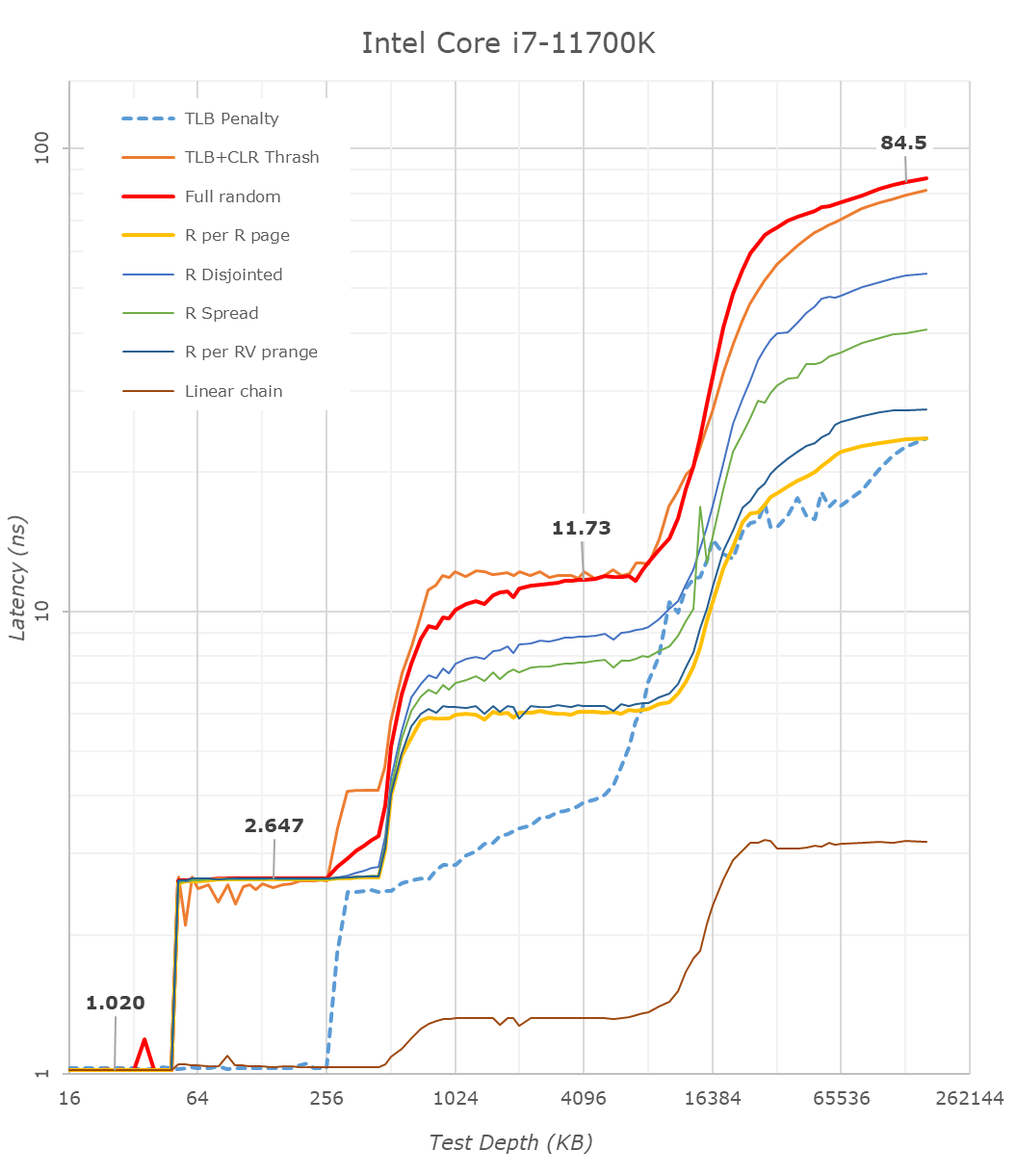

Cache-to-DRAM Latency

This is another in-house test built by Andrei, which shows the access latency at every point in the cache hierarchy for a single core. We start at 2 KiB and probe the latency all the way up to 256 MB, which for most CPUs sits inside DRAM. (Before you point out that the 64-core Threadripper has 256 MB of L3: any one core can only use the 16 MB in its own core complex, so at 20 MB you are in DRAM.)

This test shows the range of latencies for accessing each level of cache, and the transitions between cache levels also give insight into how other parts of the cache microarchitecture work, such as the TLBs. As CPU microarchitects look at interesting and novel ways to design caches upon caches inside caches, this basic test proves to be very valuable.
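Latency probes of this kind are commonly built on pointer chasing: each load's address depends on the result of the previous load, and the chain is randomized so hardware prefetchers cannot easily predict the next address. The sketch below is not Andrei's tool, whose internals are not described here; it is a minimal illustration, assuming one chain node per 64-byte cache line, of how such a chain and the 2 KiB to 256 MB size sweep might be built. The names `sweep_sizes`, `build_chain` and `walk` are hypothetical, and a real probe would walk the chain in C or assembly with a high-resolution timer, since Python's interpreter overhead would swamp any cache effect.

```python
import random

LINE_BYTES = 64  # assumption: one chain node per 64-byte cache line


def sweep_sizes(start_bytes=2 * 1024, stop_bytes=256 * 1024 * 1024):
    """Working-set sizes in bytes, doubling from 2 KiB up to 256 MiB."""
    sizes = []
    size = start_bytes
    while size <= stop_bytes:
        sizes.append(size)
        size *= 2
    return sizes


def build_chain(num_nodes, seed=0):
    """Return next_index such that following it from any node visits every
    node exactly once before coming back: one random cycle."""
    rng = random.Random(seed)
    order = list(range(num_nodes))
    rng.shuffle(order)  # random visiting order, hard for prefetchers to predict
    next_index = [0] * num_nodes
    for pos, node in enumerate(order):
        # Link each node to the one after it in the shuffled order (wrapping around).
        next_index[node] = order[(pos + 1) % num_nodes]
    return next_index


def walk(next_index, steps, start=0):
    """Follow the chain for `steps` hops. In a real probe each hop is one
    dependent load, so total time / steps approximates average load latency."""
    idx = start
    for _ in range(steps):
        idx = next_index[idx]
    return idx


# Example: the 2 KiB point holds 2048 // 64 = 32 nodes.
chain = build_chain(sweep_sizes()[0] // LINE_BYTES)
```

Because every node is visited once per lap, the working set touched by the walk equals the test size, which is what lets the latency curve step up as each cache level overflows.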

Looking at the graph for the 11700K and the rough boundaries of the cache hierarchy, we again see the microarchitectural changes that first debuted in Intel’s Sunny Cove cores, such as the L1D cache growing from 32KB to 48KB and the L2 cache doubling from 256KB to 512KB.

The L3 cache on these parts looks to be unchanged in capacity, at the same 16MB shared among the chip’s 8 cores.
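When reading the latency graph, it can help to know which level a given test size should land in. The helper below is a rough guide using only the capacities stated above (48KB L1D, 512KB L2, 16MB shared L3), under the assumption that KB and MB mean binary units; `expected_level` is a hypothetical name. Real transitions are gradual because of associativity, TLB reach, and other data sharing the caches, so the boundaries on the graph will not be this sharp.

```python
KIB = 1024
MIB = 1024 * KIB

# Capacities for the i7-11700K as stated in the text (assumed binary units).
CACHE_LEVELS = [
    ("L1D", 48 * KIB),
    ("L2", 512 * KIB),
    ("L3", 16 * MIB),  # shared by all 8 cores
]


def expected_level(working_set_bytes, levels=CACHE_LEVELS):
    """Return the smallest cache level whose capacity holds the working set,
    or 'DRAM' if none does. Ignores associativity and TLB effects."""
    for name, capacity in levels:
        if working_set_bytes <= capacity:
            return name
    return "DRAM"
```

For example, the 128 MB point is eight times the L3 capacity, which is why it serves as the DRAM measurement in the next paragraphs.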

On the DRAM side of things, we’re not seeing much change, although there is a small 2.1 ns generational regression at the full-random 128MB measurement point. We used identical memory modules at the same timings for both measurements.

These slight regressions are also found throughout the cache hierarchy: although the new CPU is clocked slightly higher here, it shows worse absolute latency than its predecessor. It should also be noted that AMD’s newest Zen 3-based designs show lower latency across the board.

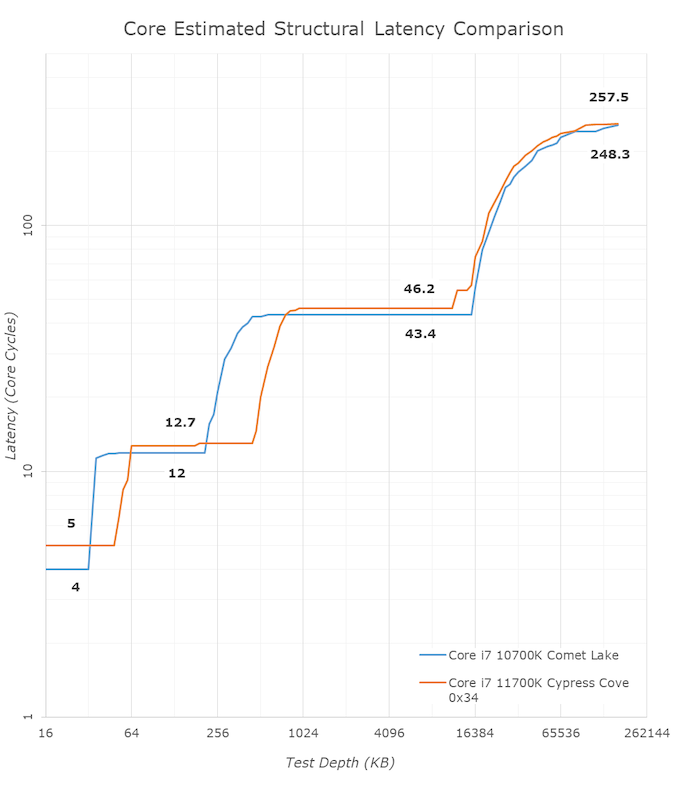

In the new graph of the Core i7-11700K with microcode 0x34, we observe the same cache structures, but L3 performance is better.

The L1 cache structure is the same, and the L2 has similar latency. In our previous test, the L3 latency was 50.9 cycles, but with the new microcode it is now 45.1 cycles, more in line with the L3 cache on Comet Lake.

Out at DRAM, our 128 MB point dropped from 82.4 nanoseconds to 72.8 nanoseconds, a 12% reduction, rather than the 40% reduction that other media outlets are reporting; we feel our tools are more accurate. Similarly, for DRAM bandwidth, we see a +12% increase between microcode 0x2C and 0x34, not the +50% others are claiming. (With BIOS 0x1B, however, bandwidth was significantly lower, so going from 0x1B to 0x34 does give a +50% bandwidth increase.)
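The percentages above follow from a simple relative-change calculation. The snippet below only checks that arithmetic with the figures quoted in the text; `percent_change` is an illustrative name, not part of any tool.

```python
def percent_change(before, after):
    """Signed percentage change from `before` to `after`; negative means a reduction."""
    return (after - before) / before * 100.0


# Figures quoted in the text
dram_128mb = percent_change(82.4, 72.8)  # about -11.7%, the ~12% reduction
l3_cycles = percent_change(50.9, 45.1)   # about -11.4%

# For scale: a 40% latency reduction from 82.4 ns would mean roughly 49.4 ns.
forty_percent_target_ns = 82.4 * (1 - 0.40)
```

Both the DRAM latency and the L3 cycle count improve by a similar 11-12%, which is well short of what a 40% reduction (about 49.4 ns at the 128 MB point) would require.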

In the previous edition of this article, we questioned why the L3 cycle latency regression was larger than expected. With the updated microcode, the smaller difference is still a regression, but more in line with our expectations. We are waiting to hear back from Intel on what changes in the microcode brought this about.

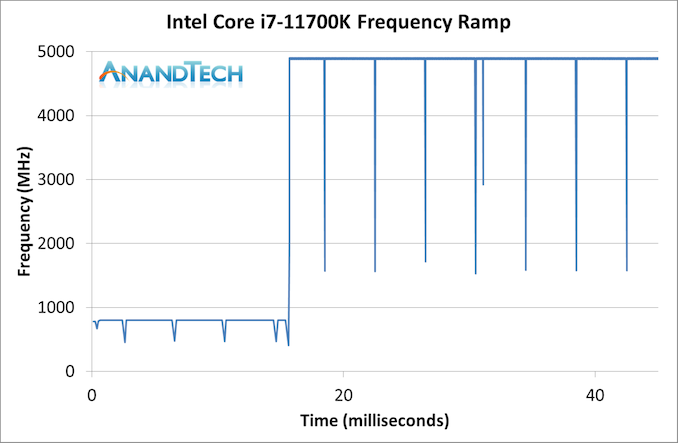

Frequency Ramping

Over the past few years, both AMD and Intel have introduced features that shorten the time it takes a CPU to move from idle into a high-power state. This means users get peak performance sooner, but the biggest knock-on effect is on battery life in mobile devices: a system that can turbo up quickly and turbo down quickly stays in its lowest, most efficient power state for as long as possible.

Intel’s technology is called SpeedShift, and it was not enabled until Skylake.

One issue with this technology, though, is that the frequency adjustments can be so fast that software cannot detect them. If the frequency is changing on the order of microseconds, but your software only probes frequency every few milliseconds (or seconds), then quick changes will be missed. Not only that, an observer probing the frequency could be affecting the actual turbo performance: when the CPU changes frequency, it essentially has to pause all compute while it aligns the frequency of the whole core.

We wrote an extensive analysis piece on this, called ‘Reaching for Turbo: Aligning Perception with AMD’s Frequency Metrics’, prompted by an issue where users were not observing the peak turbo speeds of AMD’s processors.

We got around the issue by making the frequency probe itself the workload that causes the turbo. The software can detect frequency adjustments on a microsecond scale, so we can see how quickly a system reaches its boost frequencies. Our Frequency Ramp tool has already been used in a number of reviews.
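One general way a probe can double as the workload, sketched here as an assumption rather than a description of our tool's internals: run short chunks of a dependent-instruction loop whose cost in cycles per iteration is known, time each chunk, and convert to frequency as iterations × cycles per iteration / elapsed time. Python cannot run a loop with a fixed cycle cost, so only the conversion and the ramp-time lookup are shown; `chunk_frequency_mhz`, `time_to_reach`, and the known cycles-per-iteration are hypothetical.

```python
def chunk_frequency_mhz(iterations, cycles_per_iteration, elapsed_ns):
    """Effective core frequency in MHz for one timed chunk of a loop whose
    per-iteration cost in cycles is assumed known (e.g. one dependent add)."""
    cycles = iterations * cycles_per_iteration
    # cycles per nanosecond is GHz; scaling by 1000 gives MHz.
    return cycles * 1000.0 / elapsed_ns


def time_to_reach(samples, target_mhz):
    """samples: (timestamp_ms, frequency_mhz) pairs in time order.
    Return the first timestamp at which the target frequency is reached, or None."""
    for timestamp_ms, freq_mhz in samples:
        if freq_mhz >= target_mhz:
            return timestamp_ms
    return None
```

Keeping each chunk in the microsecond range is what lets this approach resolve ramps far shorter than a millisecond-scale polling tool could see.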

Our ramp test shows a jump straight from 800 MHz up to 4900 MHz in around 17 milliseconds, or roughly one frame at 60 Hz (16.7 ms).

541 Comments


shabby - Sunday, March 7, 2021

I hear Intel will be bringing back BTX to cope with the 300W+ 11900K...

Doug1820 - Sunday, March 7, 2021

Rocket Lake is looking more and more like NetBurst 2.0.

Oxford Guy - Sunday, March 7, 2021

Someone literally wrote ‘I commend Intel for this release’. Commend? Perhaps the word originally sought was condemn?

This product exists due to inadequate competition. It is a gift from monopolization. While the mantra of the corporation is ‘sell less for more’, it’s adequate competition that’s supposed to be its saving grace. That hasn’t been the case in many areas of tech for a long time.

Not only is AMD not enough competition, it took this long for the company to finally beat Intel badly. If Intel had managed its business better it would still be competitive.

And, to top things off, just like in GPUs (dire lack of competition) it’s extremely profitable to fail. Nvidia is failing to meet gamer demand, for various reasons that come down to inadequate competition. Despite that, it is taking record money. Intel is profitable despite failing in the consumer desktop CPU market.

Allison Kilkenny joked that America is special because one can ‘fail upward’. But, really, tech has a huge problem with inadequate competition — a global one.

People see brief glimmers of competition and mistake it for adequate competition. This is not the first time AMD had the better CPU design and we all know how that turned out.

Being held captive by one and 1/2 companies (the typical tech competition ratio) isn’t at all close to approaching ideal.

If huge profitability while failing very badly to meet demand is the recipe for a good state of business...

GeoffreyA - Monday, March 8, 2021

I believe that was me. "While it hasn't displaced Ryzen, I commend Intel for the effort that Rocket Lake is."

I should have worded it better, I agree, but what I meant was, instead of just releasing another Skylake refresh, they did the unthinkable, in an attempt to release something: porting Sunny Cove to their legacy node. "We will fail, but we'll try anyway, futile though our efforts may be." Their being the underdog at present makes me feel a bit sorry for them, but then again, when we consider Intel's bank account, we realise they don't need our sympathy.

For my part, I am not an Intel shill or proponent. In fact, from my teenage years I've had a distaste towards them, and as I see it, Rocket Lake is a disappointment: still behind Zen 3, using far more power, slower graphics than Tiger Lake. There's practically no good in it. For the elusive Sunny Cove, I had expected a lot more. Despite that, I do commend them for making an attempt, something in life that's more important than winning.

GeoffreyA - Monday, March 8, 2021

As for the business/economic side of the matter, I don't understand these things well enough to comment, but from that side, Intel has always been wretched; and all these companies are only out to make profit. We've even learned that when someone offers something for nothing, it comes at a cost (Google, Facebook, etc.).

Spunjji - Monday, March 8, 2021

100%

Oxford Guy - Tuesday, March 9, 2021

‘all these companies are only out to make profit’

Which means consumers need to get their heads out of the sand and fight for value for their dollars.

Spunjji - Monday, March 8, 2021

Intel are very much not the underdog - they're still the 800 lb gorilla, they've just got themselves trapped inside a cage of their own making. Tiger Lake is an example of the fact that they're still capable of first-tier core designs; they're just not (currently) capable of bringing them to market on a competitive process.

Hifihedgehog - Tuesday, March 9, 2021

They are 800 lbs on fixed rations in self-imposed solitary confinement. Watch them slim down in a jiffy to as skinny as a rail if Alder Lake fails to impress. It was a slippery slope with Bulldozer. The only issue with Intel is the sheer amount of corporate waste. If they go down, others will be ready to swoop in and pick up the broken pieces left by layoffs: pieces that, once surrendered, can never again be recovered without a fight. Intel has never been fully challenged, but they just might fall into Bulldozer-like obscurity this time around.

GeoffreyA - Tuesday, March 9, 2021

"self-imposed solitary confinement"

Hard to believe how the tables have turned in a matter of four years, and worse for Intel, this isn't the slack, "we've done a good job, let's rest" AMD of the K8 days. This time round, they're relentlessly executing, with a vigour I don't remember them possessing. Whether it's Lisa or just the pain they went through in the Bulldozer days, I don't know, but it's magnificent to watch.