The NVIDIA Turing GPU Architecture Deep Dive: Prelude to GeForce RTX

by Nate Oh on September 14, 2018 12:30 PM EST

Unpacking 'RTX', 'NGX', and Game Support

One of the more complicated aspects of GeForce RTX and Turing is the 'RTX' branding itself, along with how all of Turing's features are collectively called the NVIDIA RTX platform. To recap, here is a quick list of the separate but similarly named groupings:

- NVIDIA RTX Platform - general platform encompassing all Turing features, including advanced shaders

- NVIDIA RTX Raytracing technology - name for ray tracing technology under RTX platform

- GameWorks Raytracing - raytracing denoiser module for GameWorks SDK

- GeForce RTX - the brand connected with games using NVIDIA RTX real time ray tracing

- GeForce RTX - the brand for graphics cards

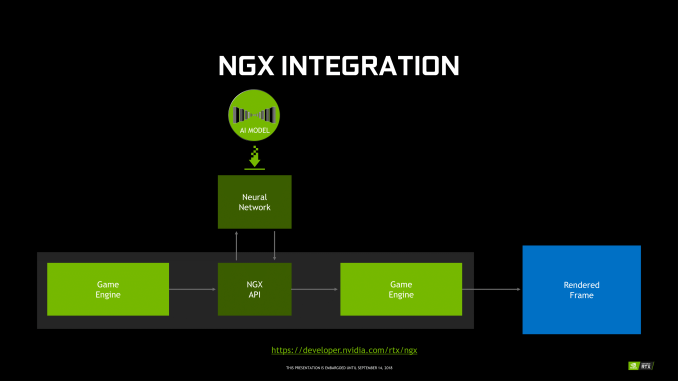

NGX technically falls under the RTX platform and includes Deep Learning Super Sampling (DLSS). Using a deep neural network (DNN) specific to the game and trained on very high quality 64x supersampled 'ground truth' images, DLSS uses tensor cores to infer high quality antialiased results. In the standard mode, DLSS renders at a lower input sample count, typically half as many samples, though this may depend on the game, and then infers a result that at the target resolution is similar in quality to a TAA result. A DLSS 2X mode also exists, where the input is rendered at the final target resolution and then combined with a larger DLSS network.
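To put the sample counts in perspective, here is a rough back-of-the-envelope sketch in Python. The 2x reduction and the 64x supersampling factor come from the description above; the 3840x2160 target resolution and the helper name `samples_per_frame` are assumptions chosen purely for illustration, and none of this reflects how NVIDIA's DLSS implementation actually works internally.

```python
# Illustrative arithmetic only. The 0.5x and 64x factors come from the article text;
# the 3840x2160 target resolution is an assumed example, not an NVIDIA figure.

def samples_per_frame(width: int, height: int, samples_per_pixel: float) -> int:
    """Total samples for one frame at the given per-pixel sample rate."""
    return round(width * height * samples_per_pixel)

TARGET_W, TARGET_H = 3840, 2160  # assumed target resolution

native = samples_per_frame(TARGET_W, TARGET_H, 1.0)         # one sample per target pixel
dlss_input = samples_per_frame(TARGET_W, TARGET_H, 0.5)     # "typically 2x less" input
dlss_2x_input = native                                      # DLSS 2X renders at target resolution
ground_truth = samples_per_frame(TARGET_W, TARGET_H, 64.0)  # 64x supersampled training image

print(f"Native target frame:       {native:>12,} samples")
print(f"DLSS standard mode input:  {dlss_input:>12,} samples")
print(f"DLSS 2X mode input:        {dlss_2x_input:>12,} samples")
print(f"64x 'ground truth' image:  {ground_truth:>12,} samples")
```

Under these assumptions, the standard mode shades half as many samples as a native frame, while each ground truth training image contains 64 times as many, which gives a sense of the quality gap the network is being asked to close.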

Fortunately, GeForce Experience (GFE) is not required for NGX features to work, and all the necessary NGX files will be available via the standard Game Ready drivers, though it's not clear how often DNNs for particular games would be updated.

In the case of RTX-OPS, it describes a workload for a frame where both RT and Tensor Cores are utilized; currently, the classic scenario would be a game with real time ray tracing and DLSS. So by definition, it only accurately measures that type of workload. However, this metric currently does not apply to any game, as DXR has not yet been released. For the time being, the metric does not describe performance in any publicly available game.

In sum, the upcoming game support aligns with the following table.

| Planned NVIDIA Turing Feature Support for Games | |||||

| Game | Real Time Raytracing | Deep Learning Supersampling (DLSS) | Turing Advanced Shading | ||

| Ark: Survival Evolved | Yes | ||||

| Assetto Corsa Competizione | Yes | ||||

| Atomic Heart | Yes | Yes | |||

| Battlefield V | Yes | ||||

| Control | Yes | ||||

| Dauntless | Yes | ||||

| Darksiders III | Yes | ||||

| Deliver Us The Moon: Fortuna | Yes | ||||

| Enlisted | Yes | ||||

| Fear The Wolves | Yes | ||||

| Final Fantasy XV | Yes | ||||

| Fractured Lands | Yes | ||||

| Hellblade: Senua's Sacrifice | Yes | ||||

| Hitman 2 | Yes | ||||

| In Death | Yes | ||||

| Islands of Nyne | Yes | ||||

| Justice | Yes | Yes | |||

| JX3 | Yes | Yes | |||

| KINETIK | Yes | ||||

| MechWarrior 5: Mercenaries | Yes | Yes | |||

| Metro Exodus | Yes | ||||

| Outpost Zero | Yes | ||||

| Overkill's The Walking Dead | Yes | ||||

| PlayerUnknown Battlegrounds | Yes | ||||

| ProjectDH | Yes | ||||

| Remnant: From the Ashes | Yes | ||||

| SCUM | Yes | ||||

| Serious Sam 4: Planet Badass | Yes | ||||

| Shadow of the Tomb Raider | Yes | ||||

| Stormdivers | Yes | ||||

| The Forge Arena | Yes | ||||

| We Happy Few | Yes | ||||

| Wolfenstein II | Yes | ||||

111 Comments


Alistair - Sunday, September 16, 2018 - link

Except for the GTX 780 was the worse nVidia release ever, at a terrible price. Nice try ignoring every other card in the last 10 years.

markiz - Monday, September 17, 2018 - link

How can it be the same segment of the market, if the prices are, as you claim, double+? I mean, that claim makes no sense. It's not same segment. it's higher tier.

I mean, who is to say what kind of an advancement in GPU and games have people supposed to be getting?

Buy a 500$ card and max settings as far as they go and call it a day.

If you are

Ej24 - Monday, September 17, 2018 - link

The R&D for smaller manufacturing nodes hasn't scaled linearly. It's been almost exponential in terms of $/Sq.mm to develop each new node. That's why we need die shrinks to cram more transistors per square mm, and why some nodes were skipped because the economics didn't work out, like 20/22nm gpu's never existed. You're assuming that manufacturers have fixed costs that have never changed. The cost of a semiconductor fab, and R&D for new nodes has ballooned much much faster than inflation. That's why we've seen the number of fabs plummet with every new node. There used to be dozens of fabs in the 90nm days and before. Now it's looking like only 3 or 4 will be producing 7nm and below. It's just gotten too expensive for anyone to compete.

milkod2001 - Tuesday, September 18, 2018 - link

All those ridiculous prices started when AMD have announced 7970 at $550 plus. NV had mid range card to compete with it: GTX 680 at the same price. And then NV Titan high end cards were introduced at $1000 plus. Since then we pay past high end prices for mid range cards.

futrtrubl - Wednesday, September 19, 2018 - link

Just a bit on your math. You say $1 accounting for inflation of 2.7% over 18 years is now just less than $1.50. Maybe you are doing it as $1 * 18 * 1.027 to get that which is incorrect for inflation. It compounds, so it should be $1 * (1.027^18) which comes to ~$1.62. Likewise at 5% over 18 years it becomes $2.41.

Da W - Sunday, September 16, 2018 - link

Since when does inflation work in the semiconductor industry?

Holliday75 - Monday, September 17, 2018 - link

I was wondering the same thing. Smaller, faster, cheaper. For some reason here its the opposite....for 2 out of 3.

Yojimbo - Saturday, September 15, 2018 - link

"You must literally live under a rock while also being absurdly naive.It's never been this way in the 20 years that i've been following GPUs. These new RTX GPUs are ridiculously expensive, way more than ever, and the prices will not be changing much at all when there's literally zero competition. The GPU space right now is worse than it's ever been before in history."

No, if you go back and look at historical GPU prices, adjusted for inflation, there have been other times that newly released graphics cards were either as expensive or more expensive. The 700 series is the most recent example of cards that were as expensive as the 20 series is.

eddman - Saturday, September 15, 2018 - link

No.

https://i.imgur.com/ZZnTS5V.png

This chart was made last year based on 2017 dollar value, but it still applies. 20 series cards have the highest launch prices in the past 18 years by a large margin.

eddman - Saturday, September 15, 2018 - link

There is one card that surpasses that, 8800 Ultra. It was nothing more than a slightly OCed 8800 GTX. Nvidia simply released it to extract as much money as possible, and that was made possible because of lack of proper competition from ATI/AMD in that time period.