Examining Soft Machines' Architecture: An Element of VISC to Improving IPC

by Ian Cutress on February 12, 2016 8:00 AM EST

Posted in: CPUs, Arm, x86, Architecture, Soft Machines, IPC

Last week, Soft Machines announced that their 'VISC' architecture was available for licensing, following the announcement of the original concepts over a year ago. VISC, in a nutshell, is designed to increase the number of instructions per clock (IPC) that a single thread can process, which potentially makes it a very interesting design in an era where IPC gains are harder and harder to realize.

The concepts behind their new ‘VISC’ architecture, which splits the workload of a single linear thread across multiple cores, are intriguing and exciting. But as with any fundamental change in computer processing, they are subject to a large barrage of questions. We were invited to a presentation and call with the President and Chief Technical Officer Mohammed Abdallah and the VP of Marketing and Business Mark Casey, and I put to them a number of the questions on the lips of analysts.

Identifying Single Thread Performance Bottlenecks

Any discussion about processor performance over the last couple of decades has involved several factors: getting better performance through an increased power budget, raising the frequency, extracting instruction level parallelism (ILP), minimizing delays through better branch prediction, or adding more cores and improving thread level parallelism (TLP). Each of these methods has had varying degrees of success at increasing performance. Long-time readers will remember the Pentium 4 days of hitting a frequency and power wall, which then switched the focus to efficiency. Some tasks, like graphics, are inherently parallel and can take advantage of hundreds or thousands of cores, or the software can be optimized. However, most software code is single threaded by nature, and its performance relies on how fast the instructions can be processed within a single thread.

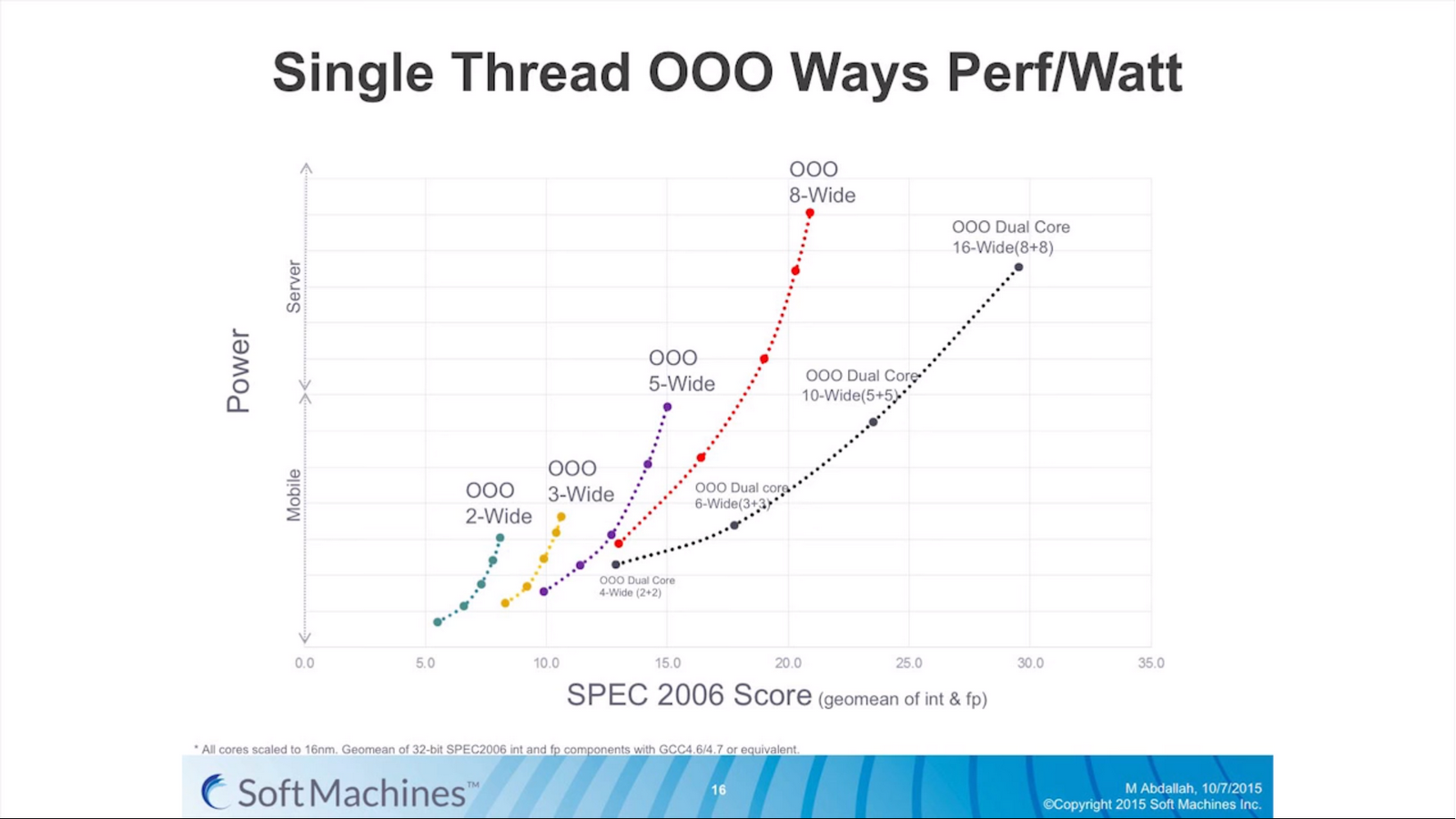

The main way of increasing performance, or in this case the instructions per unit frequency (instructions per clock, or IPC), is to expand the CPU architecture to allow more commands to be processed at once. Moving from a 3-wide out-of-order architecture to a 5-wide out-of-order architecture theoretically allows for a roughly 67% increase in instruction throughput if (and only if) the code is sufficiently dense to extract those operations, and the other features in the architecture can ensure all the operations are fed every clock cycle.
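To make the arithmetic concrete, here is a minimal sketch in Python. The function names and the `available_ilp` parameter are illustrative assumptions, not anything from Soft Machines or a real simulator. It computes the theoretical gain from widening a core and uses a deliberately crude model in which sustained IPC is capped by whichever is smaller: the issue width or the parallelism the code actually exposes.

```python
def peak_throughput_gain(old_width: int, new_width: int) -> float:
    """Fractional increase in peak instructions per clock when widening a core."""
    return (new_width - old_width) / old_width


def sustained_ipc(width: int, available_ilp: float) -> float:
    """Toy model (an assumption for illustration): sustained IPC is limited by
    both the issue width and the independent instructions the code exposes."""
    return min(float(width), available_ilp)


if __name__ == "__main__":
    gain = peak_throughput_gain(3, 5)
    print(f"Peak gain, 3-wide to 5-wide: {gain:.1%}")

    # Hypothetical ILP levels: the wider core only helps when ILP exceeds 3.
    for ilp in (2.0, 4.0, 6.0):
        print(f"ILP {ilp}: 3-wide -> {sustained_ipc(3, ilp)}, "
              f"5-wide -> {sustained_ipc(5, ilp)}")
```

In this model the 5-wide core delivers nothing extra for code with an ILP of 2, which is the "if (and only if)" caveat in numerical form. Real cores are further limited by fetch, decode, cache and branch behaviour that this sketch ignores.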

The problem with moving to a wider architecture is typically power and design complexity. As shown by various chip designs over the years, the wider the architecture, the more silicon has to be set aside for assets like buffers, re-order windows and caching. If there is a silicon budget and enough power headroom, we see designs like the six-wide Intel Skylake cores or the seven-wide NVIDIA Denver cores, which are able to extract peak performance when code is written to match the hardware. However, the potential downside of a wide architecture is that it remains inefficient for sets of instructions that only need a 2-wide or a 3-wide architecture. Alternatively, if multiple programs or threads want to use the hardware, then a single core is inaccessible to additional threads while the first thread is still in use (though this can be avoided somewhat by simultaneous multithreading or SMT which will let another thread have access when the first has encountered a stall such as waiting for L1/L2 memory).

As a result, modern designs also include a number of cores to handle the multiple thread/multiple program scenario. Generally speaking this works well, especially with high-performance cores, but it becomes an issue in itself when much of the world’s hardware is actually composed of many cores that have poor single threaded performance. Older Core 2 / Conroe systems, basic Bulldozer, or ARM Cortex-A7 designs are (still) widely used and often ship with multiple cores to allow for multiple programs at once. And while they can scale up with additional threads to the number of cores they offer, if any single or lightly-threaded software needs more performance, those extra cores go unused or are only minimally beneficial overall.

This brings us to Soft Machines, whose VISC architecture aims to change this.

Meet VISC

I should start by saying that despite the similarities to other architectural names, VISC is not an acronym. I asked directly and it is merely a noun for the purposes of trademarking. People can interpret it as ‘virtual instruction set computing’ or something similar, but the company doesn’t apply any acronym to the letters.

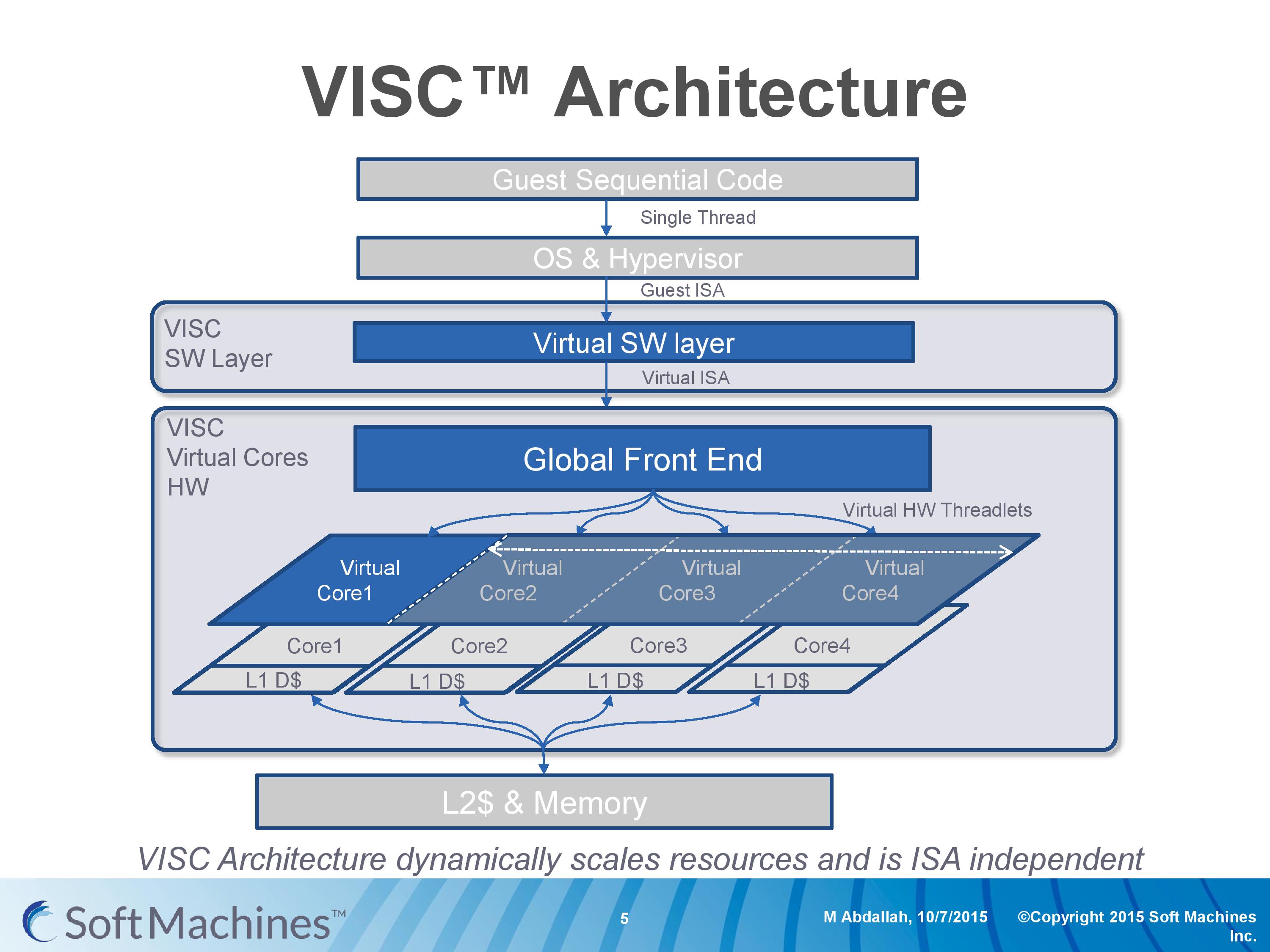

But a virtual instruction set is a good description here. For the most part, processor architectures were traditionally built around either CISC (complex) or RISC (reduced) instruction sets and execution models, while more modern designs (e.g. Intel Core) are increasingly a mix, or so-called ‘CRISC’ design. The difference between CISC and RISC boils down to the fact that simpler designs can be more power efficient, but complex designs can do more complicated things in fewer cycles. CRISC essentially meets the two paradigms in the middle in an attempt to gain the benefits of both, though not without inheriting some of the drawbacks as well. VISC, for lack of a better description, is a RISC design using a custom instruction set over a translation layer, which allows a single thread of operations to be dispatched over multiple physical cores. The base diagram looks something like this:

Here is an example of a VISC design with four physical cores. The design can handle four ‘virtual cores’ or threads as well, but what makes the VISC design different is that when a virtual core has a thread of instructions, it can use the resources of any physical core. Thus, if each physical core is a 4-wide out-of-order design and a thread running on a virtual core can utilize the resources of all four cores, essentially making a giant 16-wide design, then under VISC it can do so.
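The pooling idea can be sketched with a toy model in Python. Everything here is an assumption made for illustration: the `ViscConfig` and `allocate_slots` names, the per-cycle "demand" abstraction, and the round-robin policy are hypothetical and say nothing about how Soft Machines actually schedules work. The model also ignores cross-core latency, which is precisely the hard part of a real design.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class ViscConfig:
    """Hypothetical configuration matching the four-core example above."""
    physical_cores: int = 4
    width_per_core: int = 4

    @property
    def aggregate_width(self) -> int:
        # Total issue slots per cycle if every physical core is pooled.
        return self.physical_cores * self.width_per_core


def allocate_slots(config: ViscConfig, demand: List[int]) -> List[int]:
    """Share the pooled issue slots among virtual cores for one cycle.

    demand[i] is how many independent instructions virtual core i could
    issue this cycle. Slots are handed out one at a time, round-robin,
    so a single hungry thread cannot starve the others.
    """
    granted = [0] * len(demand)
    free = config.aggregate_width
    while free > 0:
        progressed = False
        for i, want in enumerate(demand):
            if free > 0 and granted[i] < want:
                granted[i] += 1
                free -= 1
                progressed = True
        if not progressed:  # every thread is satisfied
            break
    return granted


if __name__ == "__main__":
    cfg = ViscConfig()
    print(cfg.aggregate_width)            # pooled width of the example design
    print(allocate_slots(cfg, [20]))      # one thread, capped by pooled width
    print(allocate_slots(cfg, [2, 20]))   # a light thread plus a hungry one
```

With one thread the model hands it up to all 16 slots; with a light thread and a hungry thread, the hungry thread picks up whatever the light one leaves unused. Whether real code exposes enough independent instructions to fill such a pool is a separate question this sketch does not answer.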

This should instantly throw up a number of questions along the lines of ‘What!? How?! Why?! Power? Frequency? Performance? Efficiency? Complexity?’, and like many others in the industry, we had the same questions.

97 Comments

extide - Friday, February 12, 2016 - link

Because a compiler can only schedule instructions to the CPU's front end. This is scheduling of instructions to different ports on the back end of the CPU. The compiler can't tell the CPU what port an instruction goes down, the CPU picks that. The compiler only gets to pick what instructions are issued, and in what order, and of course, modern CPUs can even change that order if they deem it faster to do so.

Exophase - Friday, February 12, 2016 - link

To have any realistic chance of working, the threading speculation/detection has to have a large dynamic component (detecting threadlets as they become desirable at runtime) and has to have architectural support for very lightweight thread splitting, merging, and inter-thread communication.

That can't be provided by compilers targeting existing instruction sets.

AlexTi - Friday, February 12, 2016 - link

Thanks, I think I got the point finally. This looks similar to what the instruction scheduler currently does for execution units in a conventional CPU. Virtualization layer + CPUs will be a kind of very wide core. Right?

It was already noted in the article, making current CPUs wider is problematic and not universally beneficial. So this new engine should be much more efficient than current implementations.

Good thing is that we'll see eventually :)

Senti - Friday, February 12, 2016 - link

Bullshit. I have no idea how technically incompetent writers should be to reprint that marketing nonsense again and again.

First of all, this brings absolutely no advantages over existing fat core + SMT concept. More IPC per core with more pipelines is not done because it's hard to do without that 'virtual cores' nonsense, but simply because there are not enough actually independent instructions that can be automatically extracted from real code during parts where performance matters.

"Alternatively, if multiple programs or threads want to use the hardware, then a single core is inaccessible to additional threads while the first thread is still in use (though this can be avoided somewhat by simultaneous multithreading or SMT which will let another thread have access when the first has encountered a stall such as waiting for L1/L2 memory)." - total lies. That describes coarse-grained multithreading which is not very popular atm. For example, Intel HT allows usually 2 threads to execute simultaneously dynamically sharing pipelines of the same core all the time. POWER8 uses 8 'virtual' threads per core.

Why no one splits instructions from the same thread over several cores (other than the obvious reason that there are not enough independent instructions to split)? Almost quote from the text: "cross-core communication adds latency and reduces performance".

Instruction set emulation? Far from new concept. Why not popular? Reason is very simple: significant overhead. Try translating something non-trivial like AVX/NEON instructions to some generic internal instruction set.

Finally, the last point: everyone can draw cute performance graphs and huge numbers in marketing presentations, but how about giving actually working chips for independent reviews of performance and power efficiency on real code?

vladx - Sunday, February 14, 2016 - link

Skim the article again, there's a roadmap so let's see how things will go from here.

Exophase - Friday, February 12, 2016 - link

There's another big question with their power measurements. They take differences between idle and 1C and 1C and 2C to cancel out the static contribution of other peripherals. But this still ignores the dynamic contribution.

For example, we can look at Cortex-A72, where ARM claims that one core at 2.5GHz on the TSMC 16FF+ process will consume about 750 mW. In Kirin 950, the power consumption appears to be about 900 mW at 2.3GHz. Is ARM exaggerating or is Huawei's implementation inferior to ARM's expectations? The discrepancy can actually be pretty easily explained by losses in the PMIC/VRMs, the SoC's memory controller, and the DRAM - all components which use more power the more the CPU load increases.

This is especially a factor for wall measurements because they take into account an additional AC/DC converter. While it's possible that Soft Machines included these figures in their power estimations I doubt it since they didn't mention it, and like ARM it's more practical and beneficial for them to work with core power estimations only.

So there could easily be another 25+% that the non-VISC platforms are being penalized.

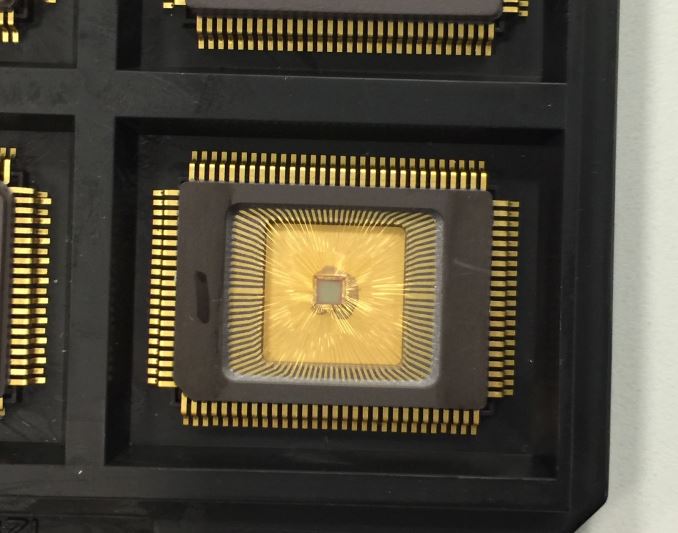

Something else that raises a red flag to me is the 16FF+ test chip. There are only 100 pins. When power, ground, and various control signals are accounted for, that leaves a very small interface either to a memory controller or memory (if the controller is integrated). Even a single channel 32-bit interface would be a hard fit. So does this chip really represent both realistic power consumption and realistic performance? I think they're trading one for the other on this one and that makes me question the applicability of the power numbers they've given for it.

Arnulf - Friday, February 12, 2016 - link

Since one cannot buy these "scaled" chips, IMHO it'd make more sense for SMI to publish performance per watt figures of real hardware and let the market decide whether their concept is attractive enough. Yes, Intel may have process node advantage, yes, different CPUs are targeting different performance and power profiles but at least it's a straight comparison and if VISC doesn't beat its entire competition at at least one metric then it's destined to fail anyway.

Oh and the remark in the article regarding "VISC advantage" because of it using twice the number of cores while running a single thread in tests - who cares as long as it comes out on top in performance per watt? If they can beat other CPUs by using more cores, kudos to them!

ppi - Saturday, February 13, 2016 - link

Regarding core count, I would direct you to the recent AT article on Android usage of multiple cores. The simplified conclusion may be that Android tends to utilise 4 cores pretty well.

In the real world, this significantly reduces the impact of distributing a single thread over multiple cores.

kgardas - Friday, February 12, 2016 - link

Interesting stuff, but to be honest, combining "simpler" cores into a more complex one is also done by software on SPARC64. At least Fujitsu mentions this in some of their Hot Chips presentations for SPARC64 VII. So you have a 4-core CPU with 4-wide cores and you can combine this by software (compiler) into 8-wide or more depending on your needs for instruction parallelism.

Another thing is that something like that is IIRC also supported by POWER8, where you do have a lot of duplicated resources, but not enough, so in case 1 thread is able to consume all core resources you may switch off 7 others. IIRC IBM's compilers contain some optimizations for this too.

Pity that you have mentioned Itanium only in this negative way. Honestly speaking Itanium design was really great and really pity that Intel stopped developing it and did not provide any OoO designs on this architecture. Whether Denver will be successful we will see, but NVIDIA still counts on it for some designs, which may be interesting in light of the fact that they have been using ARM's core (A57) for some time now and don't need Denver that much. Also automotive does not care if Denver is there or not, yet NVIDIA pushes it there, so I would bet they need to have a really good reason for it. Perhaps their VLIW is good for some special tasks...

So to me this whole thing looks like they are on another round for money.

Oxford Guy - Friday, February 12, 2016 - link

"Honestly speaking Itanium design was really great and really pity that Intel stopped developing it"

The market disagreed so, if you're right, it's a pity the market dictates product success to such a degree.