ASRock X99 WS-E/10G Motherboard Review: Dual 10GBase-T for Prosumers

by Ian Cutress on December 15, 2014 10:00 AM EST

Posted in: Motherboards, IT Computing, Intel, ASRock, Enterprise, X99, 10GBase-T

Testing the 10GBase-T

Many thanks to Brett Howse for his help!

As an indication of how far we are from mainstream adoption of 10GBase-T, our testing setup was not ready to receive it: we have no switches or other PCIe cards in house to test the ports. Apart from that, one of the downsides of testing network ports as a whole is that any test goes out of one machine and into another, meaning that any result will always be at the whims of the lowest common denominator between the two systems. The easiest way to test is therefore to loop the system back on itself. As the X540-BT2 is a dual port solution, this was ideal for the testing scenario.

We set up the system with ESXi and two Windows Server 2012 VMs, each allocated 8 threads, 16 GB of DRAM and one of the 10GBase-T ports with a custom IP. We then used LAN Speed Test to set up a server on one VM and a client on the other. LAN Speed Test allows us to simulate multiple clients through the same cable, probing our ports in a manner similar to a SOHO/SMB environment.

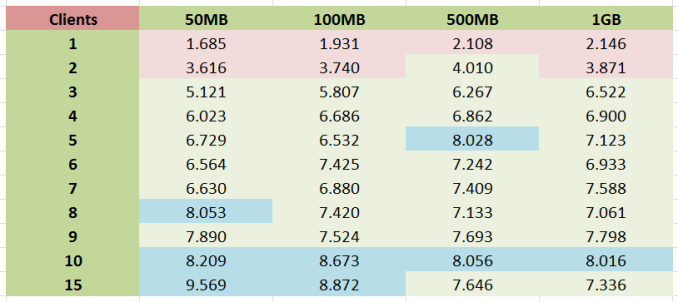

We organized a series of send/receive commands to go through the connections with differing numbers of streams and differing amounts of data per stream. The system was set to repeat for 10 minutes, and the peak transfer rate was recorded. Results shown are in Gbps.
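To make the shape of such a test concrete, the following is a minimal sketch of a multi-stream TCP throughput measurement. It is not how LAN Speed Test works internally and was not used for the results in this review. It assumes a loopback address on a single machine, and the names `run_streams`, `_send`, `_drain` and the 64 KB `CHUNK` size are purely illustrative. Numbers from it will not be comparable to the figures here, not least because Python threads add their own overhead.

```python
import socket
import threading
import time

CHUNK = 64 * 1024  # bytes per send/recv call (illustrative choice)


def _drain(conn, counts, idx):
    """Receive until the peer closes, recording how many bytes arrived."""
    total = 0
    with conn:
        while True:
            data = conn.recv(CHUNK)
            if not data:
                break
            total += len(data)
    counts[idx] = total


def _send(addr, nbytes):
    """Open one stream to addr and push nbytes of zero-filled payload."""
    payload = bytes(CHUNK)
    with socket.create_connection(addr) as s:
        sent = 0
        while sent < nbytes:
            n = min(CHUNK, nbytes - sent)
            s.sendall(payload[:n])
            sent += n


def run_streams(num_streams, bytes_per_stream, host="127.0.0.1"):
    """Return (total_bytes_received, gbps) for concurrent TCP streams."""
    server = socket.socket(socket.AF_INET, socket.SOCK_STREAM)
    server.bind((host, 0))  # port 0: let the OS pick a free port
    server.listen(num_streams)
    addr = server.getsockname()
    counts = [0] * num_streams

    start = time.perf_counter()
    senders = [
        threading.Thread(target=_send, args=(addr, bytes_per_stream))
        for _ in range(num_streams)
    ]
    for t in senders:
        t.start()

    receivers = []
    for i in range(num_streams):
        conn, _ = server.accept()
        t = threading.Thread(target=_drain, args=(conn, counts, i))
        t.start()
        receivers.append(t)

    for t in senders + receivers:
        t.join()
    elapsed = time.perf_counter() - start
    server.close()

    total = sum(counts)
    return total, total * 8 / elapsed / 1e9


if __name__ == "__main__":
    for streams in (1, 4, 10):
        total, gbps = run_streams(streams, 50_000_000)
        print(f"{streams} stream(s): {total} bytes, {gbps:.2f} Gbps")
```

Varying the two arguments mirrors the two axes of the test described above: the number of concurrent streams and the amount of data per stream. Running it between two hosts would require splitting the sending and receiving halves into separate programs.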

There are several key points to note with these results.

- Firstly, single client speed never broke 2.2 Gbps. This puts an absolute limit on individual point-to-point communication speed in our system setup.

- Next, with 4 to 9 clients the speed is fairly consistent between 6.7 and 8 Gbps, no matter what the size of the transfer is.

- Thus in order to get peak throughput, at least 10 concurrent connections need to be made. This is when 8+ Gbps is observed.

- With a small number of clients, a longer transfer results in higher overall speed. However, with a larger number of clients, faster transfers result in higher peak speed.
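To put the figures in the points above into more familiar units, here is a small conversion sketch. It assumes decimal units (1 Gbps = 1000 Mbps, 8 bits per byte) and a nominal 10 Gbps line rate, and it ignores protocol overhead. The helper names are illustrative only.

```python
LINK_GBPS = 10.0  # nominal 10GBase-T line rate (assumption: no overhead)


def gbps_to_mb_per_s(gbps):
    """Convert gigabits per second to megabytes per second (decimal units)."""
    return gbps * 1000 / 8


def link_utilization(gbps, link_gbps=LINK_GBPS):
    """Fraction of the nominal link rate a measured throughput represents."""
    return gbps / link_gbps


# Rates quoted in the results discussion
observed = [
    ("single client ceiling", 2.2),
    ("4-9 clients, low end", 6.7),
    ("4-9 clients, high end", 8.0),
]

for label, rate in observed:
    mb = gbps_to_mb_per_s(rate)
    util = link_utilization(rate)
    print(f"{label}: {mb:.1f} MB/s, {util:.0%} of line rate")
```

On these assumptions the 2.2 Gbps single client ceiling is about 275 MB/s, or 22% of the nominal link rate, while 8 Gbps corresponds to 1000 MB/s.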

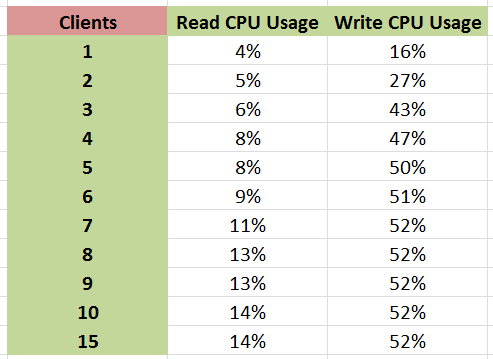

As part of the test, we also examined CPU usage when each stream was set for 1 GB transfers. CPU usage for a standard 1Gbit Ethernet port is normally minimal, although it is one of the factors that some companies like to point to for increased gaming rates. It rarely goes above a few percent on a quad core system, but with the X540-BT2 that changed, especially when the system was being hammered.

As the main VM alternated between reading and writing from the server VM, reading CPU usage peaked after 10 concurrent clients but write CPU usage shot up very quickly to 50% and stayed there. This might explain the slow increases in peak performance we observed, if the software simply ran out of threads and was only geared for four threads.

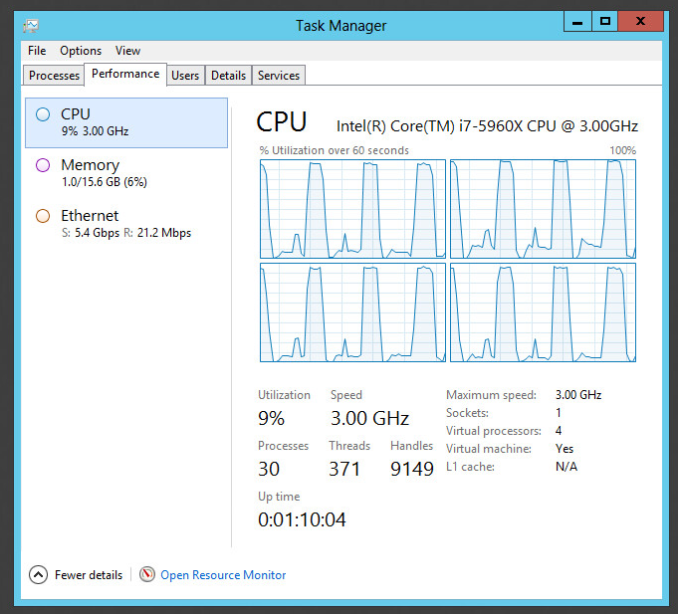

With a 4 core VM we saw 100% usage during writes; the image above shows the result from CPU performance monitoring.
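The arithmetic behind the four-thread hypothesis can be sketched as follows. This only shows that the two observations are consistent with each other. Aggregate utilization alone does not prove that exactly four threads were saturated, and the function name is illustrative.

```python
def busy_threads(total_threads, utilization):
    """Equivalent number of fully loaded threads for an aggregate CPU load."""
    return total_threads * utilization


# 8-thread VM sitting at 50% during writes
print(busy_threads(8, 0.50))

# 4-core VM sitting at 100% during writes
print(busy_threads(4, 1.00))
```

Both cases work out to the equivalent of four fully loaded threads, which fits the idea that the software was geared for four threads.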

This marks an interesting juncture, suggesting that a faster single threaded CPU could deliver better performance and that the X540-BT2 would be better attached to a Haswell/Broadwell platform, at least in our testing scenario. The truth of the matter is that fast connectivity technology runs on optimized FPGAs because general purpose CPUs cannot keep up with what is needed.

So where does this leave the X99 WS-E/10G? The best example I could propose is in that SOHO/SMB environment where the system is connected to a 10Gbit/1Gbit mixed switch that has 24+ clients that all need to access the system, either as a virtualized workspace, some form of storage or a joint streaming venture. As the former, it allows the rest of the office to use very basic machines and rely on the grunt of the virtualized environment to perform tasks.

Additional: As mentioned in the comments, this is almost the complete out-of-the-box scenario where the only things configured are the RAMDisk transfers and the multiple stream application, whereas most users might be limited by SSD speed. Jammrock in the comments has posted a list to help optimize the connection solely for individual point-to-point transfers, and it is worth a read.

45 Comments


koekkoe - Wednesday, December 17, 2014

One usage scenario: iSCSI storage, (especially when used also for booting) greatly benefits from 10G, because on 1G you're limited to 125MB/s, and big 16/24 disc arrays like EqualLogic can easily saturate also 10G bandwidth.

petar_b - Thursday, December 18, 2014

Xtreme11 used LSI SAS controller, it was awesome feature, I would happily pay for decent controller instead of slow SATA marvel ports - each time we add one more sata disk, overall disk transfer speed significantly drops. Thanks to LSI we can have 8 SSD SATA on SAS and they all perform 400MB/s even if used simultaneously. Marvel was dropping as low as 50MB/s with 8 SSD simultaneously used. What a lame.

akula2 - Thursday, December 18, 2014

I didn't prefer that board either -- not everything should be integrated from hardware scalability and fallback point of views. I'd prefer to build from a board such as Asus X99-E WS without filling up completely, and eventually choke it up!

atomt - Saturday, December 20, 2014

"It doesn't increase your internet performance"

I beg to differ. 10Gbps internet is available for residential connections in my area. :-D

AngelosC - Wednesday, January 7, 2015

Several things bother me with this review:

1) Did I miss it or is there really no mention on how the VMs were accessing the X540? Was it running SR-IOV? Or VMXNET3? What network drivers were loaded in the VMs?

2) 10GE being the major selling point of the mobo but it was only tested using "LAN Speed Test" with results summarized into a simple chart? I suggest you could have also tested using netperf or iperf, showing results also from other OSes like CentOS? Performance difference between UDP and TCP/IP streams? If you just create packets and send, then receive packets and discard (as in the case of iperf3), you probably wouldn't have run into problem of having to place a file on a RAM disk and some other issues. And then if you ran iperf on Linux, you could have ran on bare metal, taking the VMS overhead out of the equation.

3) For sake of correctness, would you please clarify whether it was a X540-AT2 or X540-BT2?

To be frank, this review is below the standard I'd expect from AnandTech.