Intel Xeon E5 Version 3: Up to 18 Haswell EP Cores

by Johan De Gelas on September 8, 2014 12:30 PM EST

Using a Mobile Architecture Inside a 145W Server Chip

About 15 months after the appearance of the Haswell core in desktop products (June 2013), the "optimized-for-mobile" Haswell architecture is now being adopted into Intel server products.

Left to right: LGA1366 (Xeon 5600), LGA2011 (Xeon E5-2600v1/v2) and LGA2011v3 (E5-2600v3) socket.

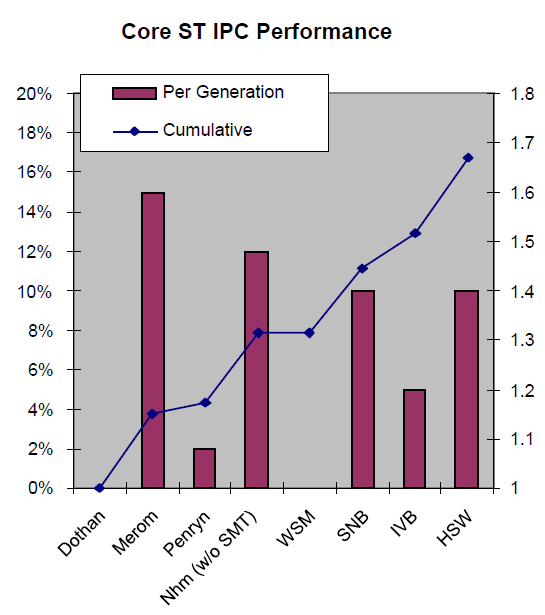

Haswell is Intel's fourth tock, a new architecture on the same successful 22nm process technology (the famous P1270 process) that was used for the Ivy Bridge EP or Xeon E5-2600 v2. Anand discussed the new Haswell architecture in great detail back in 2012, but as a refresher, let's quickly go over the improvements that the Haswell core brings.

Very little has changed in the front-end of the core compared to Ivy Bridge, with the exception of the usual branch prediction improvements and enlarged TLBs. As you might recall, it is the back-end, the execution part, that is largely improved in the Haswell architecture:

- Larger OoO Window (192 vs 168 entries)

- Deeper Load and Store buffers (72 vs 64, 42 vs 36)

- Larger scheduler (60 vs 54)

- The big splash: 8 instead of 6 execution ports: more execution resources for store address calculation, branches and integer processing.
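To put the list above in proportion, here is a minimal sketch that converts each "Haswell vs Ivy Bridge" pair into a relative increase. The dictionary keys and the `growth_percent` helper are illustrative names, and the mapping of "72 vs 64" to the load buffer and "42 vs 36" to the store buffer follows the order in which the list names them.

```python
# Structure sizes quoted in the list above, as (Haswell, Ivy Bridge) pairs.
# The labels are illustrative; the numbers come straight from the list.
STRUCTURES = {
    "OoO window entries": (192, 168),
    "load buffer entries": (72, 64),
    "store buffer entries": (42, 36),
    "scheduler entries": (60, 54),
    "execution ports": (8, 6),
}


def growth_percent(new, old):
    """Relative increase of `new` over `old`, in percent."""
    return (new - old) / old * 100.0


if __name__ == "__main__":
    for name, (haswell, ivy_bridge) in STRUCTURES.items():
        pct = growth_percent(haswell, ivy_bridge)
        print(f"{name}: {ivy_bridge} -> {haswell} (+{pct:.1f}%)")
```

The buffers and the scheduler grow by roughly 11 to 17%, while the port count grows by a third, which is why the extra ports stand out as the biggest change.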

All in all, Intel calculated that integer processing at the same clock speed should be about 10% better than on Ivy Bridge (Xeon E5-2600 v2, launched September 2013), 15-16% better than on Sandy Bridge (Xeon E5-2600, March 2012), and 27% better than on Nehalem (Xeon 5500, March 2009).
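These cumulative figures also imply how much each intermediate generation contributed. The sketch below derives the per-step gains by dividing the cumulative ratios; it assumes 15.5% as the midpoint of the quoted "15-16%" range, and the function name is purely illustrative.

```python
# Haswell's same-clock integer gain over each older core, as quoted above.
# 0.155 is an assumed midpoint of the "15-16%" range.
HASWELL_GAIN_OVER = {
    "Ivy Bridge": 0.10,
    "Sandy Bridge": 0.155,
    "Nehalem": 0.27,
}


def implied_step(gain_over_older, gain_over_newer):
    """Gain of the newer core over the older one, implied by Haswell's
    gain over each of them: (1 + g_older) / (1 + g_newer) - 1."""
    return (1.0 + gain_over_older) / (1.0 + gain_over_newer) - 1.0


if __name__ == "__main__":
    ivy_over_sandy = implied_step(
        HASWELL_GAIN_OVER["Sandy Bridge"], HASWELL_GAIN_OVER["Ivy Bridge"]
    )
    sandy_over_nehalem = implied_step(
        HASWELL_GAIN_OVER["Nehalem"], HASWELL_GAIN_OVER["Sandy Bridge"]
    )
    print(f"Ivy Bridge over Sandy Bridge: {ivy_over_sandy:.1%}")
    print(f"Sandy Bridge over Nehalem:    {sandy_over_nehalem:.1%}")
```

Under that assumption, Ivy Bridge comes out about 5% ahead of Sandy Bridge and Sandy Bridge about 10% ahead of Nehalem, so the Haswell step is comparable to the larger of the previous steps.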

Even better performance improvements can be achieved by recompiling software and using the AVX2 SIMD instructions. The original AVX ISA extension was mostly about speeding up floating point intensive workloads, but AVX2 makes the SIMD integer instructions capable of working with 256-bit registers.
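As a rough illustration of what 256-bit integer SIMD means in practice, the sketch below counts how many integer elements fit in one register at each element width, comparing 128-bit SSE registers with 256-bit AVX2 registers. It is plain arithmetic with no intrinsics involved, and the `lanes` helper is a hypothetical name.

```python
def lanes(register_bits, element_bits):
    """Number of elements of `element_bits` that fit in one SIMD register."""
    if register_bits % element_bits != 0:
        raise ValueError("element width must divide the register width")
    return register_bits // element_bits


if __name__ == "__main__":
    for element_bits in (8, 16, 32, 64):
        sse = lanes(128, element_bits)   # 128-bit integer SIMD (SSE)
        avx2 = lanes(256, element_bits)  # 256-bit integer SIMD (AVX2)
        print(f"{element_bits:2d}-bit integers: {sse:2d} per SSE register, "
              f"{avx2:2d} per AVX2 register")
```

At every element width the number of integers processed per instruction doubles, which is the upper bound on the speedup that recompiled integer code can expect from the wider registers alone.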

Unfortunately, in a virtualized environment, these ISA extensions are sometimes more curse than blessing. Running AVX/SSE (and other ISA extensions) code can disable the best virtualization features such as high availability, load balancing, and live migration (vMotion). Therefore, administrators will typically force CPUs to "keep quiet" about their newest ISA extensions (VMware EVC). So if you want to integrate a Haswell EP server inside an existing Sandy Bridge EP server cluster, none of the new features that were absent from Sandy Bridge EP, including AVX2, will be available. The result is that in virtualized clusters, ISA extensions are rarely used.
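The "keep quiet" behaviour can be pictured as a set intersection: the cluster exposes only the features that every host supports. The sketch below is a simplified model, not VMware's actual EVC implementation; the host names are hypothetical and the feature sets are abbreviated to a handful of well-known extensions.

```python
# Simplified, abbreviated feature sets (assumption: real CPUs expose many
# more flags, and EVC works with predefined baselines, not ad hoc sets).
HOST_FEATURES = {
    "sandy-bridge-ep-host": {"sse4.2", "aes-ni", "avx"},
    "haswell-ep-host": {"sse4.2", "aes-ni", "avx", "avx2", "fma", "bmi1", "bmi2"},
}


def cluster_baseline(hosts):
    """Features every host supports, i.e. what guests are allowed to see."""
    if not hosts:
        return set()
    return set.intersection(*hosts.values())


def masked_features(host, hosts):
    """Features the given host has but must hide from guests."""
    return hosts[host] - cluster_baseline(hosts)


if __name__ == "__main__":
    print("baseline:", sorted(cluster_baseline(HOST_FEATURES)))
    print("hidden on Haswell EP:",
          sorted(masked_features("haswell-ep-host", HOST_FEATURES)))
```

In this model the Haswell EP host hides AVX2, FMA and the bit manipulation extensions, so a guest can migrate to the Sandy Bridge EP host without ever having seen an instruction that host lacks.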

Instead, AVX2 code will typically run on a "native" OS. The best known use of AVX2 code is inside video encoders. However, the technology might still prove to be more useful to enterprises that don't work with pixels but with business data. Intel has demonstrated that the AVX2 instructions can also be used for accelerating the compression of data inside in-memory databases (SAP HANA, Microsoft Hekaton), so the integer flavor of AVX2 might become important for fast and massive data mining applications.

Last but not least, the new bit field manipulation instructions and the use of 256-bit registers can speed up quite a few cryptographic algorithms. Large websites will probably be the datacenter application that benefits most quickly from AVX2. Simply using the right libraries might speed up RSA-2048 (opening a secure connection), SHA-256 (hashing), and AES-GCM. We will discuss this in more detail in our performance review.

Floating point

Floating point code should benefit too, as Intel has finally included Fused Multiply Add (FMA) instructions. Peak FLOP performance is doubled once again. This should benefit a whole range of HPC applications, which also tend to be recompiled much quicker than the traditional server applications. The L1 and L2 cache bandwidth has also been doubled to better cope with the needs of AVX2 instructions.
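The doubling follows from simple arithmetic: an FMA counts as two floating point operations per lane, and Haswell can issue two 256-bit FMAs per cycle, where Ivy Bridge could issue one 256-bit add and one 256-bit multiply. The sketch below works this out for double precision. The 2.0 GHz clock is an assumed, illustrative value rather than the specification of any particular SKU, and the function names are hypothetical.

```python
def dp_flops_per_cycle(vector_bits, fp_pipes, flops_per_lane):
    """Peak double-precision FLOPs per cycle for one core.

    vector_bits:    SIMD register width
    fp_pipes:       vector FP instructions issued per cycle
    flops_per_lane: 1 for a plain add or multiply, 2 for a fused multiply-add
    """
    lanes = vector_bits // 64  # 64-bit doubles per register
    return lanes * fp_pipes * flops_per_lane


# Ivy Bridge: one 256-bit add pipe plus one 256-bit multiply pipe.
IVY_BRIDGE_DP = dp_flops_per_cycle(256, fp_pipes=2, flops_per_lane=1)
# Haswell: two 256-bit FMA pipes.
HASWELL_DP = dp_flops_per_cycle(256, fp_pipes=2, flops_per_lane=2)


def peak_gflops(cores, clock_ghz, flops_per_cycle):
    """Theoretical peak in GFLOPS; sustained throughput is always lower."""
    return cores * clock_ghz * flops_per_cycle


if __name__ == "__main__":
    assumed_clock_ghz = 2.0  # assumption for illustration only
    print("Ivy Bridge DP FLOPs/cycle/core:", IVY_BRIDGE_DP)
    print("Haswell DP FLOPs/cycle/core:   ", HASWELL_DP)
    print("18 Haswell cores at the assumed clock:",
          peak_gflops(18, assumed_clock_ghz, HASWELL_DP), "GFLOPS")
```

These are theoretical peaks that only code made up almost entirely of FMAs can approach, which is why the doubled L1 and L2 bandwidth matters for keeping the units fed.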

85 Comments


bsd228 - Friday, September 12, 2014 - link

Now go price memory for M class Sun servers...even small upgrades are 5 figures and going 4 years back, a mid sized M4000 type server was going to cost you around 100k with moderate amounts of memory. And take up a large portion of the rack. Whereas you can stick two of these 18 core guys in a 1U server and have 10 of them (180 cores) for around the same sort of money.

Big iron still has its place, but the economics will always be lousy.

platinumjsi - Tuesday, September 9, 2014 - link

ASRock are selling boards with DDR3 support, any idea how that works?

http://www.asrockrack.com/general/productdetail.as...

TiGr1982 - Tuesday, September 9, 2014 - link

Well... ASRock is generally famous for "marrying" different gen hardware.

But here, since this is about DDR RAM, governed by the CPU itself (because the memory controller is inside the CPU), my only guess is that Xeon E5 v3 may have a dual-mode memory controller (supporting either DDR4 or DDR3), similar to what Phenom II had back in 2009-2011, which supported either DDR2 or DDR3, depending on where you plugged it in.

If so, then probably just the performance of E5 v3 with DDR3 may be somewhat inferior in comparison with DDR4.

alpha754293 - Tuesday, September 9, 2014 - link

No LS-DYNA runs? And yes, for HPC applications, you actually CAN have too many cores (because you can't keep the working cores pegged with work/something to do, so you end up with a lot of data migration between cores, which is bad, since moving data means that you're not doing any useful work ON the data). And how you decompose the domain (for both LS-DYNA and CFD) makes a HUGE difference on total runtime performance.

JohanAnandtech - Tuesday, September 9, 2014 - link

No, I hope to get that one done in the more Windows/ESXi oriented review.

Klimax - Tuesday, September 9, 2014 - link

Nice review. Next stop: Windows Server. (And MS-SQL..)

JohanAnandtech - Tuesday, September 9, 2014 - link

Agreed. PCIe Flash and SQL server look like a nice combination to test these new Xeons.

TiGr1982 - Tuesday, September 9, 2014 - link

Xeon 5500 series (Nehalem-EP): up to 4 cores (45 nm)

Xeon 5600 series (Westmere-EP): up to 6 cores (32 nm)

Xeon E5 v1 (Sandy Bridge-EP): up to 8 cores (32 nm)

Xeon E5 v2 (Ivy Bridge-EP): up to 12 cores (22 nm)

Xeon E5 v3 (Haswell-EP): up to 18 cores (22 nm)

So, in this progression, core count increases by 50% (1.5 times) almost each generation.

So, what's gonna be next:

Xeon E5 v4 (Broadwell-EP): up to 27 cores (14 nm) ?

Maybe four rows with 5 cores and one row with 7 cores (4 x 5 + 7 = 27) ?

wallysb01 - Wednesday, September 10, 2014 - link

My money is on 24 cores.

SuperVeloce - Tuesday, September 9, 2014 - link

What's the story with 2637v3? Only 4 cores and the same frequency and $1k price as the 6-core 2637v2? By far the most pointless CPU on the list.