Free Cooling: the Server Side of the Story

by Johan De Gelas on February 11, 2014 7:00 AM EST

Posted in: Cloud Computing, IT Computing, Intel, Xeon, Ivy Bridge EP, Supermicro

Hurdles for Free Cooling

It is indeed a lot easier for Facebook, Google and Microsoft to operate data centers with "free cooling". After all, the servers inside those data centers are basically "expendable"; there is no need to make sure that an individual server does not fail. The applications running on top of those servers can handle an occasional server failure easily. That is in sharp contrast with a data center that hosts servers of hundreds of different customers, where the availability of a small server cluster is of the utmost importance and regulated by an SLA (Service Level Agreement). The internet giants also have full control over both facilities and IT equipment.

There are other concerns and humidity is one of the most important ones. Too much humidity and your equipment is threatened by condensation. Conversely, if the data center air is too dry, electrostatic discharge can wreak havoc.

Still, the humidity of the outside air is not a problem for free cooling, as many data centers can be outfitted with a water-side economizer. Cold water replaces the refrigerant, and pumps and a closed circuit replace the compressor. The hot return water passes through the outdoor pipes of the heat exchangers. If the outdoor air is cold enough, the water-side system can cool the water back to the desired temperature.

Google's data center in Belgium uses water-side cooling so well that it does not need any additional cooling. (source: Google)

Most "free cooling" systems are "assisting cooling systems". In many situations they do not perform well enough to guarantee, all year round, the typical 20-25 °C (68-77 °F) inlet temperature that CRACs can offer.
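The difference between full and "assisting" free cooling can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the function name `economizer_mode`, the fixed `approach_c` (how much warmer than the outdoor air the cooled water ends up) and the example temperatures are hypothetical and do not come from any vendor's control logic.

```python
def economizer_mode(outdoor_c, supply_setpoint_c, return_c, approach_c=4.0):
    """Classify how much a water-side economizer can contribute.

    Assumed model: the outdoor heat exchanger can cool the water
    down to the outdoor air temperature plus a fixed approach.
    """
    # Coldest water temperature reachable with outdoor air alone (assumption).
    achievable_c = outdoor_c + approach_c
    if achievable_c <= supply_setpoint_c:
        # Outdoor air alone brings the water back to the setpoint.
        return "free"
    if achievable_c < return_c:
        # Water is pre-cooled, but mechanical cooling must finish the job.
        return "partial"
    # Outdoor air is too warm to remove any heat from the return water.
    return "mechanical"


if __name__ == "__main__":
    # Hypothetical 18 °C supply setpoint and 24 °C return water.
    for outdoor in (5.0, 16.0, 30.0):
        print(outdoor, economizer_mode(outdoor, 18.0, 24.0))
```

The "partial" branch corresponds to the assisting role described above: the economizer lowers the load on the CRACs without replacing them.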

All you need is ... a mild climate

But do we really need to guarantee a rather low 20-25 °C inlet temperature for our IT equipment all year round? It is a very important question, as data centers in large parts of the world can be cooled with free cooling if the server inlet temperature does not need to be so low.

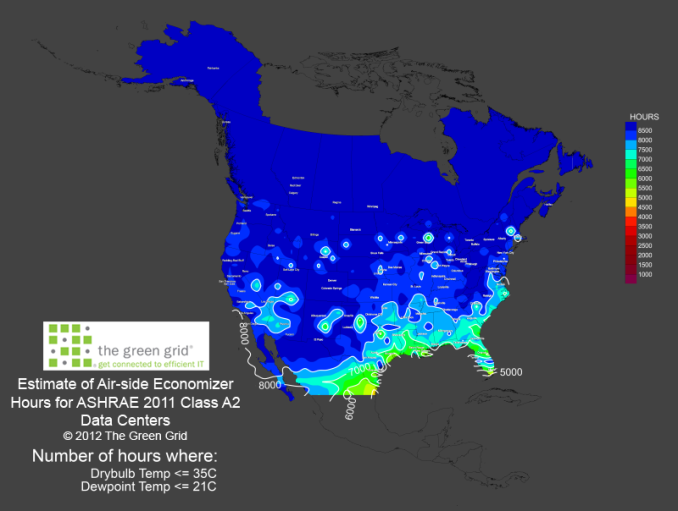

The Green Grid, a non-profit organization, uses data from the Weatherbank to calculate the amount of time that a data center can use air-side "free cooling" to keep the inlet temperature below 35 °C. To make this more visual, they publish the data as color-coded maps. Dark blue means that air-side economizers can be efficient for 8500 hours per year, which is basically year round. Here is the map of North America:

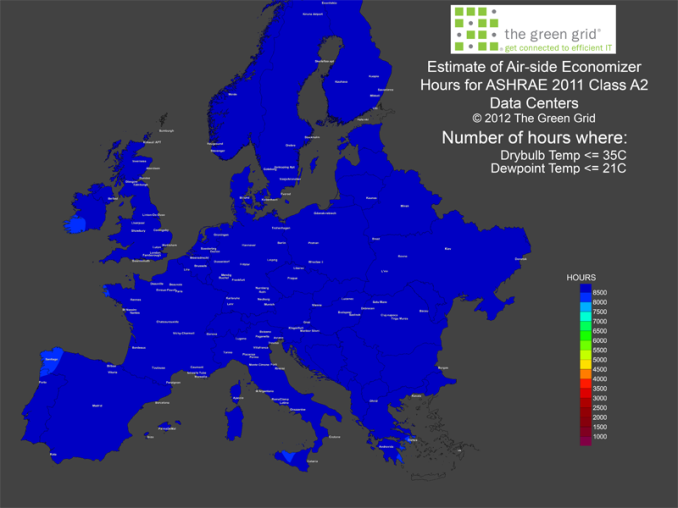

About 75% of North America can use free cooling if the maximum inlet temperature is raised to 35 °C (95 °F). In Europe, the situation is even better:

Although I have my doubts about the accuracy of the map (the south of Spain and Greece see a lot more hot days than the south of Ireland), it looks like 99% of Europe can make use of free cooling. So how do our current servers cope with an inlet temperature of up to 35 °C?

48 Comments


bobbozzo - Tuesday, February 11, 2014 - link

"The main energy gobblers are the CRACs"

Actually, the IT equipment (servers & networking) uses more power than the cooling equipment.

ref: http://www.electronics-cooling.com/2010/12/energy-...

"The IT equipment usually consumes about 45-55% of the total electricity, and total cooling energy consumption is roughly 30-40% of the total energy use"

Thanks for the article though.

JohanAnandtech - Wednesday, February 12, 2014 - link

That is the whole point, isn't it? IT equipment uses power to be productive; everything else supports the IT equipment and is thus overhead that you have to minimize. Of the facility power, CRACs are the most important power gobblers.

bobbozzo - Tuesday, February 11, 2014 - link

So, who is volunteering to work in a datacenter with 35-40C cool aisles and 40-45C hot aisles?

Thud2 - Wednesday, February 12, 2014 - link

80,0000, that sounds like a lot.

CharonPDX - Monday, February 17, 2014 - link

See also Intel's long-term research into it, at their New Mexico data center: http://www.intel.com/content/www/us/en/data-center...

puffpio - Tuesday, February 18, 2014 - link

On the first page you mention "The "single-tenant" data centers of Facebook, Google, Microsoft and Yahoo that use "free cooling" to its full potential are able to achieve an astonishing PUE of 1.15-1."

This article says that Facebook has achieved a PUE of 1.07 (https://www.facebook.com/note.php?note_id=10150148...)

lwatcdr - Thursday, February 20, 2014 - link

So I wonder when Google will build a data center in, say, North Dakota. Combine the ample wind power with the cold and it looks like a perfect place for a green data center.

Kranthi Ranadheer - Monday, April 17, 2017 - link

Hi guys, does anyone by chance have recorded data on temperature and processor speed in a server room? Or can someone give me the highest and lowest values measured in a server room, for temperature versus processor speed?