Inside AnandTech 2013: The Hardware

by Anand Lal Shimpi on March 12, 2013 9:40 AM EST - Posted in IT Computing, CPUs, Intel, Enterprise

By the end of 2010 we realized two things. First, the server infrastructure that powered AnandTech was getting very old, and we were seeing an increase in component failures that led to higher-than-desired downtime. Second, our growth over the previous years had begun to tax our existing hardware. We needed an upgrade.

Ever since we started managing our own infrastructure back in the late '90s, the bulk of our hardware has been provided by our sponsors in exchange for exposure on the site. It also gives them a public case study, which isn't always possible depending on who you're selling to. We always determine which parts we go after, and the rules of engagement are simple: if there's a failure, it's a public one. That stipulation tends to worry some, and we'll get to that in one of the other posts.

These days there's a tempting alternative: deploying our infrastructure in the cloud. Since our hardware costs are low (to us), however, doing it internally still makes more sense. It also allows us to run performance analysis and create enterprise-level benchmarks using our own environment.

Spinning up new cloud instances at Amazon did have its appeal, though. We needed to embrace virtualization and the ease-of-deployment benefits that came with it. The days of one box per application were over, and we had more than enough hardware to begin consolidating multiple services per box.

We actually moved to our new hardware and software infrastructure last year. With everything going on last year, I never got the chance to talk about what our network ended up looking like. The debut of our redesign gives me another chance to do just that. What follows is a series of quick posts looking at the storage, CPU and power characteristics of our new environment compared to our old one.

To put things in perspective: the last major hardware upgrade we did at AnandTech was back in the 2006-2007 timeframe. Our Forums database server had 16 AMD Opteron cores inside; it's just that we needed eight dual-core CPUs to get there. The world has changed over the past several years, and our new environment is much higher performing, more power efficient and definitely more reliable.

In this post I want to go over, at a high level, the hardware behind the current phase of our infrastructure deployment. In the subsequent posts (including another one that went live today) I'll offer some performance and power comparisons, as well as some insight into why we picked each component.

I'd also like to take this opportunity to thank Ionity, the host of our infrastructure for the past 12 months. We've been through a number of hosts over the years, and Ionity marks the best yet. Performance is typically pretty easy to guarantee when it comes to any hosting provider at a decent datacenter, but it's really service, response time and competence of response that become the differentiating factors for us. Ionity delivered on all fronts, which is why we're there and plan on continuing to be so for years to come.

Out with the Old

Our old infrastructure featured more than 20 servers, a combination of 1U dual-core application servers and some large 4U-5U database servers. We had to rely on external storage devices to get the number of spindles we needed to deliver the performance our workload demanded. Oh, how times have changed.

For the new infrastructure we settled on a total of 12 boxes, 6 of which are deployed now and another 6 that we'll likely deploy over the next year for geographic diversity as well as to offer additional capacity. That alone gives you an idea of the increase in compute density that we have today vs. 6 years ago: what once required 20 servers and more than a single rack can easily be done in 6 servers and half a rack (at lower power consumption too).

Of the six, a single box currently acts as a spare - the remaining five are divided as follows: two are on database duty, while the remaining three act as our application servers.

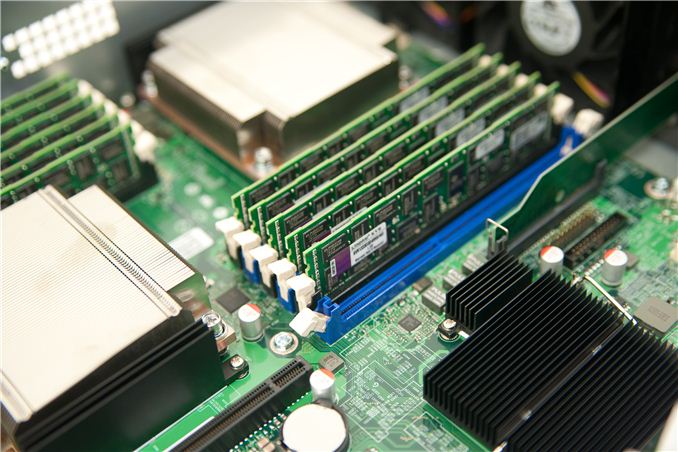

Since we were bringing our own hardware, we needed relatively barebones server solutions. We settled on Intel's SR2625, a fairly standard 2U rackmount with support for the Intel Xeon L5640 CPUs (32nm Westmere Xeons) we would be using. Each box is home to two of these processors, each of which features six cores and a 12MB L3 cache.
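To make the density comparison concrete, here is a back-of-the-envelope sketch that only multiplies out figures stated in this post: two six-core L5640s per box, six boxes deployed, twelve planned, and the old Forums database server's eight dual-core Opterons. The constant and function names are hypothetical, and the sketch assumes all twelve planned boxes share the same CPU configuration, which the post doesn't actually confirm. Note that these are raw physical core counts, not a performance comparison, since per-core performance differs a lot between a Westmere Xeon and a 2006-era Opteron.

```python
# Back-of-the-envelope core counts, using only figures from the post.
# Physical cores only: this says nothing about per-core performance,
# which differs a lot between Westmere Xeons and 2006-era Opterons.

SOCKETS_PER_BOX = 2    # two Xeon L5640s per SR2625
CORES_PER_SOCKET = 6   # six cores per L5640
BOXES_DEPLOYED = 6     # five in service plus one spare
BOXES_PLANNED = 12     # assumption: the second six use the same CPUs

# Old Forums database server: eight dual-core Opterons in a single chassis.
OLD_FORUMS_DB_CORES = 8 * 2


def physical_cores(boxes: int) -> int:
    """Physical cores (not hardware threads) across `boxes` dual-socket servers."""
    return boxes * SOCKETS_PER_BOX * CORES_PER_SOCKET


if __name__ == "__main__":
    print(f"Cores per box:           {physical_cores(1)}")
    print(f"Cores deployed today:    {physical_cores(BOXES_DEPLOYED)}")
    print(f"Cores at full build-out: {physical_cores(BOXES_PLANNED)}")
    print(f"Old Forums DB server:    {OLD_FORUMS_DB_CORES}")
```

A single 2U box ends up with 12 physical cores, close to the 16 that once took eight CPU sockets in a much larger chassis.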

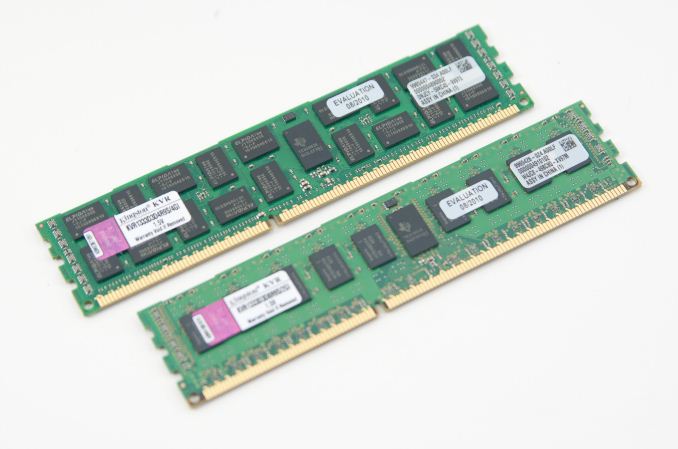

Each database server features 48GB of Kingston DDR3-1333, while the application servers use 36GB each. At the time we specced out our consolidation plans we didn't need a ton of memory, but going forward it's likely something we'll have to address.

When it came to storage, the decision was made early on to go all solid-state. The problem we ran into there was that most SSD makers at the time didn't want to risk a public failure of their drives in our environment. Our first choice declined to participate because of our requirement to make any serious component failure public. Things are different today, as the overall quality of SSDs has improved tremendously, but back then we were left with one option: Intel.

Our application servers use 160GB Intel X25-M G2s, while our database servers use 64GB Intel X25-Es. The world has since moved away from SLC NAND in favor of enterprise-grade MLC, but at the time the X25-Es were our best bet to guarantee write endurance for our database servers. As I later discovered, heavily overprovisioned X25-M G2s would've been fine for a few years, but even I wanted to be more cautious back then.

The application servers each use six X25-M G2s, while the database servers use six X25-Es. To keep the environment simple, I opted against any external RAID controllers; everything here is driven by the on-board Intel SATA controllers. We need multiple SSDs not for performance reasons but to get the capacity we need. Given that we migrated from a many-drive HDD array, the fact that we only need a couple of SSDs' worth of performance per box isn't too surprising.
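Since capacity rather than performance is what drives the drive count, it helps to tally what each role actually has. The sketch below is a hypothetical inventory built only from the numbers in this post (box counts, memory per box, drive model, count and size). The post doesn't say how the six drives in each box are arranged, so the figures are raw advertised capacity; any mirroring or parity layout would leave noticeably less usable space. The spare box is left out because its configuration isn't described.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ServerRole:
    name: str
    boxes: int       # servers currently in this role
    ram_gb: int      # memory per server
    ssd_model: str
    ssds: int        # drives per server
    ssd_gb: int      # advertised capacity per drive


# Figures from the post; the spare box is omitted because its
# configuration isn't described.
ROLES = [
    ServerRole("database", boxes=2, ram_gb=48, ssd_model="Intel X25-E", ssds=6, ssd_gb=64),
    ServerRole("application", boxes=3, ram_gb=36, ssd_model="Intel X25-M G2", ssds=6, ssd_gb=160),
]


def raw_flash_per_box(role: ServerRole) -> int:
    """Advertised flash per server, before any RAID, spare area or filesystem overhead."""
    return role.ssds * role.ssd_gb


if __name__ == "__main__":
    for role in ROLES:
        per_box = raw_flash_per_box(role)
        print(f"{role.name:<12} {role.boxes} x ({role.ram_gb}GB RAM, "
              f"{role.ssds} x {role.ssd_gb}GB {role.ssd_model} = {per_box}GB raw)")
    total_ram = sum(r.boxes * r.ram_gb for r in ROLES)
    total_flash = sum(r.boxes * raw_flash_per_box(r) for r in ROLES)
    print(f"In-service totals: {total_ram}GB RAM, {total_flash}GB raw flash")
```

Each database server works out to 384GB of raw flash and each application server to 960GB, which shows why capacity, not throughput, is the limiting factor.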

Storage capacity is our biggest constraint today. We actually had to move our image hosting to Ionity's cloud environment due to our current capacity limits. NAND lithographies have shrunk dramatically since the days of the X25-E and X25-M, so we'll likely move image hosting back onto a few very-high-capacity drives this year.

That's the high-level overview of what we're running on. I also posted some performance data on the improvement we saw in going to SSDs in our environment here.

17 Comments


Shadowmaster625 - Wednesday, March 13, 2013

Those X25-Es are really coming down in price on the second-hand market. I guess a lot of people must be upgrading and putting them up for sale on sites like eBay.

lwatcdr - Thursday, March 14, 2013

Why Westmere over Sandy Bridge-E?

MrCoyote - Sunday, March 17, 2013

Anand, can you elaborate on how you were able to get sponsors for the hardware and the required financing when starting AnandTech back in the '90s? I want to start my own film post-production studio, but the banks in my area are uncooperative and won't provide any financing, at least not the large amount I need to start this kind of business and buy all the equipment. I also need to figure out how to get clients from Hollywood and local film crews pointed to my studio.

Any suggestions would be appreciated.

SatishJ - Friday, March 22, 2013

Can you please share some statistics about the website load (concurrent users, page views per day, etc.)?