Analysis of the new Apple iPad

by Anand Lal Shimpi on March 9, 2012 1:37 AM EST

Yesterday Apple unveiled its third generation iPad, simply called the new iPad, at an event in San Francisco. The form factor remains mostly unchanged with a 9.7-inch display, however the new device is thicker at 9.4mm vs. 8.8mm for its predecessor. The added thickness was necessary to support the iPad's new 2048 x 1536 Retina Display.

Tablet Specification Comparison

| | ASUS Transformer Pad Infinity | Apple's new iPad (2012) | Apple iPad 2 |
| --- | --- | --- | --- |
| Dimensions | 263 x 180.8 x 8.5mm | 241.2 x 185.7 x 9.4mm | 241.2 x 185.7 x 8.8mm |
| Display | 10.1-inch 1920 x 1200 Super IPS+ | 9.7-inch 2048 x 1536 IPS | 9.7-inch 1024 x 768 IPS |
| Weight (WiFi) | 586g | 652g | 601g |
| Weight (4G LTE) | 586g | 662g | 601g |
| Processor (WiFi) | 1.6GHz NVIDIA Tegra 3 T33 (4 x Cortex A9) | Apple A5X (2 x Cortex A9, PowerVR SGX 543MP4) | 1GHz Apple A5 (2 x Cortex A9, PowerVR SGX 543MP2) |
| Processor (4G LTE) | 1.5GHz Qualcomm Snapdragon S4 MSM8960 (2 x Krait) | Apple A5X (2 x Cortex A9, PowerVR SGX 543MP4) | 1GHz Apple A5 (2 x Cortex A9, PowerVR SGX 543MP2) |
| Connectivity | WiFi, Optional 4G LTE | WiFi, Optional 4G LTE | WiFi, Optional 3G |
| Memory | 1GB | 1GB | 512MB |
| Storage | 16GB - 64GB | 16GB - 64GB | 16GB |
| Battery | 25Whr | 42.5Whr | 25Whr |
| Pricing | $599 - $799 est | $499 - $829 | $399, $529 |

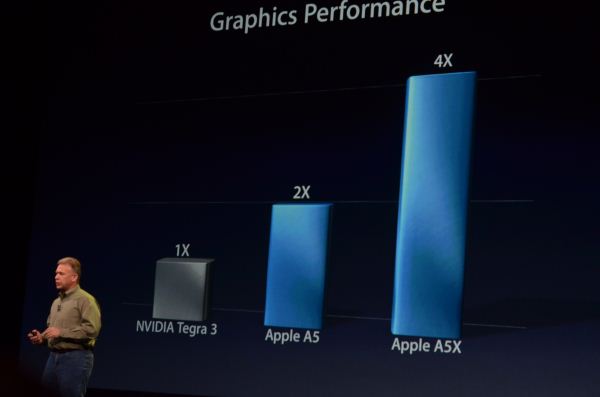

Driving the new display is Apple's A5X SoC. Apple hasn't been too specific about what's inside the A5X other than to say it features "quad-core graphics". Upon further prodding Apple did confirm that there are two CPU cores inside the SoC. It's safe to assume that there are still a pair of Cortex A9s in the A5X but now paired with a PowerVR SGX543MP4 instead of the 543MP2 used in the iPad 2. The chart below gives us an indication of the performance Apple expects to see from the A5X's GPU vs what's in the A5:

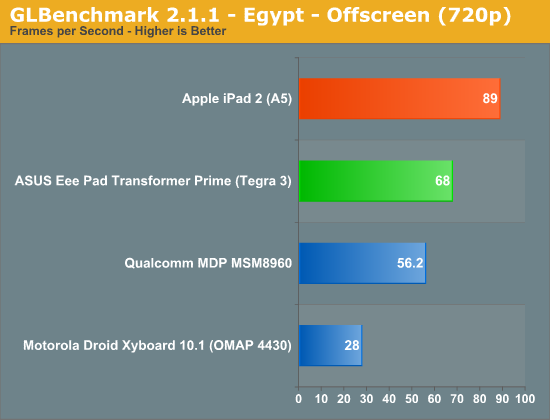

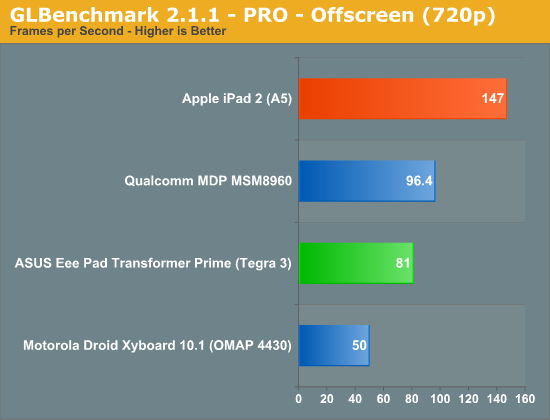

Apple ran the PowerVR SGX 543MP2 in its A5 SoC at around 250MHz, which puts it at 16 GFLOPS of peak theoretical compute horsepower. NVIDIA claims the GPU in Tegra 3 is clocked higher than Tegra 2, which was around 300MHz. In practice, Tegra 3 GPU clocks range from 333MHz on the low end for smartphones and reach as high as 500MHz on the high end for tablets. If we assume a 333MHz GPU clock in Tegra 3, that puts NVIDIA at roughly 8 GFLOPS, which rationalizes the 2x advantage Apple claims in the chart above. The real world performance gap isn't anywhere near that large of course - particularly if you run on a device with a ~500MHz GPU clock (12 GFLOPS):

GLBenchmark 2.1.1's Egypt offscreen test pegs the PowerVR SGX 543MP2 advantage at just over 30%, at least at 1280 x 720. Based on the raw FP numbers for a 500MHz Tegra 3 GPU vs. a 250MHz PowerVR SGX 543MP2, around a 30% performance advantage is what you'd expect from a mostly compute limited workload. It's possible that the gap could grow at higher resolutions or with a different workload. For example, look at the older GLBenchmark PRO results and you will see a 2x gap in graphics performance:

For most real world gaming workloads I do believe that the A5 is faster than Tegra 3, but the advantage is unlikely to be 2x at non-Retina Display resolutions. The same applies to the A5X vs. Tegra 3 comparison. I fully expect there to be a significant performance gap at the same resolution, but I doubt it is 4x in a game.

Mobile SoC GPU Comparison

| | Apple A4 | Apple A5 | Apple A5X | Tegra 3 (max) | Tegra 3 (min) | Intel Z2580 |
| --- | --- | --- | --- | --- | --- | --- |
| GPU | PowerVR SGX 535 | PowerVR SGX 543MP2 | PowerVR SGX 543MP4 | GeForce | GeForce | PowerVR SGX 544MP2 |
| MADs per Clock | 4 | 32 | 64 | 12 | 12 | 32 |
| Clock Speed | 250MHz | 250MHz | 250MHz | 500MHz | 333MHz | 533MHz |
| Peak Compute | 2.0 GFLOPS | 16.0 GFLOPS | 32.0 GFLOPS | 12.0 GFLOPS | 8.0 GFLOPS | 34.1 GFLOPS |
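The Peak Compute row can be reproduced from the two rows above it if each multiply-add (MAD) is counted as two floating-point operations per clock. The sketch below is only a sanity check on that arithmetic: the 2-FLOPs-per-MAD convention is an assumption (the article does not spell it out, though it matches the table), and the `GPUS` dictionary and `peak_gflops` helper are illustrative names, not part of any benchmark or vendor tool.

```python
# MADs per clock and GPU clock (MHz), copied from the comparison table.
GPUS = {
    "Apple A4": (4, 250),
    "Apple A5": (32, 250),
    "Apple A5X": (64, 250),
    "Tegra 3 (max)": (12, 500),
    "Tegra 3 (min)": (12, 333),
    "Intel Z2580": (32, 533),
}

FLOPS_PER_MAD = 2  # assumption: one multiply-add counts as two FLOPs


def peak_gflops(mads_per_clock: int, clock_mhz: float) -> float:
    """Peak theoretical compute in GFLOPS for a given MAD rate and clock."""
    return mads_per_clock * FLOPS_PER_MAD * clock_mhz / 1000.0


def ratio(gpu_a: str, gpu_b: str) -> float:
    """Theoretical compute advantage of gpu_a over gpu_b."""
    return peak_gflops(*GPUS[gpu_a]) / peak_gflops(*GPUS[gpu_b])


if __name__ == "__main__":
    for name, (mads, mhz) in GPUS.items():
        print(f"{name:14s} {peak_gflops(mads, mhz):5.1f} GFLOPS")

    # Theoretical ratios behind the 2x / 4x claims and the ~30% measured gap.
    for a, b in [
        ("Apple A5", "Tegra 3 (min)"),
        ("Apple A5", "Tegra 3 (max)"),
        ("Apple A5X", "Tegra 3 (min)"),
        ("Apple A5X", "Tegra 3 (max)"),
    ]:
        print(f"{a} vs {b}: {ratio(a, b):.2f}x")
```

Under these assumptions the A5 comes out at roughly 2x a 333MHz Tegra 3 and about 1.33x a 500MHz part, while the A5X lands at roughly 4x and 2.67x respectively. These are paper numbers only and say nothing about bandwidth or driver limits.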

The A5X doubles GPU execution resources compared to the A5. Imagination Technologies' PowerVR SGX 543 is modular - you can expand by simply increasing "core" count. Apple tells us all we need to know about clock speed in the chart above: with 2x the execution resources and 2x the performance of the A5, Apple hasn't changed the GPU clock of the A5X.

Assuming perfect scaling, I'd expect around a 2x performance gain over Tegra 3 in GLBenchmark (Egypt) at 720p. Again, not 4x but at the same time, hardly insignificant. It can take multiple generations of GPUs to deliver that sort of a performance advantage at a similar price point. Granted Apple has no problems eating the cost of a larger, more expensive die, but that doesn't change the fact that the GPU advantage Apple will hold thanks to the A5X is generational.

I'd also point out that the theoretical GPU performance of the A5X is nearly identical to what Intel is promising with its Atom Z2580 SoC (32.0 vs. 34.1 GFLOPS). Apple arrives there with four SGX 543 cores, while Intel gets there with two SGX 544 cores running at ~2x the frequency (533MHz vs. 250MHz).

With the new iPad's Retina Display delivering 4x the pixels of the iPad 2, a 2x increase in GPU horsepower isn't enough to maintain performance. If you remember back to our iPad 2 review however, the PowerVR SGX 543MP2 used in it was largely overkill for the 1024 x 768 display. It's likely that a 4x increase in GPU horsepower wasn't necessary to deliver a similar experience on games. Also keep in mind that memory bandwidth limitations will keep many titles from running at the new iPad's native resolution. Remember that we need huge GPUs with 100s of GB/s of memory bandwidth to deliver a high frame rate on 3 - 4MP PC displays. I'd expect many games to render at lower resolutions and possibly scale up to fit the panel.
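The pixel-count argument above is easy to verify with a few lines of arithmetic. The sketch below uses the resolutions from the spec table and the peak compute figures from the GPU table; "GFLOPS per megapixel" is just an illustrative way to express compute per pixel and is not a metric Apple or GLBenchmark reports.

```python
# Panel resolutions from the spec table (width, height).
IPAD_2 = (1024, 768)
NEW_IPAD = (2048, 1536)

# Peak theoretical compute from the GPU comparison table.
A5_GFLOPS = 16.0
A5X_GFLOPS = 32.0


def pixels(width: int, height: int) -> int:
    """Total pixel count of a panel."""
    return width * height


def gflops_per_megapixel(gflops: float, resolution: tuple) -> float:
    """Illustrative metric: peak compute available per million pixels."""
    return gflops / (pixels(*resolution) / 1e6)


if __name__ == "__main__":
    pixel_ratio = pixels(*NEW_IPAD) / pixels(*IPAD_2)
    old = gflops_per_megapixel(A5_GFLOPS, IPAD_2)
    new = gflops_per_megapixel(A5X_GFLOPS, NEW_IPAD)

    print(f"Pixel increase: {pixel_ratio:.1f}x")
    print(f"iPad 2:   {old:.1f} GFLOPS per megapixel")
    print(f"new iPad: {new:.1f} GFLOPS per megapixel")
    print(f"Compute per pixel vs. iPad 2: {new / old:.2f}x")
```

With 4x the pixels and 2x the theoretical compute, the new iPad has about half the peak GPU throughput per pixel at native resolution, which is why rendering below native resolution and scaling up is a plausible choice for many titles.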

What About the Display?

Performance specs aside, the iPad's Retina Display does look amazing. The 1024 x 768 panel in the older models was simply getting long in the tooth and the Retina Display ensures Apple won't need to increase screen resolution for a very long time. Apple also increased color gamut by 44% with the panel, but the increase in resolution alone is worth the upgrade for anyone who spends a lot of time reading on their iPad. The photos below give you an idea of just how sharp text and graphics are on the new display compared to its predecessor (iPad 2, left vs. new iPad, right):

The improvement is dramatic in these macro shots but I do believe that it's just as significant in normal use.

Apple continues to invest heavily in the aspects of its devices that users interact with the most frequently. Spending a significant amount of money on the display makes a lot of sense. Kudos to Apple for pushing the industry forward here. The only downside is that supply of these greater-than-HD panels is apparently very limited as a result of Apple buying up most of the production from as many as three different panel vendors. It will be a while before we see Android tablets with comparable resolutions, although we will see 1920 x 1200 Android tablets shipping in this half of the year.

161 Comments

c4v3man - Friday, March 9, 2012 - link

Why not spend another $5-10 on components and make a $600 32GB transformer the base model? That way you still maintain most of the profit margin you want to have, while also being competitive cost-wise. I can appreciate that you are using some components that may be considered better than the new iPad, but you are also using some that can be considered worse. Past experience shows that tablets priced higher than Apple fail in the marketplace since people can't accept a reality where Apple isn't the "premium offering".

Lucian Armasu - Friday, March 9, 2012 - link

By the way Anand. Is there any way to test the graphics performance on their native resolutions anymore? I think you should bring that back and show the fixed resolution vs native resolution tests side by side. Because I actually think the iPad 3 suffered a very significant performance drop due to the new resolution, just like the iPhone 4 was always the device at the bottom of the graphics test because of its retina display.

So I'm aware that the chip itself should be faster when comparing everything at the same 720p resolution. But that doesn't really mean much for the regular user does it? What matters is real world performance, and that means it matters how fast the iPad is at its *own* resolution, not a theoretical lower resolution that has nothing to do with it.

WaltFrench - Friday, March 9, 2012 - link

Let's think this through a bit. Users don't run GLBench or that sort of stuff; they run games that a developer has tweaked for a platform. Subject to budget — I haven't had the pleasure myself, but hear tell that it requires good, solid engineering and lots of it — the developer puts out the best mix of resolution & speed that will please the customers the most. (Who would do otherwise?)

Obviously, if you don't have the resolution, you go for fps. So it's conceivable that a 720p device could show better speed. But that'd only be true if the dev was pushing so hard on the iPad's 4X of pixels that he sacrificed play speed. If it came to that, he'd pull back on AA or other detail/texture quality efforts. Right? Wouldn't you?

So what I think it comes to is how hard a given game dev will work on a particular platform's capabilities. Here, fragmentation and total sales come to play, big time. Anand might be able to give you a theoretical tradeoff that a dev faces, but it might be quite the challenge to translate that into how well gamers would like a given device for stuff they can actually play.

medi01 - Saturday, March 10, 2012 - link

In other words, did Apple's marketing department forbid you doing native resolution benchmarks?

doobydoo - Monday, March 12, 2012 - link

Native resolution test is a flawed test.

As I've explained to your other comments, performance has to take into account the resolution. IE, 100 fps at 10 x 10 is clearly worse than 60 fps at 2000 x 1000.

It's very telling that you make this suggestion now Apple has come out with the highest resolution device. Not something you requested previously when Android tablets had higher resolution.

The iPhone 4 was never bottom of any sensible benchmarks because of its retina display. The tests, as always, were done at the same resolution as they always should be. The iPhone 4 was low down in the benchmarks because it had a slow GPU.

rashomon_too - Friday, March 9, 2012 - link

If displaysearchblog.com is correct (http://www.displaysearchblog.com/2012/03/ipad-3-cl...), most of the extra power consumption is for the display. Because of the lower aperture ratio at the higher pixel density, more backlighting is needed, requiring perhaps twice as many LEDs.

jacobdrj - Friday, March 9, 2012 - link

I am no coder, but even with my gaming rig, I have a 27" 1900x1200 display (that I admittedly paid too much for), but I flanked it with 2 inexpensive 1080p displays, rotated vertically in portrait mode for eyefinity and web browsing.

IHateMyJob2004 - Friday, March 9, 2012 - link

Save your money. Buy a Playbook

tipoo - Friday, March 9, 2012 - link

Save your money. Buy a toaster.

Wait no, I forgot the part where they do different things :P

KoolAidMan1 - Monday, March 12, 2012 - link

Not to mention that toasters are actually useful