Westmere-EX: Intel Improves their Xeon Flagship

by Johan De Gelas on April 6, 2011 2:39 PM EST- Posted in

- IT Computing

- Intel

- Nehalem EX

- Xeon

- Cloud Computing

- Westmere-EX

Comparing Westmere-EX and Nehalem-EX

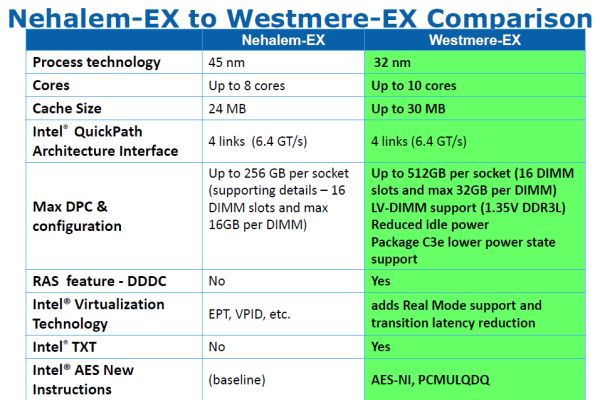

Yesterday, Intel announced that their flagship server processor, the Xeon Nehalem-EX, is being succeeded by the Xeon Westmere-EX, a process-shrinking "tick" in Intel's terminology. By shrinking Intel's largest Xeon to 32nm, the best Westmere-EX Xeon is now clocked 6% higher (2.4GHz versus 2.26GHz), gets two extra cores (10 versus 8) and has a 30MB L3 (instead of 24MB).
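To put those figures side by side, here is a minimal Python sketch that computes the relative gains from the numbers quoted above. The dictionary keys and the `percent_gain` helper are illustrative names, and the final "naive throughput" figure assumes perfect scaling with cores and clock speed, which real workloads rarely achieve.

```python
# Top-bin figures as quoted in the article text.
nehalem_ex = {"clock_ghz": 2.26, "cores": 8, "l3_mb": 24}
westmere_ex = {"clock_ghz": 2.4, "cores": 10, "l3_mb": 30}


def percent_gain(old, new):
    """Relative increase from old to new, in percent."""
    return (new - old) / old * 100.0


# Per-spec improvement of Westmere-EX over Nehalem-EX.
gains = {key: percent_gain(nehalem_ex[key], westmere_ex[key]) for key in nehalem_ex}

# Assumption (not a benchmark): throughput scales perfectly with cores x clock.
naive_throughput_gain = percent_gain(
    nehalem_ex["cores"] * nehalem_ex["clock_ghz"],
    westmere_ex["cores"] * westmere_ex["clock_ghz"],
)

for key, value in gains.items():
    print(f"{key}: +{value:.1f}%")
print(f"naive cores x clock upper bound: +{naive_throughput_gain:.1f}%")
```

The clock gain works out to roughly 6%, matching the article, while cores and L3 both grow by 25%. The combined figure is only an upper bound under the stated assumption.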

As is typical for a tick, the core improvements are rather subtle. The only tangible improvement should be the improved memory controller, which is capable of extracting up to 20% more bandwidth out of the same DIMMs. The Nehalem-EX was the first quad-socket Xeon that was not starved for memory bandwidth, and we expect that the Westmere-EX will perform very well in bandwidth-limited HPC applications.

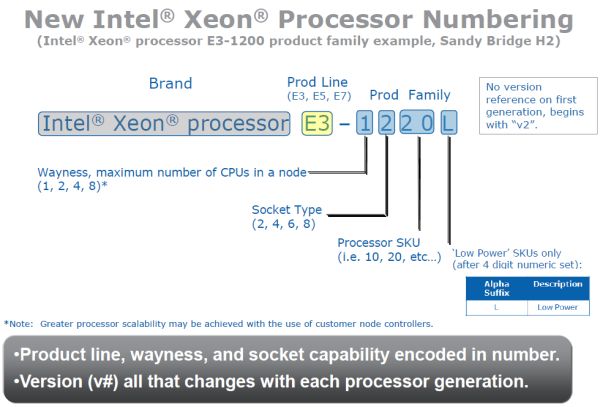

With the launch of Westmere-EX (and Sandy Bridge on the consumer side before it), it appears Intel is finally ready to admit that their BMW-inspired naming system doesn't make any sense at all. They've promised a new, "more logical" system that will be used for the coming years. The details of the new Xeon naming system are presented in the image below.

There is some BMW-ness left (e.g. the product line 3-5-7), but the numbers make more sense now. You can directly derive from the model number the maximum number of sockets, the type of the socket, and whether the CPU is low-end, midrange, or high-end. Intel also has the "L" suffix present for low power models.
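As a rough illustration of how such a model number could be decoded, here is a hedged Python sketch. The field layout is an assumption, since the exact scheme is only given in the image: it takes the first digit after the dash as the maximum socket count, the second as the socket type, and the last two as the SKU, with an optional "L" suffix. `XeonModel` and `parse_xeon_model` are hypothetical names, not anything Intel provides.

```python
import re
from typing import NamedTuple


class XeonModel(NamedTuple):
    product_line: str   # "E3", "E5" or "E7" (low-end, midrange, high-end)
    max_sockets: int    # assumed: first digit after the dash
    socket_type: int    # assumed: second digit after the dash
    sku: int            # assumed: last two digits
    low_power: bool     # True when the "L" suffix is present


# Assumed layout: E<line>-<sockets><socket type><2-digit SKU>[L]
_PATTERN = re.compile(r"^E([357])-(\d)(\d)(\d{2})(L?)$")


def parse_xeon_model(name: str) -> XeonModel:
    """Split a model string such as 'E7-4870' into its assumed fields."""
    match = _PATTERN.match(name.strip().upper())
    if match is None:
        raise ValueError(f"unrecognized model number: {name!r}")
    line, sockets, socket_type, sku, suffix = match.groups()
    return XeonModel("E" + line, int(sockets), int(socket_type), int(sku), suffix == "L")


print(parse_xeon_model("E7-4870"))
print(parse_xeon_model("E7-8867L"))
```

Under these assumptions, an E7-4870 would read as a high-end part for up to four sockets, and the trailing "L" on an E7-8867L would mark a low power model.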

32 Comments

JohanAnandtech - Thursday, April 7, 2011 - link

Correct. The octal CPU market is shrinking fast now that we have 10 and 12 core machines. VMware only recently updated vSphere to be able to work with 160 logical CPUs. Linux is able to go up to 255 logical CPUs I believe (x86-64).

jihadjoe - Wednesday, April 6, 2011 - link

Rack space can get expensive pretty quickly. When you're renting out space per-rack at a colocation facility, you want to consolidate as much as possible.

Power efficiency is another. More CPUs per server means less supporting hardware. Imagine virtualizing 200 or so Atoms with a single 4x 10-core Xeon setup. Atom may well be efficient, but getting rid of 200 chipsets, LAN adapters and GPUs will save quite a bit of energy.

mozartrules - Wednesday, April 6, 2011 - link

Big companies try to run virtualized environments (think VMware) where many applications run on the same server. Different applications tend to use CPU at different times, so you end up with much higher average usage of your hardware. My employer (a huge bank) often expects a 4-10 times benefit from running on servers that are bigger than this. It also simplifies administration.

The purchase cost of these servers is often a relatively small part of the actual cost. Rack space, power, heat, administration and network connectivity are important considerations. And the RAM cost will often exceed the CPU cost.

I happen to work on an application that needs all data in memory (huge Monte-Carlo model); even a microsecond of latency for accessing data would kill performance. This means that I run on a 32-core Nehalem EX with 512GB RAM and I use all of it. The 512GB of RAM is about 2/3rds of the cost of the whole server, partly because it is a lot of RAM and partly because 16GB DIMMs are a lot more expensive per GB than desktop memory.

Calin - Thursday, April 7, 2011 - link

There are two reasons:

1. Workloads that scale poorly on more systems. If your workload scales perfectly, you can get faster results cheaper by going with more and somewhat slower processors.

2. When your workload will work on a (let's say) 4-processor system with the faster processors, but only on an 8-processor system using the slow processors. In this case, a base 4-processor system with expensive processors might be cheaper than an 8-processor system with cheap processors and offer similar or better performance.

That is, some workloads scale perfectly well over more servers, others scale perfectly well over more processors, and others don't scale well enough over more servers/processors. If your workload is the latter, you want the fastest processors around.

And by the way, some things are really, really influenced by performance (see stock market manipulation games, where being half a millisecond faster than the competition means you can earn more money). In the grand scheme of things, the hardware price might be a small consideration (or might be the driving factor). If you absolutely need that performance (and can gain financial use from it), those processors are cheap at whatever price.

alent1234 - Thursday, April 7, 2011 - link

virtualization and huge databases

for VM this monster will replace a lot of servers, probably a hundred or more, depending on your version of vmware. i think the later versions will let you oversubscribe a host

if you have a database server that hosts tens of thousands of users at a time then going from 2 6 core CPU's to 4 10 core CPU's means 24 threads vs 80 threads at a time can be processed.

hellcats - Thursday, April 7, 2011 - link

Can I have that bad boy when you're done testing?

MrBuffet - Thursday, April 7, 2011 - link

Especially if you are counting on getting benchmarks that include Windows 2008 R2 -- as you can see here http://support.microsoft.com/kb/2529956/us-en -- Intel and Microsoft apparently forgot to cross-test their products.

While both are clearly to blame here, this is a MAJOR black eye for the Intel validation department. I mean, how can you release a new processor that does not work with the most sold OS in the world... embarrassing to say the least, and it makes you wonder what else they missed.

alent1234 - Thursday, April 7, 2011 - link

that's assuming you use Windows 2008 R2 as your hypervisor. most people use vmware.

JohanAnandtech - Thursday, April 7, 2011 - link

Thx! Excellent feedback, will save us quite a bit of time and searching. We will talk this through with both MS and Intel.

koinkoin - Thursday, April 7, 2011 - link

Well, some of the customers I work with have some very specific workloads. They do research and development, and for them it is worth having the best performance when they buy the system…

About price, yes and no, as with 64 memory slots you can get a good price per gigabyte compared to a system with only 18 slots or so if you need around 256GB or more memory. So there it can be good, but again specific.

But you are right, for most virtualization systems I would rather go with a 2U system like the Dell R710, which strikes a good balance of CPU power and memory options at a better price. You can have them redundant so you can lose one or more and still distribute your loads.