The Ampere Altra Max Review: Pushing it to 128 Cores per Socket

by Andrei Frumusanu on October 7, 2021 8:00 AM EST - Posted in Servers, Arm, Neoverse N1, Ampere, Altra Max

Test Bed and Setup - Compiler Options

For the rest of our performance testing, we’re disclosing the details of the various test setups:

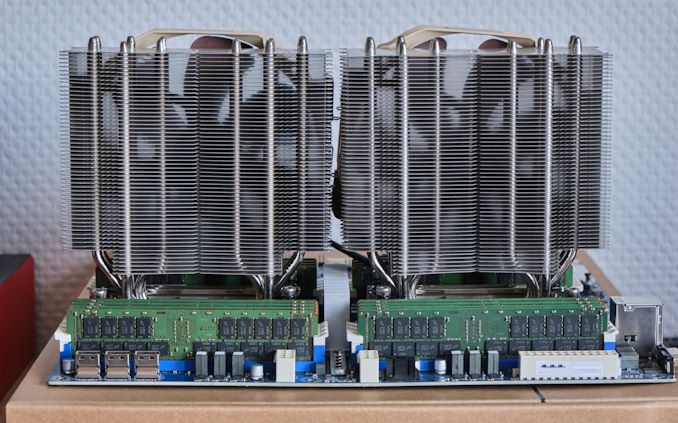

Ampere "Mount Jade" - Dual Altra Max M128-30 / Altra Q80-33

For the Ampere system, we’re still using our Mount Jade server, manufactured by Wiwynn, featuring Ampere’s Mount Jade DVT reference motherboard, testing both the Q80-33 and the new M128-30.

In terms of memory, we’re using the bundled 16 DIMMs of 32GB of Samsung DDR4-3200 for a total of 512GB, 256GB per socket.

| CPU | 2x Ampere Altra Max M128-30 (3.0 GHz, 128c, 16 MB L3, 250W) / 2x Ampere Altra Q80-33 (3.3 GHz, 80c, 32 MB L3, 250W) |

| RAM | 512 GB (16x32 GB) Samsung DDR4-3200 |

| Internal Disks | Samsung MZ-QLB960NE 960GB / Samsung MZ-1LB960NE 960GB |

| Motherboard | Mount Jade DVT Reference Motherboard |

| PSU | 2000W (94%) |

We’re running an Ubuntu 21.04 server image. Because the system is Arm SBSA compatible, it can naturally boot any compatible generic Linux distribution.

The system has all relevant security mitigations activated against the various vulnerabilities, including SSBS (Speculative Store Bypass Safe) against Spectre variants.
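For readers who want to check mitigation status on their own Linux machine, the kernel reports it per vulnerability under sysfs. The following is a minimal sketch and not the procedure used for this review; the helper names `read_mitigations` and `unmitigated` are hypothetical, and it assumes the standard `/sys/devices/system/cpu/vulnerabilities` directory is present.

```python
from pathlib import Path


def read_mitigations(vuln_dir="/sys/devices/system/cpu/vulnerabilities"):
    """Return {vulnerability_name: kernel_status_string}.

    Returns an empty dict when the directory does not exist
    (for example on non-Linux systems or very old kernels).
    """
    root = Path(vuln_dir)
    if not root.is_dir():
        return {}
    return {
        entry.name: entry.read_text().strip()
        for entry in sorted(root.iterdir())
        if entry.is_file()
    }


def unmitigated(status):
    """List entries whose status string starts with 'Vulnerable'."""
    return [name for name, state in status.items() if state.startswith("Vulnerable")]


if __name__ == "__main__":
    status = read_mitigations()
    for name, state in status.items():
        print(f"{name:28s} {state}")
    print("Unmitigated:", unmitigated(status) or "none reported")
```

The kernel's status strings usually begin with `Not affected`, `Vulnerable`, or `Mitigation:`, so a prefix check is enough for a quick overview.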

AMD - Dual EPYC 7763 / 75F3 / 7443 / 7343 / 72F3

Our AMD platform for the Milan generation is GIGABYTE’s MZ72-HB0 (rev. 3.0) board, which serves as the primary test platform for the EPYC 7763, 75F3, 7443, 7343 and 72F3. The system is running under full default settings, meaning performance or power determinism as configured by AMD in the default SKU fuse settings.

| CPU | 2x AMD EPYC 7763 (2.45-3.500 GHz, 64c, 256 MB L3, 280W) / 2x AMD EPYC 75F3 (3.20-4.000 GHz, 32c, 256 MB L3, 280W) / 2x AMD EPYC 7443 (2.85-4.000 GHz, 24c, 128 MB L3, 200W) / 2x AMD EPYC 7343 (3.20-3.900 GHz, 16c, 128 MB L3, 190W) / 2x AMD EPYC 72F3 (3.70-4.100 GHz, 8c, 256MB L3, 180W) |

| RAM | 512 GB (16x32 GB) Micron DDR4-3200 |

| Internal Disks | Crucial MX300 1TB |

| Motherboard | GIGABYTE MZ72-HB0 (rev. 3.0) |

| PSU | EVGA 1600 T2 (1600W) |

Software-wise, we ran Ubuntu 20.10 images with the latest release 5.11 Linux kernel. Performance settings in both the OS and the BIOS were left at their defaults, including the regular schedutil-based frequency governor, with the CPUs running in performance determinism mode at their respective default TDPs unless otherwise indicated.
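To confirm which frequency governor a Linux system is actually using, the cpufreq sysfs entries can be read directly. This is a rough sketch under the assumption that the kernel exposes `cpuN/cpufreq/scaling_governor`; the function name `read_governors` is hypothetical and this is not part of the review's own tooling.

```python
from collections import Counter
from pathlib import Path


def read_governors(cpu_root="/sys/devices/system/cpu"):
    """Return {cpu_name: governor} for every CPU that exposes cpufreq.

    CPUs without a cpufreq directory are simply skipped, so the
    result can be empty (e.g. inside some virtual machines).
    """
    governors = {}
    pattern = "cpu[0-9]*/cpufreq/scaling_governor"
    for gov_file in sorted(Path(cpu_root).glob(pattern)):
        cpu_name = gov_file.parent.parent.name  # e.g. "cpu0"
        governors[cpu_name] = gov_file.read_text().strip()
    return governors


if __name__ == "__main__":
    # Summarise how many CPUs use each governor (e.g. schedutil).
    counts = Counter(read_governors().values())
    if not counts:
        print("No cpufreq information exposed on this system")
    for governor, n in counts.items():
        print(f"{governor}: {n} CPUs")
```

On a system left at the defaults described above, one would expect every CPU to report `schedutil`, although distributions and kernel builds can differ.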

AMD - Dual EPYC 7713 / 7662

A few performance figures for the EPYC Milan 7713 and Rome 7662 parts were collected on AMD’s Daytona system. Unfortunately we no longer have access to these SKUs, which is why the platform differs.

| CPU | 2x AMD EPYC 7713 (2.00-3.365 GHz, 64c, 256 MB L3, 225W) / 2x AMD EPYC 7662 (2.00-3.300 GHz, 64c, 256 MB L3, 225W) |

| RAM | 512 GB (16x32 GB) Micron DDR4-3200 |

| Internal Disks | Varying |

| Motherboard | Daytona reference board: S5BQ |

| PSU | PWS-1200 |

AMD - Dual EPYC 7742

Our local AMD EPYC 7742 system is running on a SuperMicro H11DSI Rev 2.0.

| CPU | 2x AMD EPYC 7742 (2.25-3.4 GHz, 64c, 256 MB L3, 225W) |

| RAM | 512 GB (16x32 GB) Micron DDR4-3200 |

| Internal Disks | Crucial MX300 1TB |

| Motherboard | SuperMicro H11DSI (rev. 2.0) |

| PSU | EVGA 1600 T2 (1600W) |

As an operating system we’re using Ubuntu 20.10 with no further optimisations. In terms of BIOS settings we’re using complete defaults, including retaining the default 225W TDP of the EPYC 7742s, as well as leaving further CPU configurables on auto, except for the NPS settings, where we explicitly state the configuration in the results.

The system has all relevant security mitigations activated against speculative store bypass and Spectre variants.
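The NPS (NUMA nodes per socket) setting mentioned above changes how many NUMA nodes the operating system sees, so it can be verified from Linux after a BIOS change. The sketch below is only illustrative: `count_numa_nodes` and `nodes_per_socket` are hypothetical helpers, the socket count of 2 comes from the dual-socket setups described here, and the division assumes the nodes are split evenly across sockets.

```python
import re
from pathlib import Path


def count_numa_nodes(node_root="/sys/devices/system/node"):
    """Count nodeN directories exposed by the kernel (0 if unavailable)."""
    root = Path(node_root)
    if not root.is_dir():
        return 0
    return sum(
        1
        for entry in root.iterdir()
        if entry.is_dir() and re.fullmatch(r"node\d+", entry.name)
    )


def nodes_per_socket(numa_nodes, sockets):
    """Derive the NPS value, assuming nodes divide evenly across sockets."""
    if sockets <= 0 or numa_nodes % sockets != 0:
        raise ValueError("NUMA nodes do not divide evenly across sockets")
    return numa_nodes // sockets


if __name__ == "__main__":
    nodes = count_numa_nodes()
    print(f"NUMA nodes visible to the OS: {nodes}")
    if nodes and nodes % 2 == 0:
        # Assumption: a dual-socket system, as in the test beds above.
        print(f"Implied nodes per socket (2S): {nodes_per_socket(nodes, 2)}")
```

For example, a dual-socket system configured for four nodes per socket would be expected to expose eight NUMA nodes in total.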

Intel - Dual Xeon Platinum 8380

For our new Ice Lake test system based on the Whitley platform, we’re using Intel’s SDP (Software Development Platform) S2W3SIL4Q, featuring a 2-socket Intel server board (Coyote Pass).

The system is an airflow optimised 2U rack unit with otherwise little fanfare.

Our review setup solely includes the new Intel Xeon 8380 with 40 cores, a 2.3GHz base clock, 3.0GHz all-core boost, and 3.4GHz peak single-core boost. What’s unusual about this part, as noted in the intro, is that it’s running at a default 270W TDP, which is above what we’ve seen from previous-generation non-specialised Intel SKUs.

| CPU | 2x Intel Xeon Platinum 8380 (2.3-3.4 GHz, 40c, 60MB L3, 270W) |

| RAM | 512 GB (16x32 GB) SK Hynix DDR4-3200 |

| Internal Disks | Intel SSD P5510 7.68TB |

| Motherboard | Intel Coyote Pass (Server System S2W3SIL4Q) |

| PSU | 2x Platinum 2100W |

The system came with several SSDs, including Optane SSD P5800Xs; however, we ran our test suite on the P5510, not that we’re I/O affected in our current benchmarks anyhow.

As per Intel guidance, we’re using the latest BIOS available with the 270 release microcode update.

Intel - Dual Xeon Platinum 8280

For the older Cascade Lake Intel system we’re also using a test-bench setup with the same SSD and OS image as on the EPYC 7742 system.

Because the Xeons only have 6-channel memory, their maximum capacity is limited to 384GB of the same Micron memory, running at a default 2933MHz to remain in-spec with the processor’s capabilities.

| CPU | 2x Intel Xeon Platinum 8280 (2.7-4.0 GHz, 28c, 38.5MB L3, 205W) |

| RAM | 384 GB (12x32 GB) Micron DDR4-3200 (Running at 2933MHz) |

| Internal Disks | Crucial MX300 1TB |

| Motherboard | ASRock EP2C621D12 WS |

| PSU | EVGA 1600 T2 (1600W) |

The Xeon system was similarly run on BIOS defaults on an ASRock EP2C621D12 WS with the latest firmware available.
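The memory figures quoted for the test systems can be sanity-checked with some simple arithmetic. The sketch below recomputes the installed capacities and adds a theoretical peak bandwidth per socket. The function names are hypothetical, the 8-byte (64-bit) channel width is the standard DDR4 assumption, and the 8-channel count used for the DDR4-3200 case is an assumption not stated in the text above (only the Xeon 8280's 6 channels are).

```python
def total_capacity_gb(dimms, dimm_size_gb):
    """Installed capacity in GB for a number of identical DIMMs."""
    return dimms * dimm_size_gb


def peak_bandwidth_gbs(channels, mega_transfers, bytes_per_transfer=8):
    """Theoretical peak per-socket bandwidth in GB/s.

    channels * MT/s * bytes per transfer, converted from MB/s to GB/s.
    Real-world sustained bandwidth is lower than this upper bound.
    """
    return channels * mega_transfers * bytes_per_transfer / 1000


if __name__ == "__main__":
    # 16 x 32 GB across two sockets, as on the Altra, EPYC and 8380 systems.
    full = total_capacity_gb(16, 32)
    print(f"16 x 32 GB = {full} GB total, {full // 2} GB per socket")  # 512 / 256

    # 12 x 32 GB on the 6-channel Xeon 8280 system.
    print(f"12 x 32 GB = {total_capacity_gb(12, 32)} GB total")  # 384

    # 6 channels at 2933 MT/s (from the text) vs. an assumed 8 at 3200 MT/s.
    print(f"6ch DDR4-2933: {peak_bandwidth_gbs(6, 2933):.1f} GB/s per socket")
    print(f"8ch DDR4-3200: {peak_bandwidth_gbs(8, 3200):.1f} GB/s per socket")
```

The capacity results match the 512GB and 384GB figures in the tables; the bandwidth numbers are upper bounds only and say nothing about measured throughput.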

Compiler Setup

For compiled tests, we’re using the release version of GCC 10.2. The toolchain was compiled from scratch on both the x86 systems as well as the Altra system. We’re using shared binaries with the system’s libc libraries.
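When reproducing compiled benchmarks, it helps to record which compiler and libc a machine actually picks up. The following is a small sketch for doing so, not the review's own harness: `parse_gcc_version` and `toolchain_summary` are hypothetical helpers, the compiler is assumed to be reachable as `gcc` on the `PATH`, and the sample version string in the comment is illustrative.

```python
import platform
import re
import shutil
import subprocess


def parse_gcc_version(version_output):
    """Extract (major, minor, patch) from the first line of `gcc --version`.

    Example input line (illustrative): "gcc (GCC) 10.2.0".
    Returns None when no x.y.z version can be found.
    """
    first_line = version_output.splitlines()[0] if version_output else ""
    match = re.search(r"(\d+)\.(\d+)\.(\d+)", first_line)
    return tuple(int(part) for part in match.groups()) if match else None


def toolchain_summary(compiler="gcc"):
    """Collect compiler path/version and the libc Python was linked against."""
    path = shutil.which(compiler)
    version = None
    if path:
        result = subprocess.run(
            [path, "--version"], capture_output=True, text=True, check=False
        )
        version = parse_gcc_version(result.stdout)
    return {
        "compiler_path": path,
        "compiler_version": version,
        # platform.libc_ver() returns ('', '') when it cannot tell.
        "libc": platform.libc_ver(),
    }


if __name__ == "__main__":
    for key, value in toolchain_summary().items():
        print(f"{key}: {value}")
```

Note that `platform.libc_ver()` reports the libc of the Python interpreter itself, which on a typical system is the same system libc that dynamically linked benchmark binaries would use.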

60 Comments


Wilco1 - Sunday, October 10, 2021

> You need only note the cases where Max significantly underperforms, relative to its 80-core sibling, to see where the cache reduction is likely an issue.

There are regressions in 4 of the 10 integer benchmarks - only mcf is significant. However if you look closely, Altra Max still beats/equals the 8380 in 3 out of those 4. Clearly a 40MB L3 is not large enough for these benchmarks, so would you also call that a "major liability"? EPYC beats all by a huge margin in these 4, so clearly 256MB L3 works well, but it's also way too expensive for a monolithic die.

> The reason why there are so many different benchmarks is that you can't just seize on the aggregate numbers to tell the whole story.

No. The aggregate result averages out the extremes and is a better prediction for average performance. For example Altra Max is slower than Altra on gcc_r and far behind EPYC. However in LLVM compilation Altra Max beats Altra by ~20% and is pretty much equal to the 7763. So in real world tests EPYC's huge caches don't help nearly as much as the gcc_r subtest suggests.

mode_13h - Sunday, October 10, 2021

> There are regressions in 4 of the 10 integer benchmarks - only mcf is significant.

When you have 45% more core x GHz, *any* regression is significant! By that token, we should also be marking the xz test as underperforming, since it's only a ~20% improvement.

It's also convenient to seize on specint, when it suffers regressions on 7 of the 12 specfp tests.

> Altra Max still beats/equals the 8380 in 3 out of those 4.

> Clearly a 40MB L3 is not large enough for these benchmarks,

> so would you also call that a "major liability"?

This seems like a rather disingenuous point. To say anything about the 8380's cache, we'd need to see a comparison against other Ice Lake CPUs with a different core-to-cache ratio.

> No. The aggregate result averages out the extremes and is a better

> prediction for average performance.

It's a flawed inference to conclude that "the average workload" will match an unweighted average of a set of intentionally disparate workloads.

Furthermore, people less & less buy hardware for "the average workload".

> in LLVM compilation Altra Max beats Altra by ~20% and is pretty much equal to the 7763.

You can't just cherry-pick the best results of each memory configuration. If you're going to deal in aggregates, then you need to aggregate the results per-configuration.

> So in real world tests EPYC's huge caches don't help nearly as much as the gcc_r subtest suggests.

As a matter of fact, the monolithic vs. quadrant results would argue the opposite, in your chosen example of LLVM. Furthermore, what qualifies LLVM compilation as more "real world" than the gcc test?

schujj07 - Monday, October 11, 2021

"The Altra Max wins the more useful critical-jOPS benchmark by over 30%"

What are you talking about? In both critical-jOPS & max-jOPS the 2s 7763 is on the top of the chart. We cannot try to extrapolate possible performance on the Altra Max due to "Unfortunately, trading in one issue with another, we ran into other issues on the 2-socket test scenario where the test ran into issues at large thread counts. The 2S Q80-33 figures here only stresses 130 cores, while I wasn’t able at all to get 2S M128-30 figures at reasonable core counts, so I completely omitted results here."

Per-core performance matters a lot. There are A LOT of programs, especially databases, that are licensed on a per core metric. This means I need 8 cores of Altra Max to equal the performance I get from an Epyc 4c that will kill my licensing cost. Those added cores could easily double the license cost and those license are often times MUCH more expensive than the server itself. It is obvious you don't work in industry as this is common knowledge.

Overall the Altra Max is interesting but nothing more than that. It won't be a player in industry until the per core performance is at least double what it currently is and there is enterprise software able to take advantage of it. Basically Altra Max is like IBM Power and that is niche at best.

Wilco1 - Tuesday, October 12, 2021

Altra Max is still at the top for 1S critical-jOPS - that's not invalidated by missing 2S results.

If you worked in the industry, you would know that per-core licenses have a multiplier based on CPU type to level out performance differences. In cases where per-core performance really matters and you completely disable SMT (for example for high-frequency trading), you would not consider these many-core servers at all but get 8 or 16 core CPUs with significantly higher bandwidth, cache and power per core.

It seems you misunderstand the target market completely. You probably also call Graviton 2 a niche even though it is already a significant percentage of AWS and growing fast. And that with just 64 cores and far lower per-core performance than Altra...

schujj07 - Tuesday, October 12, 2021

How about we do some math instead. Compared to the 1S 80 core, the 1S Max gets 42% better performance, THP disabled, or 30% better performance THP enabled for 60% more cores in your beloved critical-jOPS. Compare that to the Epyc 7763 which gets 105% better THP disabled and 102% THP enabled. Even the older 80C only adds 62% despite doubling its cores. Based on that alone best case scenario is the 2S Altra Max ties the Epyc 2S in critical-jOPS. Sure it is beating the 1S 7763 but it barely beats the 2S 7443, a 24c/48t CPU.

I do work in industry as a VMware Admin. Unless you are running Oracle, most of these will be run on systems up to 32c/64t to max out your VMware license. If you have specific needs you can get the higher frequency parts that also are up to 32c AMD or 28c Intel. The difference in costs for Windows DataCenter for the additional core licenses is saved by reducing the number of physical hosts. What software has "per-core licenses have a multiplier based on CPU type to level out performance differences?" That sounds like they are going to charge you X for Xeon Scalable Gen 1 but Y for Gen 2 and Z for AMD. That doesn't happen. MS SQL Server charges per core with a base license of 4 cores. Now if I need 8 cores on the Altra Max to equal the performance of an Epyc at 4 cores I have doubled my license cost.

Overall ARM with under 10% total market share IS a niche player. They need to get to the same per core performance & have software available for it to be an actual alternative. Until that happens companies will play around with it but nothing serious in the data center environment.

mode_13h - Tuesday, October 12, 2021

> You probably also call Graviton 2 a niche

Don't put words in people's mouths. If you want to know whether @schujj07 considers it a niche, you can certainly ask.

Kangal - Thursday, October 7, 2021

This is basically a 3GHz Cortex-A76 (Neoverse N1), running in a 128-core tandem, and built with a more efficient/expensive Monolithic Socket based on TSMC's 7nm node. Sounds neat.

I enjoyed seeing the older generation which was basically a 2GHz Cortex-A73, running in 64-core tandem, and built on TSMC's 16nm node. Was quite value-for-money, at least in its time.

Seems like this new version is giving Intel's Core-i, decent competition in the single-threaded work. Since Intel is having some issues with their own node, and can't clock too high. Whilst AMD has a clear advantage here. When it comes to total/multi-threaded performance, ARM wins through sheer grunt of all those extra cores. Overall, it is a competitive choice for today and the next few years.

What will be interesting is when they bump it up to the Cortex-A78 (Neoverse V1) and use something like TSMC's 5nm node which should bring it to full-parity on the single-threaded performance against Intel. Or to the next best thing, ARM v9, using the Cortex-X2 (Neoverse N2) on the same TSMC 5nm node. But I share my previous concerns that the first-generation of (USA) ARM v9 is going to be quite disappointing, but I'm optimistic about the (European) second-generation. I think then we should see more tangible benefits, when combined with the TSMC 3nm node, which should bring it on parity to AMD's cores on the single-threaded characteristic. Exciting times ahead. And yes, I know I am over-simplifying things here.

SarahKerrigan - Thursday, October 7, 2021

Previous Ampere parts weren't 64-core, 2GHz, or Cortex-A73. They were a custom (and bad) core, 32 per socket, at 3.3GHz.

Neoverse V1 is based on the Cortex-X1, not the Cortex-A78. Neoverse N2 is based on the Cortex-A710, not the Cortex-X2.

Kangal - Friday, October 8, 2021

Sorry, by "older generation" I was talking about the Amazon Graviton one, not the previous Ampere Version.

The proper upgrade from the Cortex-A76 is the Cortex-A78.

The Cortex-A78 is the base micro-architecture, with the Cortex-X1 being a slightly modified derivative of it, and the Neoverse-V1 is a further slightly modified version of that. That's why I worded it in that way. Whilst ARM claims a divergence between the Cortex-A710, Cortex-X2, and Neoverse-N2... I think we will end up seeing them much more closer in-common than different.

SarahKerrigan - Friday, October 8, 2021

The Graviton1 was 16 Cortex-A72 at 2.3GHz.