Examining Soft Machines' Architecture: An Element of VISC to Improving IPC

by Ian Cutress on February 12, 2016 8:00 AM EST- Posted in

- CPUs

- Arm

- x86

- Architecture

- Soft Machines

- IPC

The VISC Instruction Set and Global Front End

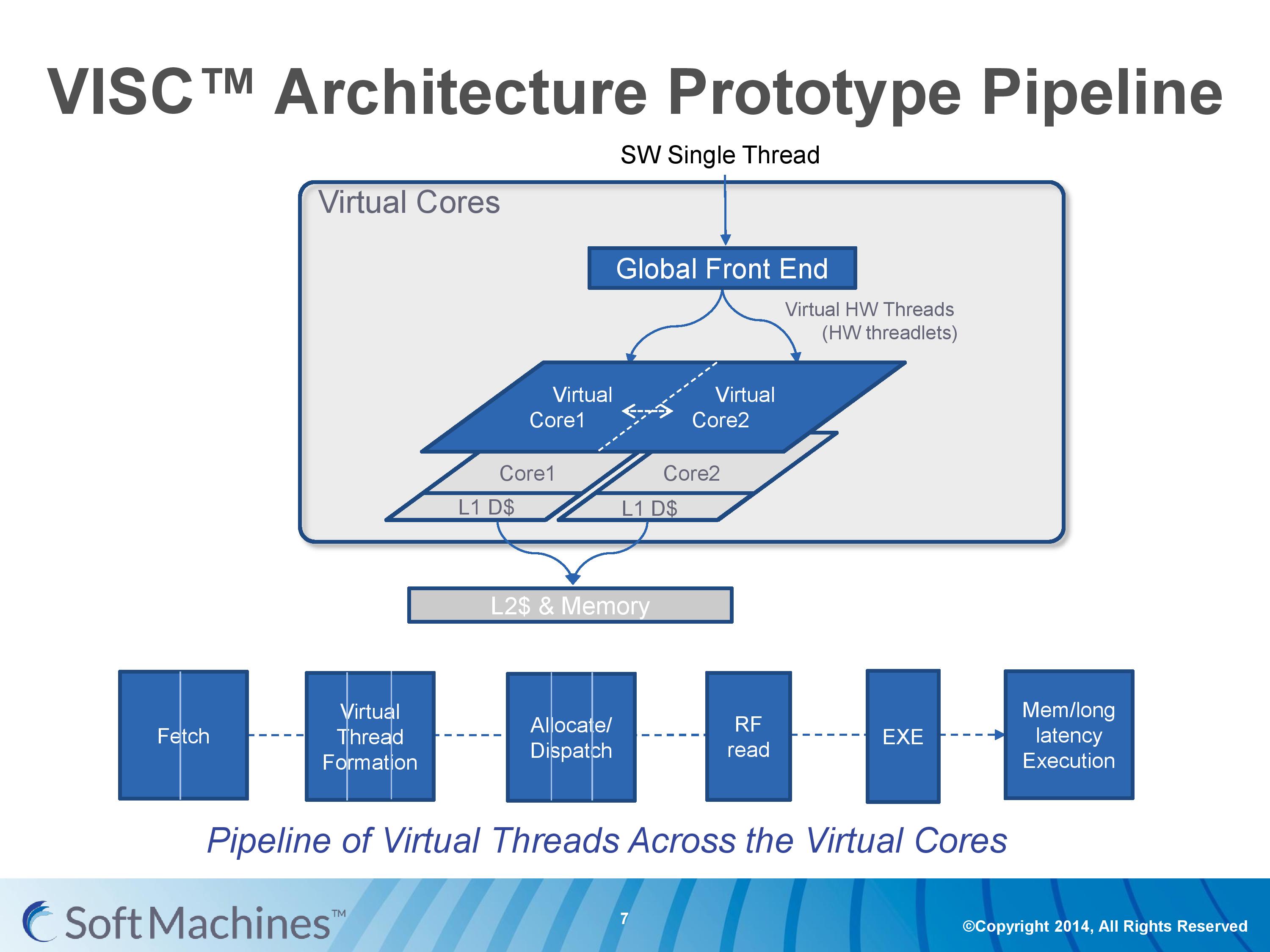

Common instruction set architectures (ISAs) such as x86, ARMv8, Power, SPARC and other more esoteric ones rely on system code converting into predefined instructions that each design can handle. VISC comes with its own ISA as well, separate from the others, which VISC cores and virtual cores use. When using native VISC code, the global front end will split the instructions into smaller ‘virtual hardware threadlets’ which are then dispatched to separate virtual cores. These virtual cores can then issue them to the available resources on any of the physical cores and keep track of where the data goes. Multiple virtual cores can push threadlets into the reorder buffer of a single physical core, which can split partial instructions and data from multiple threadlets through the execution ports at the same time. We were told that each ‘virtual core’ keeps track of the position of the relative output.

The true kicker (and so much of what sets VISC apart) is that when multiple virtual cores are in flight at one time, the core design allows the virtual core allocation of resources to be dynamic on a near-single cycle latency level (we were told from 1-4 cycles depending on the change in allocation). Thus if two virtual cores are competing for resources, there are appropriate algorithms in place to determine what resources are allocated where.

One big area of focus in optimizing processor designs for single-thread performance is speculation – being able to deal with branches in code and/or prefetch relevant data from memory when needed. Typically when speculation occurs, as the data for a single thread is contained within a core, it is easy enough to deal with code paths that rely on previous data or end up with bad speculation.

In the virtual core scenario however this becomes trickier. VISC tackles this in two ways – firstly, the threadlet generation is designed to minimize cross-core communication because this adds latency and reduces performance. Second, each core can communicate through either the register file or the L1 data caches. The register files have a single cycle latency for data but can only transmit tens of values, whereas the L1 cache has a 4-cycle latency but can transmit thousands of values.

Typically communicating through a register file is seen as a risky maneuver and difficult to control, especially when you have multiple physical cores and each core needs each other core to be able to place/take data into the right registers. Soft Machines told us that a large part of their design work has been in this area of speculation and data transfer. Specifically on speculation and branch prediction, we postulated that they were over ten years behind Intel in this, and the response we got was in a similar vein, stating that using Intel’s branch prediction methods could offer at least 20-30% better performance with branching code. However, we were told that the VISC design is quicker to recover in the event of a failed branch, needing only a few cycles.

The Pipeline

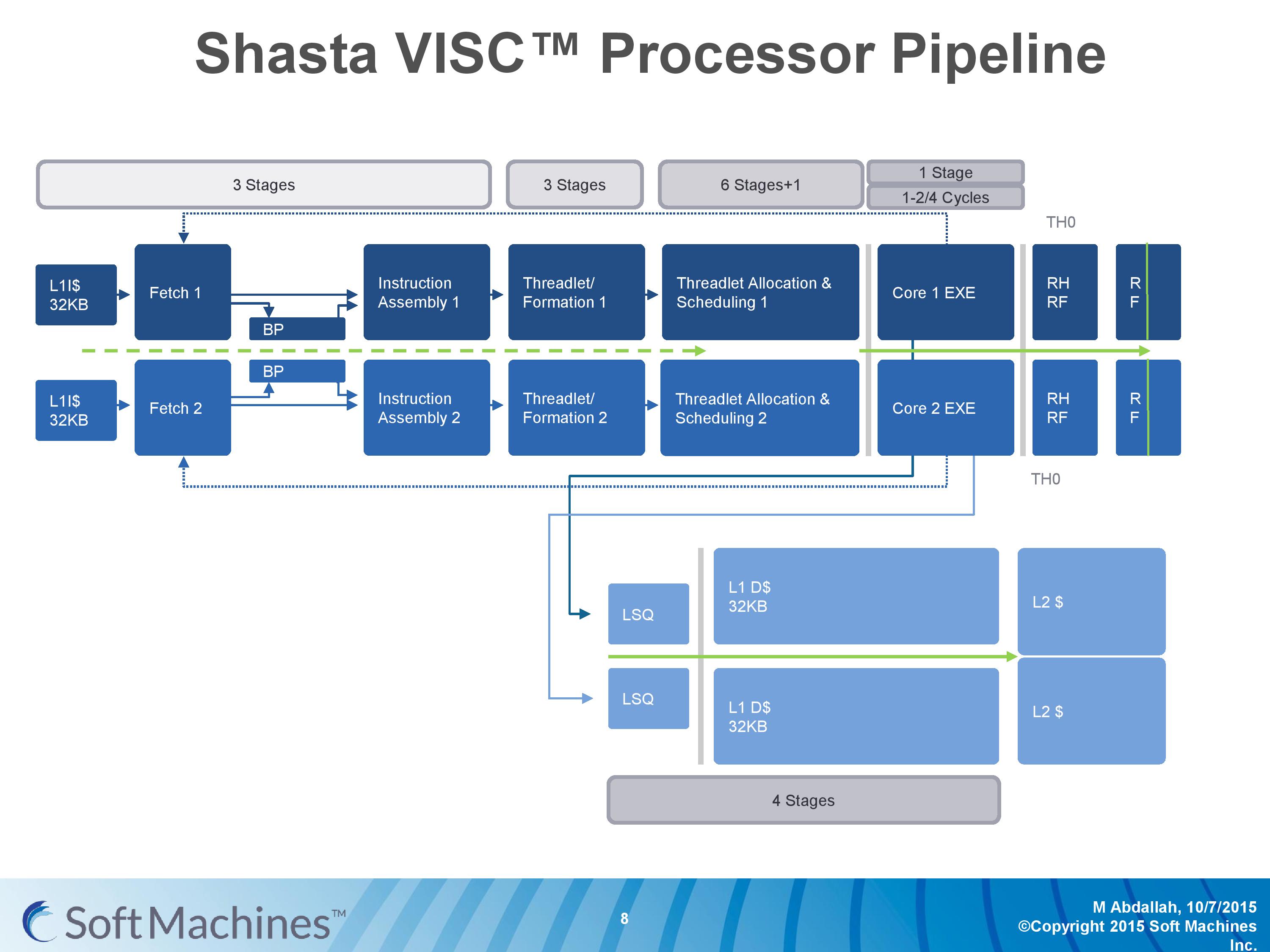

The first VISC core available for license is Shasta, a dual core part that enables up to two virtual cores or threads (2C/2VC), and we were given a base overview of the pipeline.

Normally we would see a pipeline of one core but this is a pipeline of both cores of Shasta. This pipeline, compared to the original VISC prototype, is also deeper. The pipeline looks relatively normal to others to start, where the thread either takes an instruction or issues a fetch for data into the instruction assembly. Making the VISC instructions and data into threadlets takes another three stages, but the allocation and scheduling takes six (plus one). On that subject, Soft Machines mentioned that keeping track of data across multiple cores per virtual core is tricky, as well as dealing with reorder buffers and parallel instruction management, that’s why there are a large amount of stages here. The plus one goes back to variable physical core allocation methodology, ensuring that if there are two threads active that the heavier one will get the most resources. The threadlets are then executed on the ports of each core, with a possible 1-4 cycle delay if data needs to be transferred across the core boundaries via registers or L1 cache.

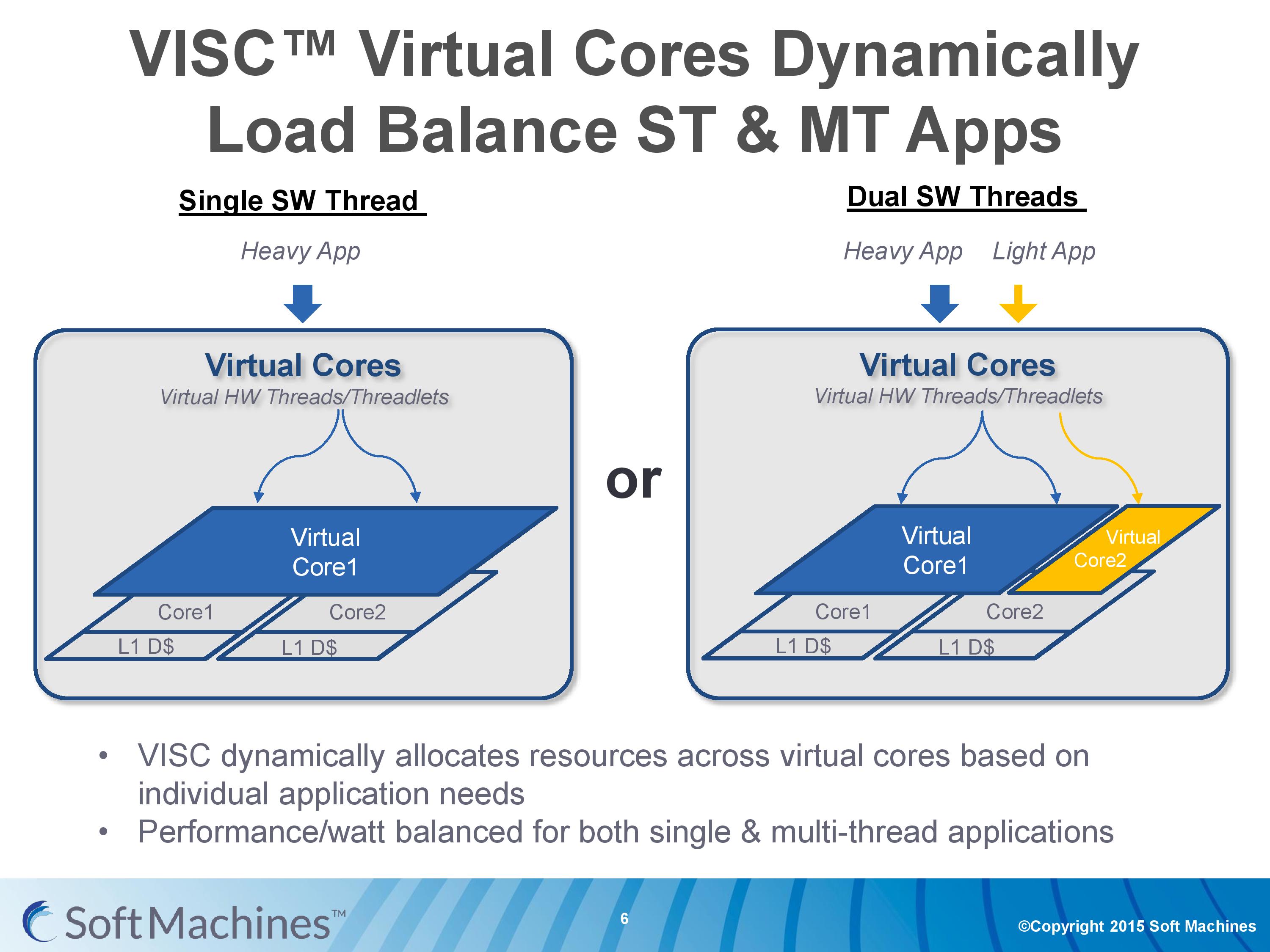

With the variable allocation of fractions of a core to a virtual core, VISC is designed for this situation:

If one heaver thread needs more resources, it can take them from idle ports on a second core (or third, or fourth). The virtual cores can be configured at the software stage as well to limit their use (e.g. keep a VC to half a physical core), and this can be configured at runtime at the expense of 10-12 cycles. There is a quality of service implementation as well, so if a virtual core takes a high priority thread, it will have access to more resources by default.

97 Comments

View All Comments

ddriver - Saturday, February 13, 2016 - link

All abbreviations are capitalized, not just acronyms, idiot. Whether it is an acronym or not depends on how it is pronounced.erple2 - Saturday, March 12, 2016 - link

Enough people confuse initialisms and acronym that it probably doesn't matter anymore.FunBunny2 - Friday, February 12, 2016 - link

If SMI wants this to be believed, then just publish a paper (in a peer reviewed journal) showing how VISC invalidates Amdahl's Law. This is, after all, what they're really claiming.willis936 - Friday, February 12, 2016 - link

Could you explain how they're claiming to invalidate Amdahl's law ?FunBunny2 - Friday, February 12, 2016 - link

as I read the piece, SMI is implying/claiming performance improvement in running serial code in a parallel fashion, and Amdahl says you can't do that. if, OTOH, the claim is that VISC is able to suss out parallel execution in superficially serial code, then that process has to be proven to exist algorithmically. as the piece goes to some length to describe, based on what's been provided by SMI, it much like smoke and mirrors.Arnulf - Friday, February 12, 2016 - link

I read their claims as an expansion of superscalar design. Nothing new here and certainly nothing breaking any "laws". It still cannot magically make non-parallelizable code run faster than it normally would.Samus - Monday, February 15, 2016 - link

If their decoder can break up serial code and run it through different cores optimized to do different things better, this would theoretically complete the code faster because their will be no pipeline penalty.Personally. I think we have better odds of seeing a quantum processor before this type of thing taking off, though. That is to say, no time soon.

gamerk2 - Friday, February 12, 2016 - link

Kinda. There will still be a limited performance simply because some operations can not be made parallel under any circumstances, but Soft Machines is really taking ILP to the extreme here.xthetenth - Friday, February 12, 2016 - link

No it really isn't and you're profoundly misunderstanding Amdahl's law. All that says is how much an improvement to a portion of a workload's execution speed will affect the workload as a whole's execution speed. Meanwhile what they're doing is trying to extract parallelism from single threads, which means that they're speeding up a greater fraction of the code. Funnily enough, you can use Amdahl's law to predict when this method (shrinking the non-improved section to allow higher maximum speed) is more effective than things like clocking higher.I suspect what you're doing is confusing the law with an explanation/common use of the law because it is very popular to use it to show there's a limit on the gains that can be made by parallel processors.

Drumsticks - Friday, February 12, 2016 - link

It doesn't really invalidate Amdahl's Law. Serial code still can't be run on multiple cores. As I understand it, it only allows extracting more ILP using an ultra ultra wide design when possible.